The Classroom Has Already Changed (Whether You Notice or Not)

Five Foundational Theories for the AI Era

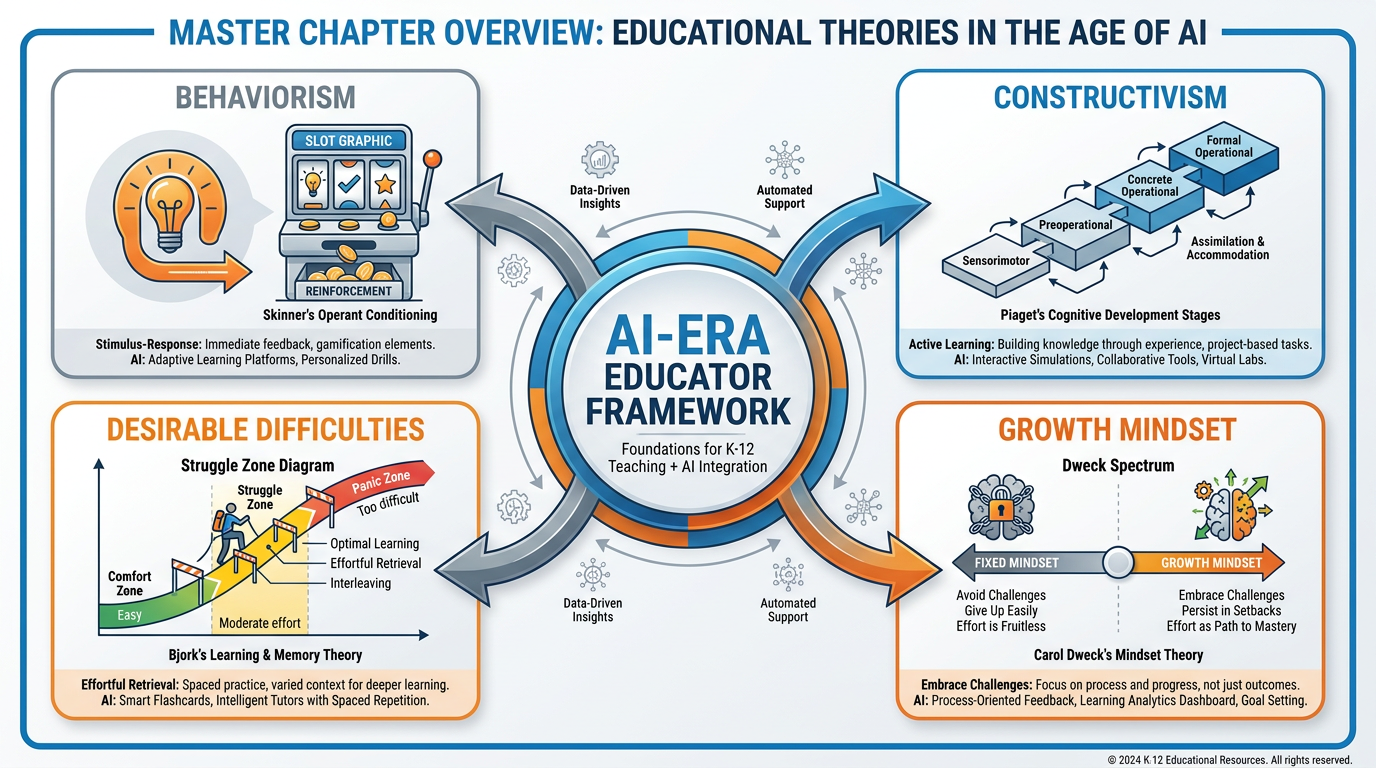

Figure 1: Chapter 1 at a Glance. Five theories, one century of learning science, and one disruptive technology, mapped together. By the end of this chapter, each of these concepts will feel like an old friend.

1.1 A Tale of Two Tuesdays: Two Teachers, Same Lesson, Different Centuries

It’s Tuesday morning. Third period. Thirty-two students. A lesson on the American Revolution.

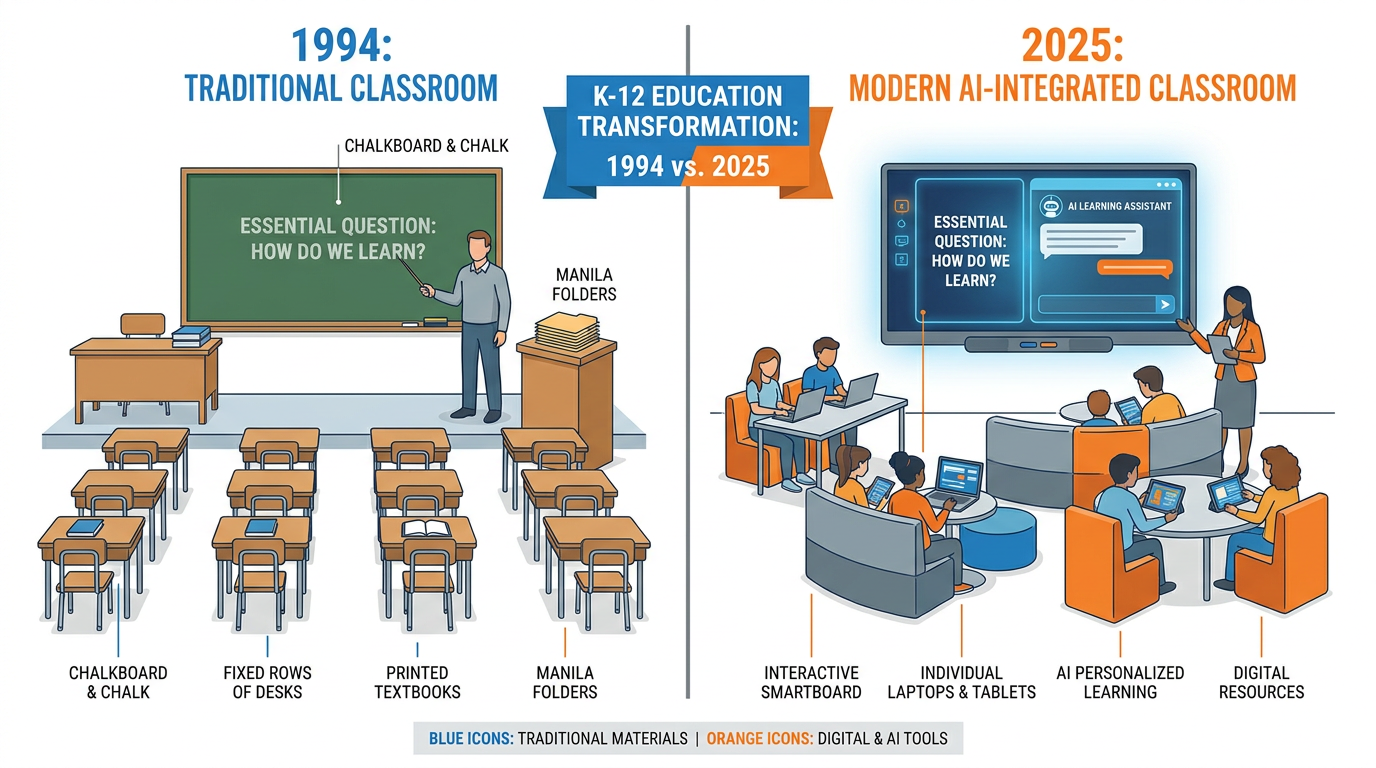

The year is 1994. The teacher — let’s call her Ms. Patterson — arrives with a manila folder, a ditto machine copy of a primary source document, and a yellow highlighter she’s been refilling since 1987. She writes the essential question on the chalkboard in careful cursive: Why do people revolt? The room smells like chalk dust and floor wax. Students copy the question into spiral notebooks. Some listen. Some don’t. Ms. Patterson does what good teachers have always done: she reads the room, she adjusts, she cares. She knows that Marcus in the third row is brilliant but bored, and she finds a way to call on him three times today in ways that make him lean forward.

Now fast forward. It’s Tuesday morning, same lesson, thirty-one years later.

The teacher — let’s call her Ms. Rivera — arrives to find that five students have already submitted an AI-generated “outline” of the causes of the American Revolution, copied directly from ChatGPT. Two more have AI-generated essays that are technically accurate, beautifully formatted, and completely hollow. One student, Jaylen, has done something unexpected: he’s used an AI chatbot to argue against the revolution, and he wants to debate the class. The essential question is the same. Why do people revolt? The stakes are entirely different.

Here’s the thing. Nothing fundamental about learning has changed between those two Tuesdays. The human brain still works the same way. Memory still consolidates the same way. Adolescent development still follows the same arc. Motivation still lives in the same deep structures. What has changed is the landscape — the external environment in which learning either happens or doesn’t.

Ms. Patterson’s biggest technological disruption was the overhead projector. Ms. Rivera’s is a tool that can write a five-paragraph essay in four seconds, explain photosynthesis in twelve different languages, and simulate a Socratic dialogue with a seventh grader at 2 a.m.

The teachers who will thrive in Ms. Rivera’s world are not the ones who pretend it still looks like Ms. Patterson’s. And they’re not the ones who hand over the keys to the machine either. They’re the ones who understand both what has changed and, more importantly, what has not.

That’s what this chapter is about.

Figure 2: Two Tuesdays. The essential question is the same. The landscape is unrecognizable. Understanding the difference between what changed and what didn’t is the first act of AI literacy.

1.2 The Oldest Dream in Education: A Tutor for Every Student

There’s a finding that every learning scientist knows. Benjamin Bloom reported it in 1984, and it became one of the most cited results in all of education research. He called it the “2 Sigma Problem.”

Here’s the setup: Bloom compared three groups of students. The first group learned through conventional classroom instruction — one teacher, thirty kids, a lecture, a test. The second group did the same but with mastery-based learning: they couldn’t move on until they’d actually demonstrated understanding. The third group received one-on-one tutoring. Not just any tutoring. Real, responsive, adaptive, personal instruction from a knowledgeable human being.

The results were staggering. The average student with a personal tutor performed two standard deviations better than the average student in a conventional classroom. Two sigma. That means the average tutored student outperformed about 98% of students in conventional instruction.
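If you want to check the arithmetic behind "two sigma means about the 98th percentile," here is a minimal sketch. It assumes scores in the conventional classroom are normally distributed, which is a simplification; the function name `percentile_for_effect_size` is made up for this illustration.

```python
from statistics import NormalDist


def percentile_for_effect_size(sigma: float) -> float:
    """Percent of a normally distributed comparison group scoring
    below a student who sits `sigma` standard deviations above its mean."""
    # Assumption: the comparison group's scores follow a normal curve.
    return NormalDist().cdf(sigma) * 100


print(round(percentile_for_effect_size(2.0), 1))  # 97.7
print(round(percentile_for_effect_size(1.0), 1))  # 84.1
```

The 2.0 case rounds to the 98% figure quoted above. The 1.0 case is included only for comparison, to show how much each additional sigma is worth.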

Bloom called it a “problem” because the implication was clear and devastating: we know what works. We just can’t afford it. One human tutor for every student is financially impossible. It was an unreachable ideal.

Until now.

Not because AI tutors are perfect — they’re not. They hallucinate, they don’t actually understand anything, and they can’t notice that a student is about to cry. But for the first time in the history of mass education, a version of the personalized tutor is available to every student with a phone, at any hour, in dozens of languages, at zero marginal cost.

That’s not a small thing. That’s a civilizational shift.

The question for you — the teacher standing in front of those thirty kids on a Tuesday morning — is not whether your students will have access to an AI tutor. They already do. The question is what you will do with that reality. Will you compete with it, ignore it, or figure out what it means for the irreplaceable things only you can do?

One Teacher. Many Students.

One pace for everyone

Feedback arrives days after the work

Struggling students fall behind silently

Advanced students hit ceilings

The teacher is the only source of explanation

This model was designed for an industrial economy that needed compliant workers who all learned the same things at the same time.

One Teacher. AI Support. Human Heart.

Differentiated pace supported by AI feedback

Instant explanations available on demand

Students surface confusion in real time

Advanced students get extension challenges

The teacher focuses on what only humans can do: connection, context, judgment, wisdom

This model requires more from teachers, not less — but the right kind of more.

1.3 The Mirror Image: A Teaching Assistant for Every Teacher

Bloom’s 2 Sigma Problem is usually told from the student’s side. But there’s a mirror image that gets less attention.

Think about what a great teaching assistant does. They prep materials. They give feedback on drafts. They pull data on who’s struggling and why. They write the first pass of a rubric. They transcribe and summarize. They research background reading. They build practice sets. They do the hundred invisible tasks that make great teaching possible: the scaffolding work that great teachers want to do but never have time for, because there are thirty-two students and only one teacher.

That’s also what AI can do. For teachers.

For the first time, every teacher has access to something that functions like a tireless, never-complaining, well-read research assistant who is available at midnight before a Friday deadline. It won’t replace your judgment. It can’t replace the years you’ve spent learning to read a classroom, to know when to push and when to pull back, to understand that Marcus in the third row needs to be challenged in a specific way. But it can draft the first version of everything.

This book is built around that dual reality: AI as tutor for students, AI as assistant for teachers. The rest of this course will teach you to use both of those capabilities with skill and intentionality. But first, you need to understand the risks — because the same technology that can accelerate learning can also quietly hollow it out.

1.4 The Risk Nobody Wants to Name: When “Easy” Becomes Dangerous

Here’s the conversation nobody in the tech industry wants to have.

When learning is too easy, it doesn’t stick.

That sounds obvious when you say it out loud. Of course struggle is part of learning. We all know this. We’ve felt it — the moment something finally clicks after you’ve been wrestling with it for days feels completely different from the moment you copy down an answer someone told you. The first moment creates a memory. The second creates a note you’ll lose by Thursday.

But here’s where it gets uncomfortable: AI is the most powerful “easy” button ever built. Ask it anything. Get an answer. Move on. No confusion. No friction. No struggle. No learning.

The students who use AI as a bypass — not as a tool, but as a replacement for thinking — are doing something that feels like learning but isn’t. They’re producing outputs without building the cognitive structures that make those outputs meaningful. It’s the educational equivalent of reading the summary instead of the book, but faster, more convincing, and more dangerous because it’s invisible.

You can’t always see it on the paper. The paper looks great. The argument is coherent. The citations are real. And the student, if you asked them to talk through their thinking, would be lost.

This is the risk nobody wants to name. Not because it’s new — teachers have always had to fight the gap between performed understanding and actual understanding. But because the scale and sophistication of AI has made the gap wider, the performed understanding more convincing, and the path of least resistance more seductive than it has ever been.

The good news — and there is genuinely good news — is that learning science has known for decades how to make this work. It just hasn’t always been easy to implement. Now it is.

1.5 How Brains Actually Learn: Neurons That Fire Together, Wire Together

Before we talk about AI, we need to talk about memory. Not memory as in “you remembered the answer on the test.” Memory as in: the fundamental biological process by which experience becomes knowledge.

Here’s the basic story. Your brain is a network of roughly 86 billion neurons. Each neuron is connected to thousands of others through structures called synapses. When you experience something — hear a story, solve a problem, make a mistake, feel surprised — neurons fire. When neurons fire together, repeatedly, the synapse between them gets stronger. The connection becomes easier to activate. The pathway gets carved deeper.

This is often summarized as Hebb’s Rule: “Neurons that fire together, wire together.” It’s reductive, but it’s pointing at something real. Learning, at the biological level, is the process of strengthening neural pathways through activation.
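To make the slogan concrete, here is a toy sketch of the simplest textbook form of a Hebbian update, where the change in connection strength is proportional to the product of the two neurons' activity. It is a cartoon, not a model of real neurons; the function name, the learning rate of 0.1, and the 0/1 activity values are all assumptions chosen for illustration.

```python
def hebbian_update(weight, pre, post, learning_rate=0.1):
    """Basic Hebbian rule: delta_w = learning_rate * pre * post.

    `pre` and `post` are the activity levels of the two connected neurons.
    The weight only grows when both are active at the same time.
    """
    return weight + learning_rate * pre * post


# Ten trials where both neurons fire together.
weight = 0.0
for _ in range(10):
    weight = hebbian_update(weight, pre=1.0, post=1.0)
together = weight

# Ten trials where only the first neuron fires.
weight = 0.0
for _ in range(10):
    weight = hebbian_update(weight, pre=1.0, post=0.0)
apart = weight

print(f"fired together: {together:.2f}, fired apart: {apart:.2f}")
```

The connection strengthens only in the first case, which is the whole point of the rule: repetition alone does nothing unless the neurons are active together.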

The implications for teaching are enormous.

First: memory is reconstructive, not a recording. Your students don’t store information like a hard drive. They reconstruct it each time, layering new experience on top of existing patterns. This means what they already know profoundly shapes what they can learn next. (Piaget had a lot to say about this. We’ll get there.)

Second: retrieval is more powerful than review. The act of pulling information out of memory — struggling to remember, testing yourself, being asked a question you have to really think about — actually strengthens the neural pathway more than re-reading or re-watching does. This finding (from research by Roediger, Karpicke, and others) is called the testing effect or retrieval practice effect, and it’s one of the most replicated results in all of cognitive psychology.

Third: spaced repetition beats massed practice. Studying something intensively for one night leaves shallower traces than returning to it across multiple sessions. Your brain consolidates memories during sleep and rest. The classic “cram and forget” experience is not a bug; it’s the predictable consequence of massed practice without spacing.
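What does spacing look like on a calendar? The sketch below turns one study session into a series of review dates with widening gaps. The gaps of 1, 3, 7, and 14 days are illustrative assumptions, not values prescribed by the research, and `spaced_review_dates` is a hypothetical helper name.

```python
from datetime import date, timedelta


def spaced_review_dates(first_study, gaps_in_days=(1, 3, 7, 14)):
    """Return review dates, each one `gap` days after the previous session."""
    dates = []
    current = first_study
    for gap in gaps_in_days:
        current = current + timedelta(days=gap)
        dates.append(current)
    return dates


for review in spaced_review_dates(date(2025, 9, 2)):
    print(review.isoformat())
# 2025-09-03
# 2025-09-06
# 2025-09-13
# 2025-09-27
```

Four short returns across almost four weeks cover the same material that a single cram session would, but each return forces a fresh retrieval after some forgetting has set in.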

Now look at most educational AI tools. Many of them violate all three of these principles simultaneously. They answer questions instead of prompting retrieval. They provide information in continuous streams instead of spaced return sessions. They remove the reconstructive work by handing students polished explanations that bypass the neurons-struggling-to-fire experience entirely.

This doesn’t mean AI is bad for learning. It means how you use AI with students is everything.

1.6 The Behaviorist Inheritance: Why Most EdTech Feels Like a Slot Machine

Let me tell you about a hungry cat in a box.

In the late 1890s, Edward Thorndike built a “puzzle box”: a wooden cage with a latch mechanism inside. He put a cat in the box with food visible on the outside. The cat would scratch, push, and stumble around randomly. Eventually, by accident, it hit the latch and escaped to the food. Thorndike put it back in the box. The next escape took less time. Then less. Then less. The cat wasn’t thinking about the latch. It was learning through consequences: behavior that produces reward gets repeated.

Thorndike called this the Law of Effect: responses followed by satisfying outcomes are strengthened; responses followed by unsatisfying outcomes are weakened.

Around the same time, Ivan Pavlov rang a bell every time he fed dogs. Eventually the dogs salivated at the bell alone. No food required. A neutral stimulus had been paired with a meaningful one until the neutral stimulus alone could trigger the response. This is classical conditioning: the brain learning to predict.

Then came B.F. Skinner, who took all of this and built it into a comprehensive theory of behavior and a political philosophy of education. Skinner’s operant conditioning said this: all behavior is shaped by its consequences. Reinforce a behavior and you get more of it. Punish it and you get less. Given the right schedule of reinforcement, you can shape any behavior in any organism, including humans.

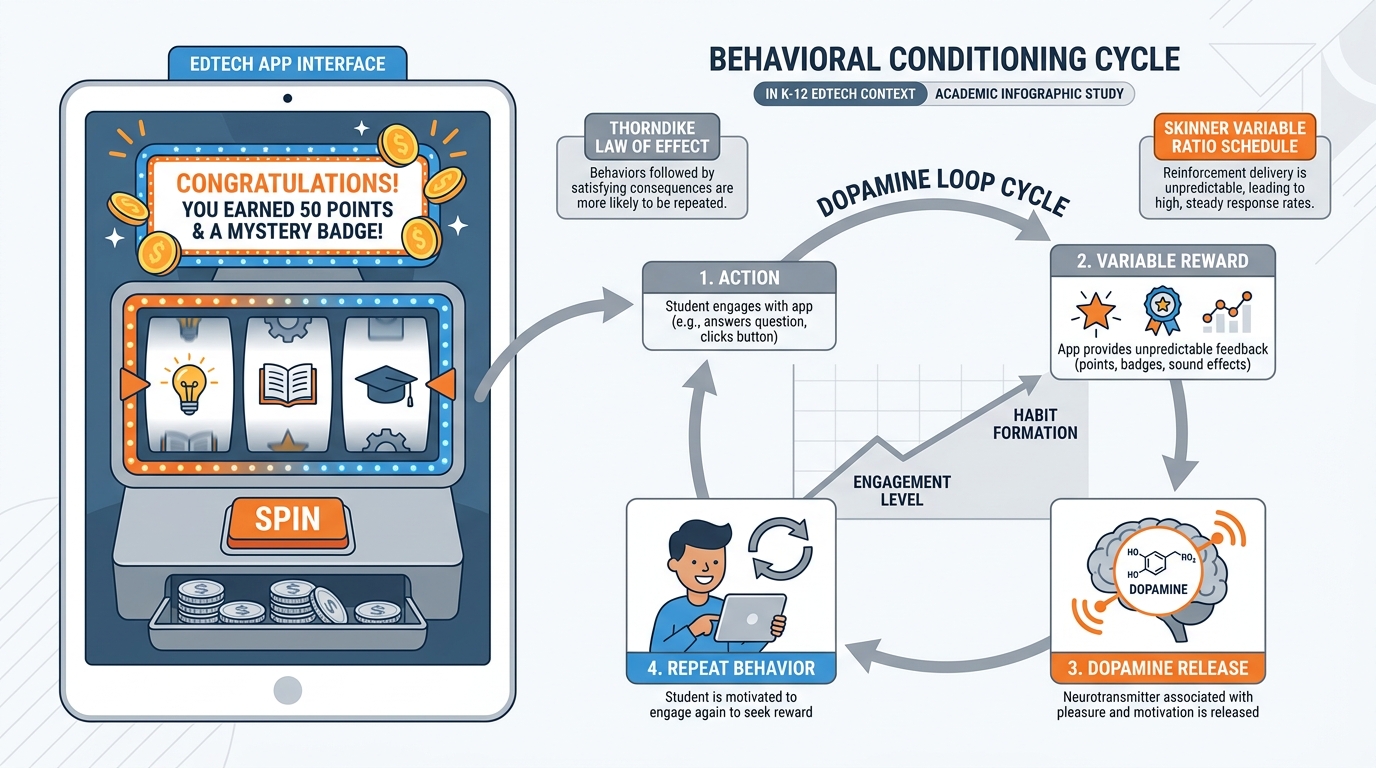

Here’s where it gets uncomfortable: most educational technology — from the first “teaching machines” of the 1950s to Duolingo in 2025 — is Skinnerian to its core. Points. Badges. Streaks. Levels. “Correct! Great job!” Sounds. Animations. Notifications. Variable reward schedules (the most addictive kind, famously used in slot machines) deployed at scale across millions of children.

Figure 3: The EdTech Slot Machine. Variable reward schedules (pioneered by Skinner, perfected by casinos, deployed in educational apps) maximize engagement without necessarily maximizing learning. Know the difference between motivation and manipulation.

Behaviorism is not wrong. It’s partial. It explains a real and important layer of how humans learn. Reinforcement works. Practice works. Feedback works. The problem is what it misses: the internal world. Meaning. Understanding. The experience of insight. The development of a mind, not just a behavior.

Behaviorist EdTech optimizes for engagement metrics. Minutes on platform. Daily streaks. Points earned. These metrics are measurable, so they get optimized. Understanding is harder to measure, so it gets less attention. The result is technology that can keep a child clicking for two hours and leave them knowing almost nothing they didn’t know before they started.

Where the analogy breaks down: a slot machine has no interest in whether you win. Some EdTech genuinely does. The best adaptive learning systems use behavioral data to do something behaviorism alone couldn’t — build a model of what a student actually knows and doesn’t know, then direct their attention toward genuine gaps. The shell is Skinnerian. The core can be constructivist.
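What does "build a model of what a student actually knows" look like in practice? One well-known approach is Bayesian Knowledge Tracing, sketched below. This is an illustration of that general technique, not a description of how any particular product works, and the slip, guess, and learn probabilities are made-up values.

```python
def bkt_update(p_known, correct, p_slip=0.1, p_guess=0.2, p_learn=0.15):
    """One step of Bayesian Knowledge Tracing for a single skill.

    p_known: current estimate that the student has mastered the skill
    p_slip:  chance a student who knows it still answers wrong (assumed)
    p_guess: chance a student who doesn't know it answers right (assumed)
    p_learn: chance of learning the skill during this practice step (assumed)
    """
    if correct:
        evidence = p_known * (1 - p_slip)
        posterior = evidence / (evidence + (1 - p_known) * p_guess)
    else:
        evidence = p_known * p_slip
        posterior = evidence / (evidence + (1 - p_known) * (1 - p_guess))
    # Allow for learning that happens as a result of the attempt itself.
    return posterior + (1 - posterior) * p_learn


p = 0.3  # assumed starting estimate
for answer in [True, True, False, True]:
    p = bkt_update(p, answer)
    print(f"{'correct' if answer else 'wrong'}: P(known) = {p:.2f}")
```

Notice that the input is still purely behavioral (right or wrong), but the output is an estimate of an internal state. That estimate is what lets a system steer practice toward genuine gaps instead of simply rewarding clicks.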

Why this matters for AI: AI-powered tutors can be far more sophisticated than the slot machine model. They can provide corrective feedback that explains why an answer is wrong, not just that it is. They can adjust challenge level in real time. They can ask follow-up questions that force retrieval. But they can also be deployed in purely behaviorist ways — praise dispensers, hint machines, answer finders. The technology is neutral. How it’s used is not.

Deep Dive: Skinner’s Teaching Machines

In the 1950s, B.F. Skinner built a mechanical “teaching machine” that presented students with questions, required a written response, then revealed the correct answer. Students moved at their own pace, received immediate feedback, and progressed through carefully sequenced material. Skinner believed this was the future of education.

He was partly right. The principles he identified (immediate feedback, mastery before progression, self-pacing) are now cornerstones of the most effective adaptive learning systems. What he got wrong was the assumption that all meaningful learning could be decomposed into stimulus-response pairs. It can’t. But understanding the lessons he was first to grasp is still essential to using today’s tools wisely.

1.7 Productive Struggle: The Forgotten Engine of Real Learning

This is one of the most important ideas in this entire course. Read it twice.

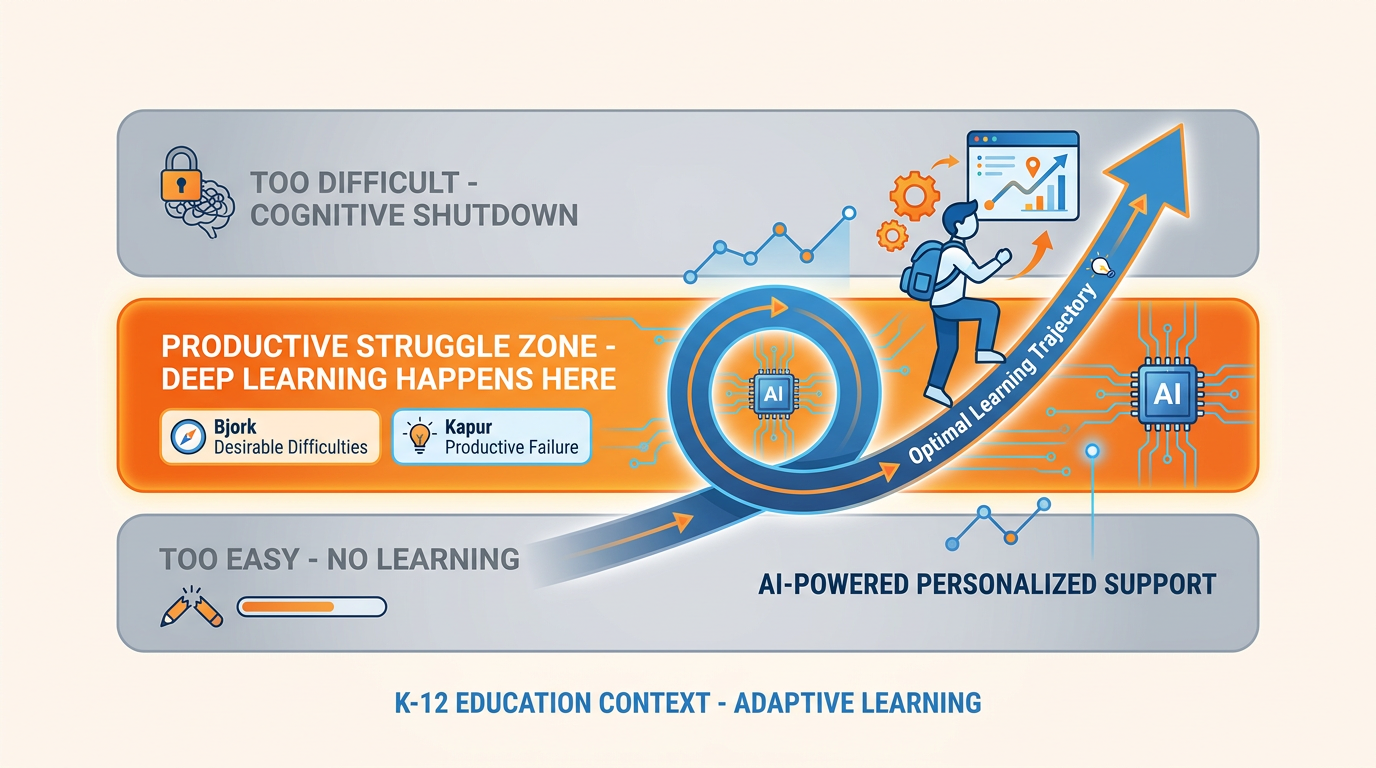

Robert Bjork at UCLA has spent decades studying the difference between performance and learning. Here’s the insight: what makes performance go up in the short term often makes learning go down in the long term, and vice versa.

When learning feels smooth and easy — when the explanation is clear, the examples are perfectly pitched, the feedback is instant and affirming — students perform well right now. Ask them the same question three days later and the answer has often evaporated.

When learning feels effortful and a bit uncomfortable — when the student has to struggle to retrieve an answer, when the problem doesn’t perfectly pattern-match to the example, when there’s a gap between what they know and what they need to know — performance in the moment goes down. But learning goes up. The struggle activates deeper processing. The neural pathways get carved more deeply.

Bjork calls these Desirable Difficulties: constraints and challenges that are frustrating in the short term and powerfully effective in the long term. The major desirable difficulties include:

Spaced practice — returning to material over time rather than massing it

Interleaving — mixing different types of problems rather than blocking by type

Retrieval practice — testing yourself instead of re-reading

Varying conditions — practicing in different contexts to prevent over-fitting to one context

Generation effects — trying to produce an answer before seeing it, even if you’re wrong
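Interleaving is the easiest of these to see in a concrete practice set. The sketch below builds the same nine problems two ways: blocked by type, and interleaved round-robin. The problem labels and function names are placeholders invented for this example.

```python
from itertools import chain, zip_longest


def blocked(problem_sets):
    """All problems of one type, then all of the next (blocked practice)."""
    return list(chain.from_iterable(problem_sets.values()))


def interleaved(problem_sets):
    """Round-robin across types so consecutive problems differ in type."""
    rounds = zip_longest(*problem_sets.values())
    return [p for group in rounds for p in group if p is not None]


sets = {
    "fractions": ["F1", "F2", "F3"],
    "decimals": ["D1", "D2", "D3"],
    "percents": ["P1", "P2", "P3"],
}
print(blocked(sets))      # ['F1', 'F2', 'F3', 'D1', 'D2', 'D3', 'P1', 'P2', 'P3']
print(interleaved(sets))  # ['F1', 'D1', 'P1', 'F2', 'D2', 'P2', 'F3', 'D3', 'P3']
```

In the blocked version, the student can solve F2 by repeating whatever worked on F1. In the interleaved version, every problem begins with the harder question of which kind of problem this is, and that extra decision is the desirable difficulty.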

Manu Kapur at ETH Zurich has extended this idea into what he calls Productive Failure. In a series of elegant experiments, Kapur gave students complex problems before they’d been taught the relevant concept. They failed. They struggled. They generated wrong answers. Then they received direct instruction. Their learning was significantly better than students who received direct instruction first.

Why? Because the struggle phase forced students to activate everything they did know and bump it against the problem. When the instruction came, they had hooks to hang it on — genuine intellectual need met by the relevant insight at exactly the right moment.

Figure 4: The Productive Struggle Zone. Too easy and the brain coasts. Too hard and the brain shuts down. The zone of productive struggle (challenging enough to require real effort, achievable enough to maintain engagement) is where genuine learning lives.

Now here’s the collision with AI. AI collapses the productive struggle zone. Ask it a question and you get an answer. No generation effect. No retrieval struggle. No desirable difficulty. Just a smooth, polished response that delivers you to the answer without requiring you to make the journey.

For the student who has already learned the material, AI is a legitimate accelerant. For the student who is in the middle of learning it, AI as answer-dispenser is educationally destructive. The challenge for you as a teacher is to know which student you’re looking at and design your AI use accordingly.

Where this idea has limits: Productive failure requires students to have enough prior knowledge to struggle meaningfully. Completely novel material with no scaffolding produces unproductive failure: confusion and frustration that teach nothing. AI can actually play a powerful role in providing just enough scaffolding to keep struggle productive without collapsing it into ease.

The practical question: When you design an assignment, ask yourself: where is the struggle? If AI can eliminate all of it, the assignment needs to be redesigned. Not to ban AI — but to move the intellectual demand to a place where the tool is a scaffold, not a bypass.

Kapur’s Key Insight — Why “Getting It Wrong First” Works

In Kapur’s studies in Singapore schools, students given problems before instruction consistently outperformed students who received instruction and then problems, even though the first group failed during the problem phase.

The explanation: failure in a low-stakes environment activates prior knowledge, reveals conceptual gaps, and creates what Kapur calls “intellectual need.” When instruction arrives, it’s not entering a blank mind — it’s entering a mind that’s been actively asking the question the instruction answers.

Design your AI use to trigger intellectual need before students get the AI-generated answer, not after.

1.8 The Death of the Answer (and Why That’s Actually Good News)

For most of the history of education, the scarce resource was information. The teacher had it. The textbook had some of it. The library had more. Students didn’t have ready access to information — and that scarcity gave rise to a particular kind of educational task: retrieve the information and demonstrate that you have it.

Define photosynthesis. List the causes of World War I. Solve for x. Name the capital of Peru.

These tasks made sense in a world where the information wasn’t freely available. Testing whether students could retrieve information told you something meaningful about whether they’d learned it.

Now information is free. Not just free — it’s instantaneously, eloquently, endlessly available. Any student with a phone can get a better definition of photosynthesis in four seconds than most textbooks provide. The “retrieve and demonstrate” task has been made trivially easy by the same technology that makes it trivially uninteresting.

The death of the answer is the death of that task as the primary unit of educational exchange. And while that sounds like a crisis, it’s actually a liberation.

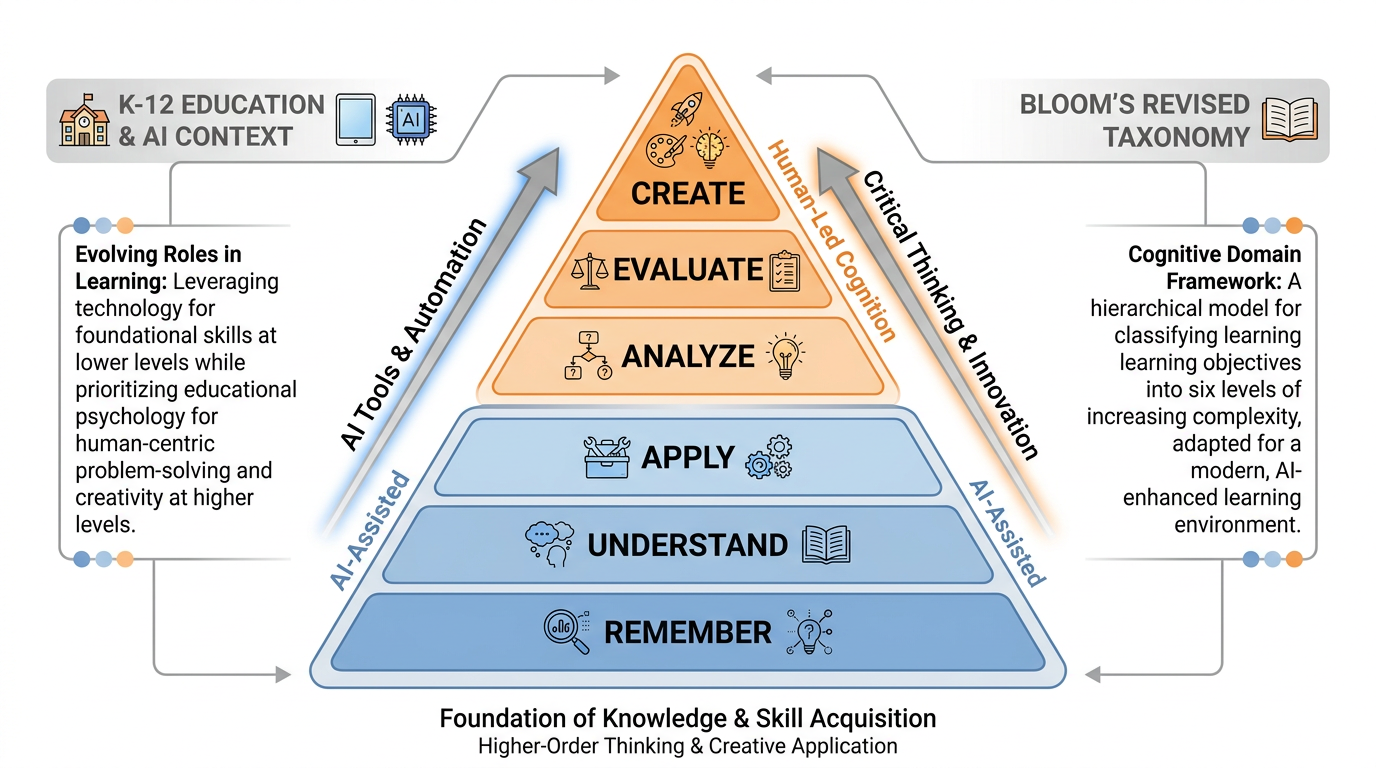

Because the interesting cognitive work was never in the retrieval. It was in what you do with what you’ve retrieved. The analysis, the argument, the synthesis, the judgment, the creation. Those are the tiers of Bloom’s Taxonomy that most K-12 education historically underweighted because there was so much time spent at the bottom.

Figure 5: Bloom’s Taxonomy in the AI Era. AI handles the bottom three tiers competently and quickly. Your instructional investment should be in the top three (analysis, evaluation, and creation), where the irreducible human work lives.

AI can handle Remember, Understand, and Apply with competence and speed. It does reasonably well at parts of Analysis. It struggles genuinely with Evaluation that requires ethical judgment, lived experience, and contextual nuance. It cannot Create in the way a human can — not because it lacks the capability to produce text or images, but because creation, in the educational sense, is about the transformation of the creator, not just the product.

Your instructional focus — right now, today — should be shifting up the taxonomy. Not because the lower tiers don’t matter (they do, as prerequisite knowledge), but because they no longer require the same amount of instructional time and can be supported and scaffolded by AI in ways that free you to do the harder, more valuable work.

1.9 Piaget Didn’t Know About ChatGPT (But He Was Still Right)

Jean Piaget was a Swiss developmental psychologist who spent decades watching children — really watching them — and concluded that the development of human intelligence follows a predictable sequence of stages, each with its own cognitive architecture, its own logic, its own possibilities and limits.

He called his framework constructivism — not in the political sense, but in the cognitive one. Children don’t receive knowledge passively, like a bucket being filled. They construct it actively, building mental models (Piaget called them schemas) through two complementary processes:

Assimilation: taking new experience and fitting it into an existing schema (“this four-legged animal is a dog”)

Accommodation: encountering something that doesn’t fit, then restructuring the schema to incorporate it (“that four-legged animal is not a dog — it has a mane and it’s huge — I need a new category”)

The motor of learning, for Piaget, is cognitive disequilibrium: the discomfort of encountering something that doesn’t fit your current model. Disequilibrium demands resolution. Resolution requires schema restructuring. Schema restructuring is learning.

Notice what this means: learning requires encountering genuine surprise, genuine difficulty, genuine not-fitting. It requires, in other words, the very thing that AI as a smooth answer-machine eliminates. The desirable difficulty we talked about in Section 1.7 is, at the Piagetian level, cognitive disequilibrium by another name.

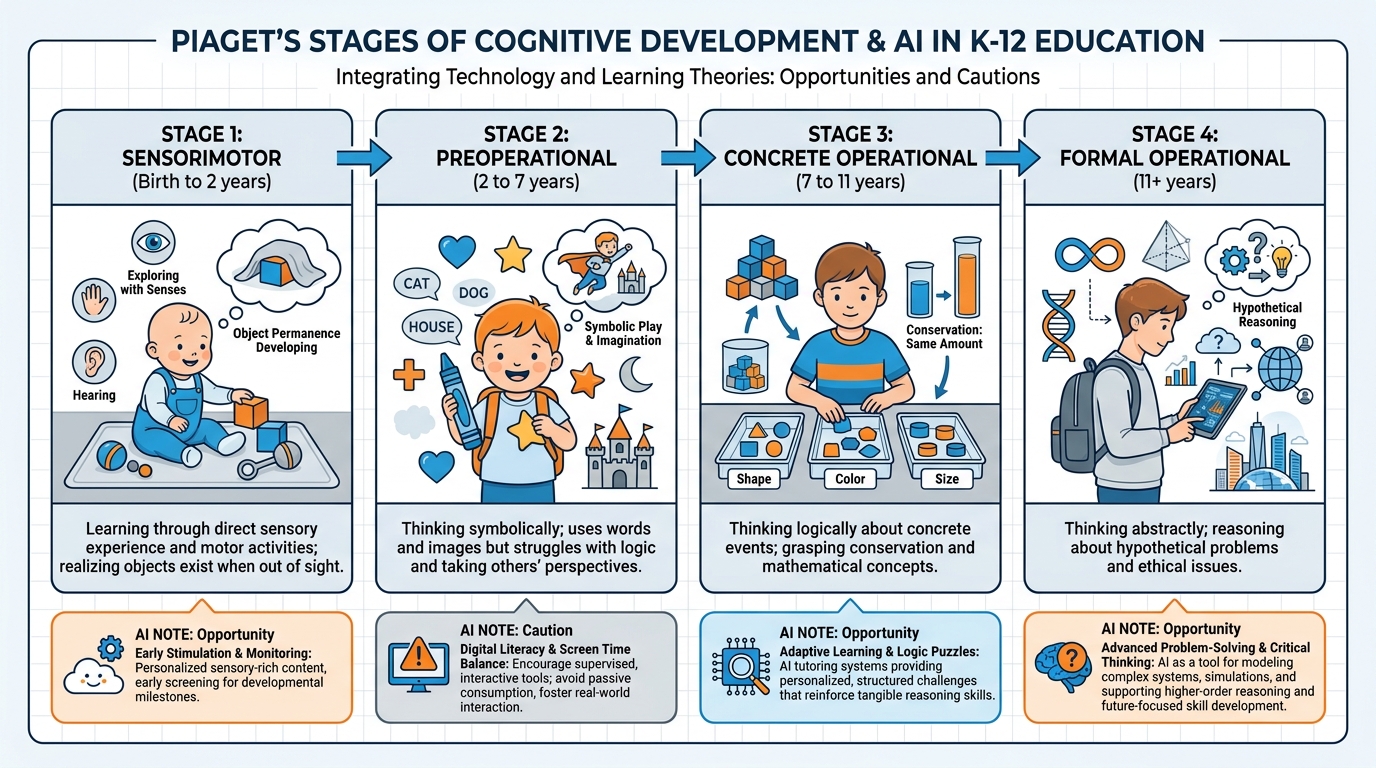

But Piaget’s work goes deeper than that. His four stages have direct implications for AI use in K-12 classrooms.

Figure 6: Piaget’s Four Stages and the AI Question. Each stage has a different cognitive architecture, and therefore a different risk profile and a different opportunity when AI enters the room.

The Four Stages and Where AI Fits

Stage 1: Sensorimotor (Birth–2 years)

Children learn by doing. They explore through touch, taste, sight, sound. They develop object permanence — the understanding that things continue to exist when you can’t see them. This is foundational cognition, and it is entirely embodied.

AI at this stage: Largely irrelevant for direct use. But parents and caregivers increasingly use AI-generated content (videos, audio, interactive displays) with infants and toddlers. The research on this is still developing, but the principle holds: sensorimotor learning requires physical, embodied engagement that screens cannot replicate. Passive consumption of AI-generated stimulation is not learning at this stage.

Stage 2: Preoperational (Ages 2–7)

Language emerges. Symbolic thinking develops. Children learn that a word can stand for a thing, that a drawing can represent an experience. But their thinking is egocentric (they struggle to take another’s perspective) and intuitive rather than logical. They can’t yet conserve (a tall thin glass and a short wide glass with the same amount of water appear to have different amounts).

AI at this stage: AI-powered storytelling tools, conversation agents, and language learning apps are proliferating at this level. The risk: children at this stage have difficulty distinguishing AI-generated characters from real people. They form attachments. They believe what they’re told. The ethical stakes are high. AI tools for preoperational children should be used only with adult supervision and clear, age-appropriate explanation of what they are.

Stage 3: Concrete Operational (Ages 7–11)

Logic arrives. Conservation works. Children can classify, seriate, and perform reversible mental operations — on concrete objects and situations. They can think logically about things they’ve physically experienced, but abstract reasoning is still out of reach.

AI at this stage: Enormous opportunity. AI tutors that connect abstract concepts to concrete examples, use visual and interactive simulations, and ask children to predict-then-check can be powerful here. The key word is concrete: AI explanations that stay grounded in physical reality (analogies, simulations, real-world examples) fit the cognitive architecture of this stage. Abstract AI-generated text does not.

Stage 4: Formal Operational (Ages 11–adulthood)

Abstract reasoning becomes possible. Hypothetical thinking, scientific reasoning, ethical argument — the full suite of adult cognition. Piaget believed this stage was completed in adolescence, though subsequent research suggests many adults never fully develop formal operational thinking in all domains.

AI at this stage: The widest range of both opportunity and risk. AI can scaffold genuinely sophisticated intellectual work — argument analysis, research synthesis, perspective-taking at scale. But this is also the stage where AI-as-bypass is most damaging, because the cognitive work of formal operational development requires the student to practice it. Abstract reasoning is a capacity that develops through use. AI that does the abstract reasoning for a teenager is literally doing the developmental work the teenager’s brain needs to do.

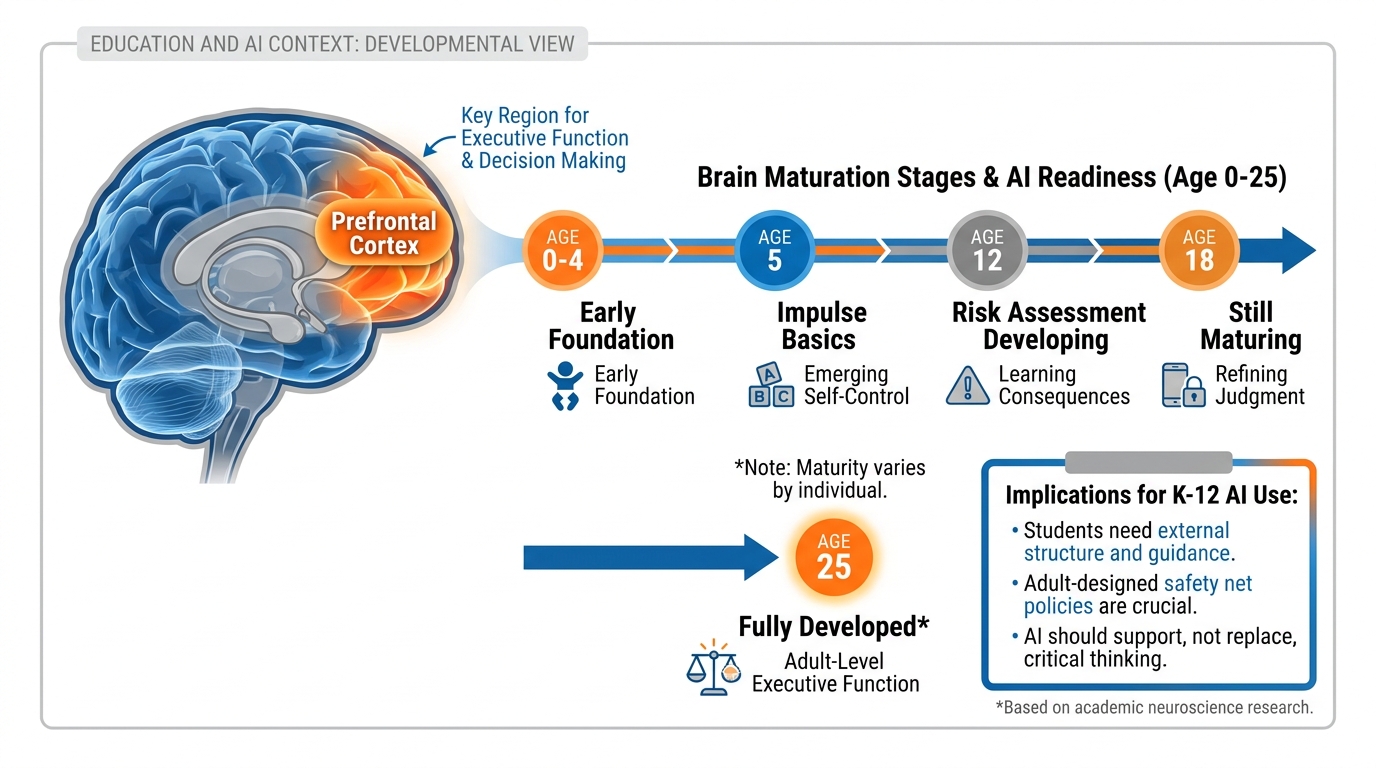

1.10 The Prefrontal Cortex Doesn’t Finish Until 25 (And Why That Matters Today)

Here’s a piece of neuroscience that most adults know abstractly but few have really internalized: the human brain is not fully developed until approximately age 25.

The last region to fully mature is the prefrontal cortex (PFC) — the part of the brain located just behind your forehead. The PFC is the seat of executive function: planning, impulse control, risk assessment, long-term thinking, the ability to delay gratification, the capacity to evaluate consequences before acting. It is, in a very real sense, the part of the brain that makes you a responsible adult.

Your students do not have a fully developed prefrontal cortex. Even your seniors. Even your juniors who seem so mature. Even the fifteen-year-old who writes beautifully about consequences and regrets — that reflective writing is coming from a brain that, neurologically, still outsources significant executive function to the emotional processing centers (the amygdala and related structures) because the PFC hasn’t finished wiring itself up.

Figure 7: The Developing Prefrontal Cortex. The brain region most responsible for judgment, impulse control, and long-term thinking isn’t fully online until age 25. This changes everything about how we should think about students making decisions about AI use.

Now introduce AI.

AI offers instant answers, instant gratification, instant resolution of cognitive discomfort. No waiting. No frustration. No experience of a delayed reward proving worth the wait. For a brain whose impulse-control circuitry is still under construction, the AI answer is extraordinarily appealing, and the habit of reaching for it can be extraordinarily easy to build and extraordinarily hard to break.

The neurological implication is not “ban AI until 25.” It’s “build structure, routine, and intentionality around AI use precisely because your students’ brains are not yet fully equipped to regulate their own technology use.”

This is why AI use policies aren’t just institutional bureaucracy. They’re developmental scaffolding. When you create clear, explicit, consistently enforced norms around when AI can and cannot be used, you are providing the external executive function that developing prefrontal cortices genuinely need.

You are, in a neurological sense, lending your students your fully developed brain until theirs finish developing.

That’s not a small thing. That’s what teaching is.

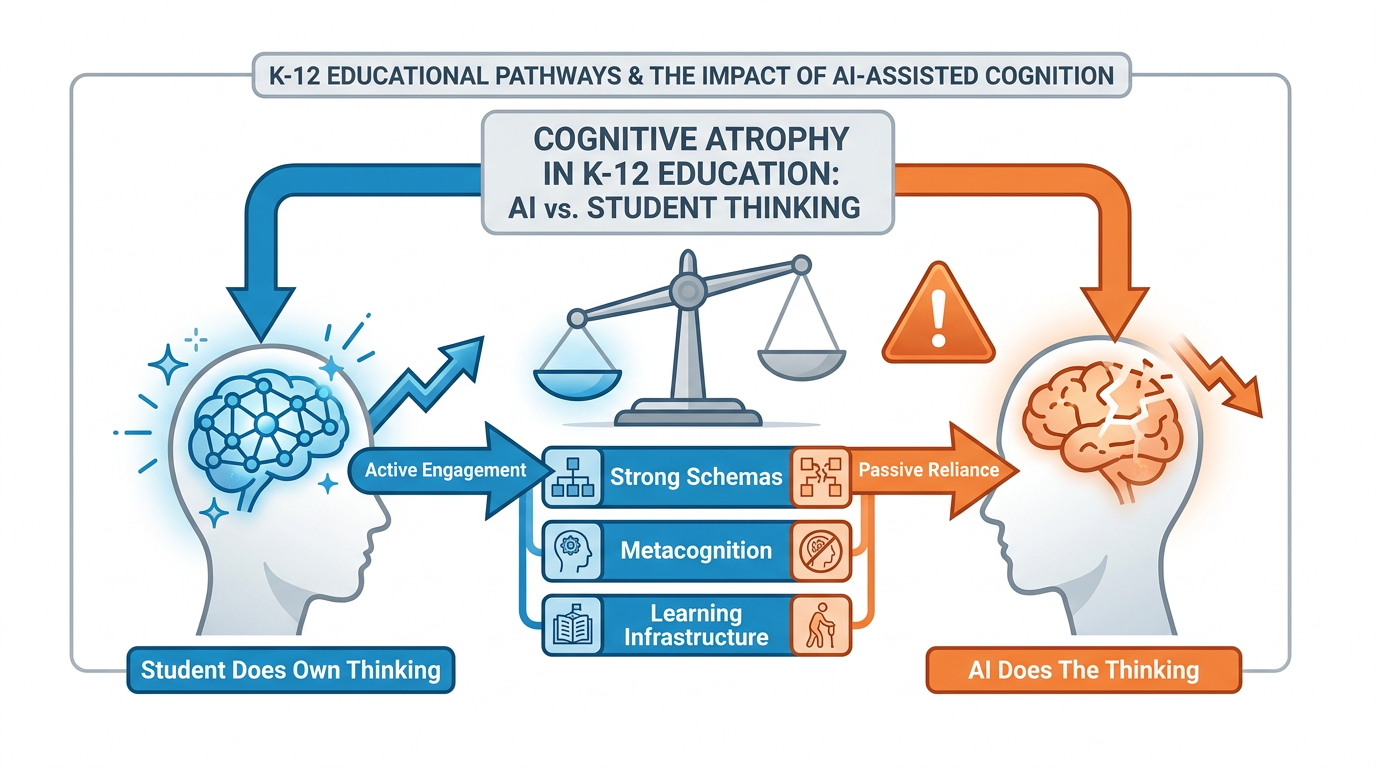

1.11 The Knowledge Tax: What Students Pay When AI Does the Thinking

There’s a concept called cognitive load: the amount of mental effort working memory is handling at any given moment. Your working memory is limited; the classic estimate is that you can hold roughly 7±2 items at a time, and more recent estimates put the figure closer to four. When cognitive load exceeds your working memory capacity, learning stops. You can’t process anything new because the processing buffer is full.

This is the basis for John Sweller’s Cognitive Load Theory, and it explains why good teachers scaffold complex tasks — they break them into manageable chunks, remove extraneous complexity, and build knowledge in sequences that prevent overload.

AI reduces cognitive load. Dramatically. That can be good: remove extraneous load, preserve the essential learning. Or it can be catastrophic: remove the load so completely that no cognitive work happens at all.

But the deeper issue is what happens over time when students consistently outsource their cognitive work to AI. We don’t yet have long-term longitudinal data on AI-dependent learners (the technology is too new), but we have strong theoretical frameworks and some early warning signals.

Figure 8: The Knowledge Tax. When AI consistently does the cognitive work, the pathway from effort to schema-building never forms. The result is productive-looking output with no underlying knowledge structure: a student who can produce essays but cannot think.

The theoretical framework works like this:

Short-term: Student asks AI to explain a concept. AI explains it clearly. Student reads the explanation. Student can answer questions about that explanation immediately. Looks like learning.

Medium-term (days later): Without retrieval practice, the explanation fades. The student hasn’t done the cognitive work of constructing their own understanding, so there’s no robust schema to retrieve from. Looks like forgetting.

Long-term (habit): Student learns to reach for AI whenever they encounter cognitive difficulty. The tolerance for confusion drops. The willingness to persist through struggle atrophies. The metacognitive skills — knowing what you know and don’t know, planning how to address gaps — never fully develop because they never needed to.

The knowledge tax is real. Students who use AI as a bypass don’t just fail to learn the content — they fail to build the learning infrastructure: the schemas, the metacognitive skills, the tolerance for difficulty, the confidence that comes from having actually done hard things.

That infrastructure is what lets them do the next hard thing. Without it, every new challenge requires the same bypass, and the dependency deepens.

This is not an argument against AI. It is an argument for intentional AI pedagogy — design that preserves the cognitive work that matters while offloading the work that was never the real point.

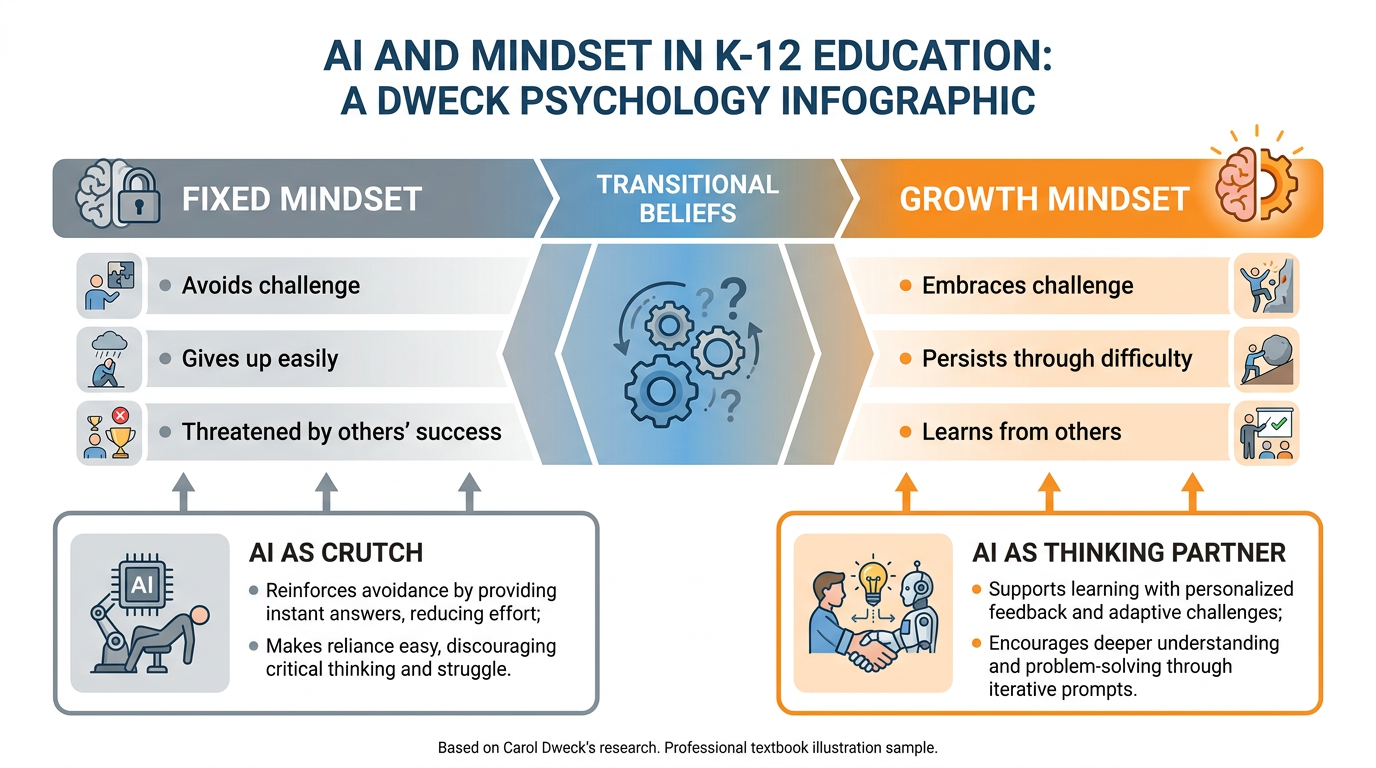

1.12 When Everyone Has the Same Tools, Mindset Becomes the Differentiator

In 2006, Carol Dweck published Mindset: The New Psychology of Success. It contained a deceptively simple idea that has since become one of the most widely applied findings in education.

Dweck found that students hold one of two basic beliefs about intelligence. Students with a fixed mindset believe intelligence is a fixed trait — you either have it or you don’t. Challenges are threatening because they reveal your level. Failure means you’re not smart enough. Effort is suspect because smart people shouldn’t need to try hard.

Students with a growth mindset believe intelligence is developable — it’s not what you have, it’s what you build through effort and learning. Challenges are opportunities. Failure is information. Effort is the mechanism, not a red flag.

The practical implications are dramatic. Fixed-mindset students avoid challenges to protect their self-concept. Growth-mindset students seek challenges because they see them as the path to development. In Dweck’s research, mindset differences predicted academic trajectory even when controlling for baseline ability, though later large-scale studies have generally reported smaller effects than the early work suggested.

Figure 9: The Mindset Spectrum in the AI Era. AI doesn’t determine which mindset students develop, but how teachers frame AI use does. The same tool can reinforce learned helplessness or accelerate growth, depending entirely on the pedagogical context.

Now add AI.

AI, used poorly, is a fixed-mindset accelerant. If the implicit message is “AI is the smart one, you just use the output,” you’ve created a classroom culture where student intelligence is irrelevant. Why struggle? The tool gets it right anyway. This isn’t just demoralizing — it’s a direct attack on the development of a growth mindset, which depends on students experiencing the direct causal link between their own effort and their own growth.

AI, used well, is a growth-mindset amplifier. When AI is framed as a thinking partner — a tool that extends your capacity, not one that replaces it — students who use it learn more through the engagement than they would alone. The AI surfaces possibilities they hadn’t considered. It asks questions that make them think harder. It provides the “just enough scaffold” that keeps productive struggle productive. And when students look at their work alongside the AI’s contribution and can clearly see their own thinking advancing, growth mindset deepens.

The difference is framing. It’s what you say when you introduce the tool. It’s what you ask students to do in the lab. It’s what you value when you grade.

When everyone in the world has access to the same AI tools — and within the next few years, they will — the variable that matters is not access. It’s what students do with the tool, and that’s determined by who they are as learners. Mindset is not a soft skill. In the AI era, it is the differentiator.

Where the analogy breaks down: mindset is not entirely internal. Structural barriers — poverty, trauma, inadequate instruction, language barriers — create fixed-mindset conditions regardless of belief. AI can amplify inequality as easily as it amplifies equity, and the teacher who assumes mindset is purely a matter of individual psychology is ignoring half the equation.

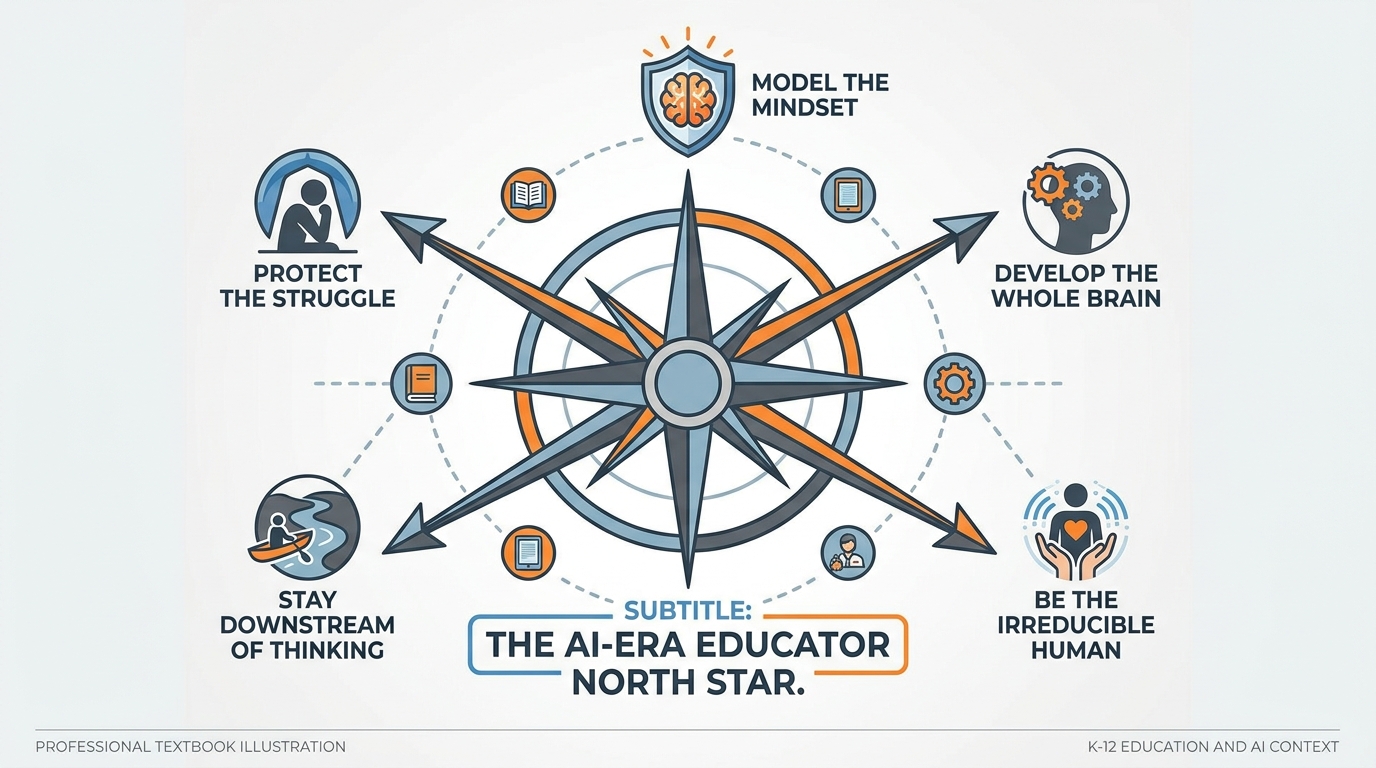

1.13 Your North Star as an AI-Era Educator

You’ve traveled a long way in this chapter. You’ve met Bloom and Piaget and Skinner and Bjork and Kapur and Dweck and Hebb. You’ve looked at the prefrontal cortex, the knowledge tax, the death of the answer, and the slot machine built into most educational software.

Now, before the chapter ends, let me give you something to carry with you — a North Star, five principles that hold regardless of what tools change, what policies shift, what new models get released, what new crises arrive.

Figure 10: The AI-Era Educator’s North Star. Five principles that hold regardless of what changes. Return to these when the technology landscape shifts and you’re not sure which way to move.

Principle 1: Protect the Struggle. Not all struggle. Not unproductive frustration that defeats and demoralizes. The productive kind — the cognitive effort that carves neural pathways, builds schemas, and develops the learning infrastructure your students will carry for life. Design for struggle. Protect it from technology that removes it.

Principle 2: Keep AI Downstream of the Thinking. When AI does the thinking, the student doesn’t learn. When students do the thinking (supported, scaffolded, challenged, and guided by AI as needed), students learn. Your job is to keep the student upstream of the cognitive work. AI is always downstream. The student is always the agent.

Principle 3: Develop the Whole Brain, Not Just the Output. The product of education is not an essay or a test score or a portfolio. It’s a human being whose brain has been developed — whose schemas are richer, whose metacognitive skills are sharper, whose capacity to think through hard problems has grown. Evaluate your AI use by this standard: does it develop the brain, or does it produce the output while leaving the brain unchanged?

Principle 4: Be the Irreducible Human. There are things you do that AI cannot: notice that Marcus is having a bad week, decide that this moment requires humor and not instruction, make a judgment call about what this particular student needs right now that has nothing to do with the curriculum. These are not soft skills. They are the load-bearing structure of real education. Invest in them.

Principle 5: Model the Mindset. Your students learn about AI use primarily by watching you use it. When you use AI to cut corners, you teach them that cutting corners is what AI is for. When you use AI to do harder, more ambitious, more interesting work than you could do alone, you teach them something entirely different. Be what you want them to become.

14 Case Study & Discussion Board (2 pts)

14.1 Case Study: The Jaylen Problem

Read the following scenario and respond to the discussion prompt below.

Ms. Rivera teaches 10th-grade U.S. History. On a Tuesday in October 2025, she assigned the class a one-page reflection: “Why do revolutions happen? Use at least one primary source.” She collected the papers the next day.

Five students submitted papers that read like polished magazine articles — clearly AI-generated. Two submitted work that was slightly better than their usual writing but still noticeably AI-assisted. One student, Jaylen, submitted something surprising: a two-page argument titled “The American Revolution Was a Mistake,” complete with a primary source he’d found and two counterarguments he’d personally dismantled. When Ms. Rivera pulled him aside and asked how he’d written it, he said: “I asked AI what arguments people make against the Revolution, then I argued back against them myself.”

Jaylen had not done the assignment as written. He had used AI in a way that was arguably more educationally valuable than what the assignment asked for — but he’d also gone off-script. Ms. Rivera doesn’t have a clear AI use policy. The other five students who submitted AI-generated work claim they “didn’t know” they weren’t supposed to use AI.

Discussion Prompt:

Consider the following questions in your response (minimum 250 words):

What did Jaylen’s approach preserve that the other five students’ approaches abandoned? Ground your answer in at least one of the theories from this chapter (Bjork’s desirable difficulties, Piaget’s constructivism, Kapur’s productive failure, or Dweck’s mindset).

What is the teacher’s responsibility in this scenario? Should Jaylen be penalized, rewarded, or something more nuanced?

What would a clear classroom AI use policy have changed about this situation?

Discussion Guidelines:

Your initial post must be a minimum of 250 words.

You must include at least one scholarly citation (APA format) from a source covered in this chapter or your own research.

You must respond meaningfully to at least two of your peers — not just “I agree” but a substantive engagement with their argument (minimum 75 words per response).

Due: Initial post by Wednesday, responses by Sunday.

15 Before You Begin: Course Prerequisites

16 Hands-On Lab: Draft Your Classroom AI Use Policy (10 pts)

16.1 Overview

You’ve spent this chapter building a theoretical foundation for AI in the classroom. Now you’re going to apply it. This lab asks you to do something that every educator in America needs to do right now: create a clear, practical, pedagogically grounded AI use policy for your classroom.

By the end of this lab, you will have a real, usable document — not a hypothetical. You’ll draft it with Gemini as a thinking partner, ground it in current institutional guidelines, and submit it for peer review.

Time required: Approximately 90 minutes

Tools: Gemini (gemini.google.com), Google Docs

Points: 10

16.2 Step 1: Research the Policy Landscape (20 min)

Before you write a single word of your policy, you need to know what institutional guardrails already exist. Open Gemini in a new tab and use it to help you research the following:

Prompt to use in Gemini:

“I’m a K-12 teacher in Florida creating a classroom AI use policy. What are the current AI guidelines from Miami-Dade County Public Schools (MDCPS), the Florida Department of Education, and Google for Education as of 2025-2026? Summarize the key principles from each and note any specific rules about student data privacy, AI disclosure, and academic integrity.”

Review the response critically. Then independently verify with these current sources:

Miami-Dade County Public Schools Technology Acceptable Use Policy: Visit dadeschools.net → search “AI use policy” or “acceptable use technology” for current guidelines

Florida Department of Education: Visit fldoe.org → Technology in Education section for Florida-specific AI directives

Google for Education: edu.google.com/products/workspace-for-education/ (student data privacy and AI use guidelines)

ISTE (International Society for Technology in Education): iste.org/areas-of-focus/AI-in-education (current educator AI standards)

16.3 Step 2: Build Your Policy Framework (30 min)

Open a new Google Doc. Title it: [Your Name] — Classroom AI Use Policy, [Year]

Your policy must address all six of the following sections. Use this framework as your structure:

CLASSROOM AI USE POLICY — FRAMEWORK

Section 1: Purpose and Philosophy (2–3 sentences)

State what AI is, what it isn’t, and what your overarching philosophy about its role in your classroom is. Ground this in at least one principle from Chapter 1.

Section 2: Permitted AI Uses

List at least 5 specific, concrete examples of how students are permitted to use AI tools (e.g., “AI may be used to brainstorm ideas before writing a first draft” / “AI may be used to check spelling and grammar after writing is complete”).

Section 3: Prohibited AI Uses

List at least 5 specific, concrete examples of what students may NOT use AI for (e.g., “AI may not be used to generate the text of a submitted assignment” / “AI may not be used to produce responses to discussion board prompts”).

Section 4: Disclosure Requirements

State clearly: when students use AI for any part of an assignment, what must they disclose? How? In what format? (Include a sample disclosure statement students can add to their work.)

Section 5: Consequences

State what happens when the policy is violated. Be specific and fair.

Section 6: My Commitment

State what you, the teacher, commit to in this policy — including how you’ll model AI use, how you’ll update the policy as the technology evolves, and how you’ll give students voice in shaping these norms going forward.
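
If you like checklists, the framework above can be sketched as a small self-check in Python. This is an optional illustration, not part of the assignment: the function name `check_policy` and the idea of storing a draft as a dictionary of section titles and entries are assumptions made for this example. The section names and the minimum of 5 examples for Sections 2 and 3 come from the framework itself.

```python
# Hypothetical self-check for a policy draft.
# Section names mirror the six-section framework above.
REQUIRED_SECTIONS = [
    "Purpose and Philosophy",
    "Permitted AI Uses",
    "Prohibited AI Uses",
    "Disclosure Requirements",
    "Consequences",
    "My Commitment",
]
# Sections 2 and 3 each ask for at least 5 concrete examples.
MIN_EXAMPLES = {"Permitted AI Uses": 5, "Prohibited AI Uses": 5}


def check_policy(draft: dict[str, list[str]]) -> list[str]:
    """Return a list of problems; an empty list means the checklist passes."""
    problems = []
    for section in REQUIRED_SECTIONS:
        # Ignore blank entries so an empty string does not count as content.
        entries = [e for e in draft.get(section, []) if e.strip()]
        needed = MIN_EXAMPLES.get(section, 1)
        if not entries:
            problems.append(f"Missing or empty section: {section}")
        elif len(entries) < needed:
            problems.append(
                f"{section}: {len(entries)} example(s), need at least {needed}"
            )
    return problems


# An early, incomplete draft.
draft = {
    "Purpose and Philosophy": ["AI is a thinking partner, not a ghostwriter."],
    "Permitted AI Uses": ["Brainstorm ideas before writing a first draft."],
    "Prohibited AI Uses": [],
}
for problem in check_policy(draft):
    print(problem)
```

A check like this only confirms that the sections exist and are long enough. Whether they are specific and fair is still your call.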

16.4 Step 3: Gemini as Co-Author (20 min)

Now use Gemini to help draft your policy. Use the following prompts in sequence:

Prompt 1 (Foundation):

“I’m a K-12 [your grade level and subject] teacher. Help me write a classroom AI use policy. I want it to be grounded in the following educational principles: [paste 2-3 key ideas from Chapter 1 that resonated with you]. The policy needs to cover all six sections of my framework: purpose and philosophy, permitted uses, prohibited uses, disclosure requirements, consequences, and my commitments as the teacher. Write in clear, student-accessible language.”

Prompt 2 (Refinement):

“Here’s the draft I have so far: [paste your current draft]. Make the language more concrete and specific. Replace any vague phrases like ‘appropriate use’ with specific examples. Add a student-facing explanation section that explains WHY each rule exists in terms a 9th grader would understand.”

Prompt 3 (Reality-Check):

“Review this policy for any gaps, inconsistencies, or potential problems. What questions might a parent, administrator, or student raise about this policy? What scenarios does it fail to address?”

Review Gemini’s responses and integrate what’s useful. Remember: you are the author. Gemini is your thinking partner. Every word in the final policy is your responsibility.
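
If you plan to reuse Prompt 1 across several classes, one way to keep the bracketed placeholders straight is a small template, sketched here in Python. This is an assumption-laden convenience, not a required step: the names `FOUNDATION_TEMPLATE` and `build_foundation_prompt` are invented for this example, and the code only builds text for you to paste into Gemini. It does not call Gemini or any other service. The list of sections is passed in so that you decide what the policy must cover; the example passes all six framework sections.

```python
# Hypothetical helper for filling in the bracketed placeholders of Prompt 1.
# It only builds text to paste into Gemini; it does not call any API.
FOUNDATION_TEMPLATE = (
    "I'm a K-12 {grade_and_subject} teacher. Help me write a classroom AI use policy. "
    "I want it to be grounded in the following educational principles: {principles}. "
    "The policy needs to cover: {sections}. "
    "Write in clear, student-accessible language."
)


def build_foundation_prompt(grade_and_subject, principles, sections):
    """Return Prompt 1 with the placeholders filled in."""
    if not 2 <= len(principles) <= 3:
        raise ValueError("Prompt 1 asks for 2-3 key ideas from Chapter 1.")
    if not sections:
        raise ValueError("List at least one section the policy should cover.")
    return FOUNDATION_TEMPLATE.format(
        grade_and_subject=grade_and_subject,
        principles="; ".join(principles),
        sections=", ".join(sections),
    )


prompt = build_foundation_prompt(
    grade_and_subject="10th-grade U.S. History",
    principles=["Protect the struggle", "Be the irreducible human"],
    sections=[
        "purpose and philosophy",
        "permitted uses",
        "prohibited uses",
        "disclosure requirements",
        "consequences",
        "my commitment as the teacher",
    ],
)
print(prompt)
```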

16.5 Step 4: Group AI Challenge (20 min)

This is where the lab goes beyond your individual policy draft.

Working in your group, use AI to do two things:

First — identify a real problem. Have a conversation with Gemini where your group collectively brainstorms a genuine challenge facing teachers in your school or district right now. Let the AI help you sharpen the problem statement. Push back on vague answers. Keep going until you have a problem that is specific, real, and worth solving.

Second — use AI to solve it. Once you have the problem, use Gemini to help your group develop a concrete solution, strategy, or approach. This could be a policy, a process, a lesson design, a communication strategy — whatever the problem calls for. The AI is your thinking partner, not your ghostwriter. Your group’s judgment drives the decisions.

Be prepared to share with the full class:

What problem you identified and why it matters

How AI helped you think through it

What your proposed solution looks like

What the AI got right — and what it missed

There are no wrong problems and no wrong solutions. The goal is to practice using AI as a genuine thinking tool and to be able to articulate the value of what you built.

16.6 Submission

Submit to Canvas:

A link to your individual AI Use Policy Google Doc (sharing set to “Anyone with the link can view”)

A 150-word reflection: What was the hardest section of your policy to write, and why? What surprised you about using AI as a drafting partner?

Grading rubric:

| Criterion | Points |
|---|---|
| All six policy sections present and substantive | 3 pts |
| Policy reflects understanding of Chapter 1 concepts | 3 pts |
| Evidence of Gemini use and critical revision | 2 pts |
| Group AI challenge participation and class discussion | 2 pts |
| Total | 10 pts |
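
If you want to estimate your own score before submitting, the rubric can be written out as a short Python sketch. The criteria and point values are taken from the table above. The function name `total_score` and the self-scoring workflow are assumptions added for illustration, and your instructor's grading is what counts.

```python
# Rubric from the table above: criterion -> maximum points.
RUBRIC = {
    "All six policy sections present and substantive": 3,
    "Policy reflects understanding of Chapter 1 concepts": 3,
    "Evidence of Gemini use and critical revision": 2,
    "Group AI challenge participation and class discussion": 2,
}


def total_score(earned: dict[str, int]) -> int:
    """Sum self-assigned points, rejecting unknown criteria and out-of-range scores."""
    total = 0
    for criterion, points in earned.items():
        if criterion not in RUBRIC:
            raise KeyError(f"Unknown criterion: {criterion}")
        if not 0 <= points <= RUBRIC[criterion]:
            raise ValueError(
                f"{criterion}: score must be between 0 and {RUBRIC[criterion]}"
            )
        total += points
    return total


# Hypothetical self-assessment: full marks except for a thin revision trail.
my_estimate = {
    "All six policy sections present and substantive": 3,
    "Policy reflects understanding of Chapter 1 concepts": 3,
    "Evidence of Gemini use and critical revision": 1,
    "Group AI challenge participation and class discussion": 2,
}
print(total_score(my_estimate), "out of", sum(RUBRIC.values()))
```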

17 🎯 In-Class Assignment: Personal AI Readiness Audit (10 pts)

Details and instructions will be provided in class.

Points: 10

Chapter 1 of 8 — AI Thinking for Educators · Dr. Ernesto Lee · Miami Dade College