Pedagogy First: Putting AI in Its Place

Everything You've Learned So Far Is Technology. This Chapter Is About Teaching.

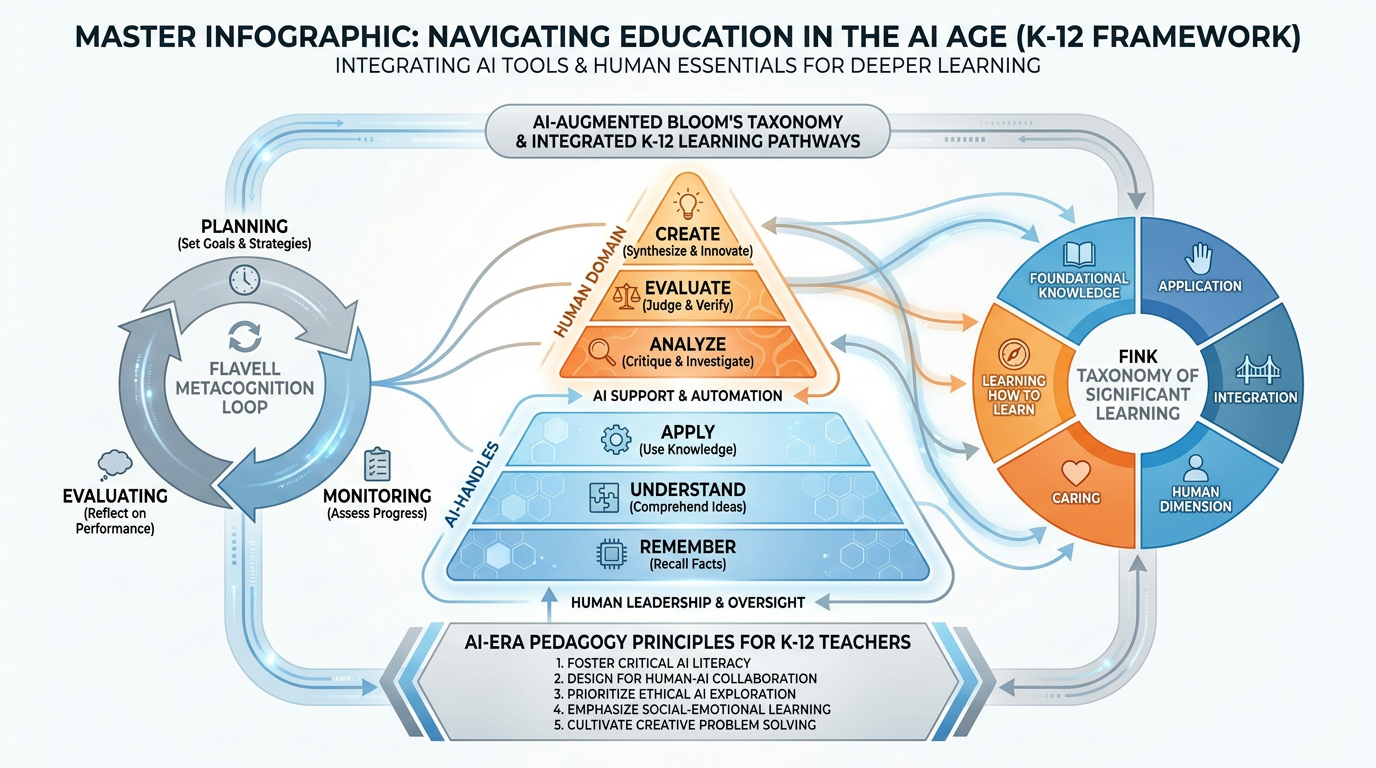

Figure 1: Chapter 7 at a Glance. Bloom rebuilt. Metacognition in the AI era. The human zones no algorithm touches. This chapter is your pedagogical compass for teaching when the tools are smarter than we ever imagined and the human teacher matters more than ever.

Let’s be honest about what the last six chapters have been.

They’ve been an orientation tour of a new landscape. You’ve learned what AI is, how large language models work, how to prompt effectively, how to spot hallucinations, how to use AI tools across different subjects, how to think about the ethics. That was all necessary. You needed to understand the technology before you could use it wisely.

But here’s what none of that addresses: how you teach.

Technology doesn’t teach. You do. And in a world where AI can generate a lesson plan in thirty seconds, write a student’s essay in four, and explain the French Revolution in twelve languages, the question that actually matters is this: What does excellent teaching look like now?

That’s what this chapter is about. Not tools. Teaching. The craft, the science, the irreducible human work of it.

We’re going to start with Bloom — and show you why the entire taxonomy has shifted under your feet. We’re going to look at what assessment means when AI can write the essay. We’re going to talk about metacognition, the equity problem hiding inside the AI revolution, and the categories of your work that no model will ever touch.

By the end, you’ll have something more useful than any tool: a pedagogical framework for the AI era that holds regardless of what gets released next year, or the year after, or in 2030 when everything changes again.

7.1 The Teacher Is Still the Teacher

Start here: the most dangerous thing AI has done to education is convince some people that teachers are optional.

You’ve probably seen the headlines. “AI tutors will replace teachers.” “Personalized learning at scale makes human instruction obsolete.” “Students can learn anything, anytime, without a classroom.” And behind every one of those headlines is a real grain of truth — AI can tutor, AI can explain, AI can adapt in real time to a student’s level.

But here’s what AI cannot do, and what the research has been saying for decades, long before today’s AI tools existed:

AI cannot notice that Marcus hasn’t turned in anything in three weeks because his parents are getting divorced and he’s sleeping on his cousin’s couch. AI cannot make the split-second decision to set the lesson plan aside because the room tells you that what the kids actually need right now is to talk about the shooting that happened four blocks away last night. AI cannot look at a struggling student and see the moment they’re about to give up — and choose exactly the right word to keep them in the game.

AI cannot care. Not really. It can simulate the linguistic patterns of caring. But a student who needs to be seen, to be known, to matter to someone in the building — that student needs you.

This isn’t sentimentality. It’s developmental science. John Hattie’s synthesis of research on what influences student achievement, which draws on more than 1,400 meta-analyses covering roughly 300 million students, found that the teacher-student relationship is one of the most powerful positive influences on learning outcomes, with an effect size of 0.52. That’s more than homework, more than class size, more than grade retention, more than most educational technology.
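For readers who want the arithmetic behind that number, here is a minimal sketch. It assumes the effect size is a standardized mean difference (Cohen's d), the usual form in syntheses of this kind. The symbols are illustrative and do not come from Hattie's text.

$$
d = \frac{\bar{x}_{\text{group A}} - \bar{x}_{\text{group B}}}{s_{\text{pooled}}},
\qquad
d = 0.52 \;\Rightarrow\; \bar{x}_{\text{group A}} \approx \bar{x}_{\text{group B}} + 0.52\, s_{\text{pooled}}
$$

Read this way, an effect size of 0.52 is a shift of about half a standard deviation. If scores are roughly normally distributed (a further assumption), the average student in the higher group would sit at about the 70th percentile of the comparison group.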

The relationship is not the delivery mechanism for learning. The relationship is part of the learning.

So yes. The technology is powerful. The tools are extraordinary. The landscape has changed. And the teacher is still the teacher. The question is what that means now.

7.2 Bloom’s Taxonomy, Rebuilt for the AI Age

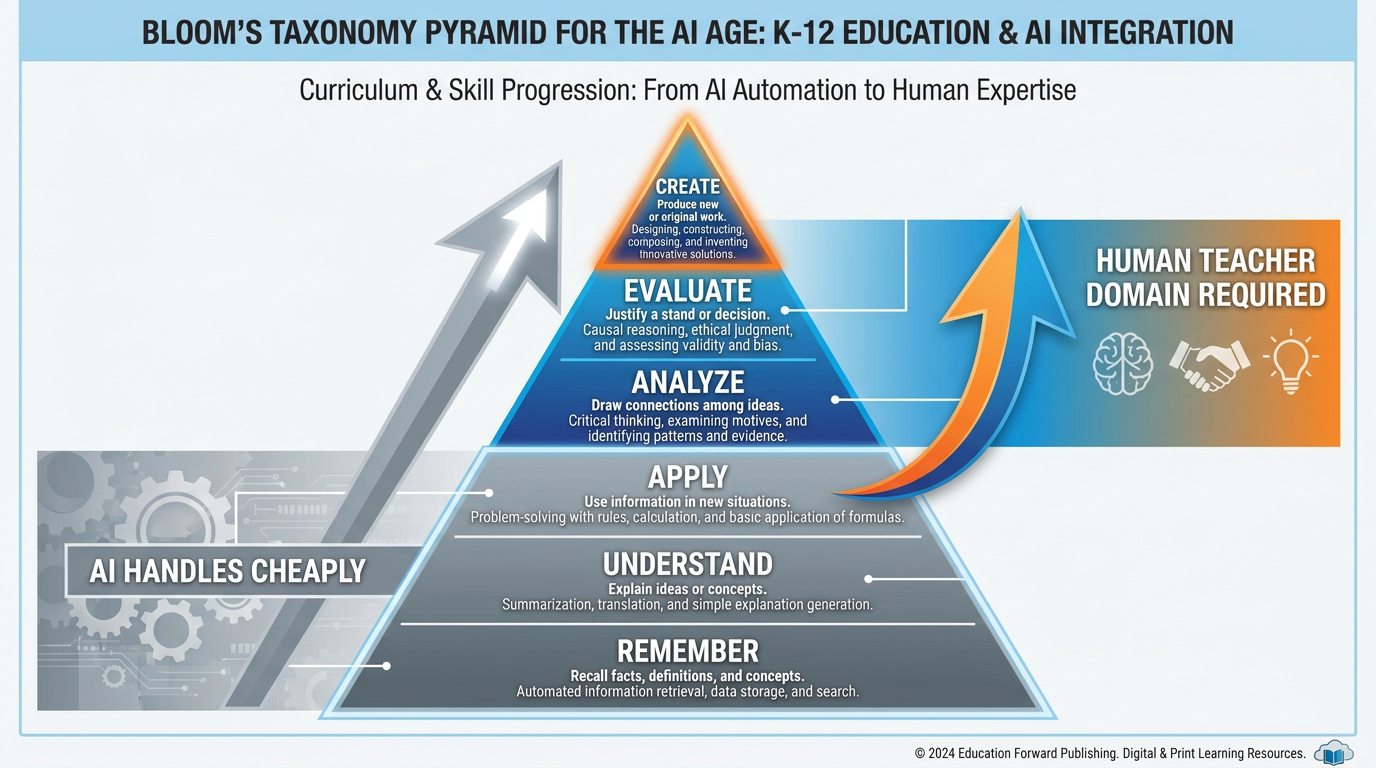

In 1956, Benjamin Bloom and his colleagues published a classification of educational objectives that would become the most widely used framework in curriculum design. Revised in 2001 by Anderson and Krathwohl, Bloom’s Taxonomy describes six levels of cognitive complexity, from simplest to most demanding:

Remember — Retrieve relevant knowledge from memory

Understand — Construct meaning from instructional messages

Apply — Carry out a procedure in a given situation

Analyze — Break material into parts and determine relationships

Evaluate — Make judgments based on criteria

Create — Produce something new by combining elements in a novel way

For most of K-12 education’s history, the bottom three levels consumed most of instructional time. Define the term. Explain the concept. Solve the problem using the procedure. These tasks were hard — not cognitively demanding in a deep sense, but hard because information was scarce, practice was effortful, and the teacher had to spend enormous time and energy moving students from “don’t know” to “can demonstrate.”

That world ended. Quietly, around 2022.

AI now performs Remember, Understand, and Apply with speed, accuracy, and patience that no human teacher can match at scale. Ask it to define a term: done. Ask it to explain a concept in six different ways until one clicks: done. Ask it to walk through a hundred practice problems with instant feedback: done. The bottom three tiers of Bloom’s Taxonomy have been, for practical purposes, outsourced.

Figure 2: Bloom’s Taxonomy, Rebuilt. The bottom three tiers (Remember, Understand, Apply) are now performed cheaply and competently by AI. Your instructional investment must climb to Analysis, Evaluation, and Creation, where human cognition and human guidance remain essential.

This is not a small thing. It is a structural shift in what curriculum design must look like.

Here’s the practical implication: if your lesson plan’s primary cognitive demand lives at Remember, Understand, or Apply, a student can outsource that entire lesson to an AI in fifteen minutes. The lesson exists — but the learning may not. Not because your lesson was bad, but because the landscape changed around it.

The curriculum has to climb. Every unit plan, every lesson objective, every assessment has to ask: where is the cognitive demand? If it’s in the bottom three tiers, AI has already arrived there. You need to build in higher-order work — the analysis, the evaluation, the creation — that cannot be outsourced without eliminating the learning entirely.
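One way to make the "where is the cognitive demand?" question routine is a quick audit of how your objectives are worded. The sketch below is a rough illustration, not a validated instrument. The verb lists in `BLOOM_VERBS` and the helper names `bloom_tier` and `needs_climb` are hypothetical, and a single verb rarely captures the full demand of a task, so every objective still needs a teacher's reading.

```python
import re

# Hypothetical verb lists, for illustration only. Extend them for your subject.
BLOOM_VERBS = {
    "Remember": {"define", "list", "recall", "identify", "name"},
    "Understand": {"understand", "explain", "describe", "summarize"},
    "Apply": {"apply", "solve", "use", "calculate"},
    "Analyze": {"analyze", "compare", "contrast", "differentiate"},
    "Evaluate": {"evaluate", "judge", "critique", "defend"},
    "Create": {"create", "design", "compose", "construct"},
}

# The three tiers the chapter describes as easy to outsource to AI.
LOWER_TIERS = {"Remember", "Understand", "Apply"}


def bloom_tier(objective):
    """Return the tier of the first recognized verb, or None if nothing matches."""
    for word in re.findall(r"[a-z]+", objective.lower()):
        for tier, verbs in BLOOM_VERBS.items():
            if word in verbs:
                return tier
    return None


def needs_climb(objective):
    """True when the first recognized verb sits in the bottom three tiers."""
    return bloom_tier(objective) in LOWER_TIERS


print(bloom_tier("Students will understand photosynthesis"))   # Understand
print(needs_climb("Students will understand photosynthesis"))  # True
print(bloom_tier("Students will evaluate the tradeoffs between agricultural practices"))  # Evaluate
```

An objective flagged by `needs_climb` is not a bad objective. It is a prompt to ask what higher-order work the unit builds on top of it.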

This is both a challenge and a liberation. For decades, teachers lamented that they never had enough time to get to the “good stuff” — the debates, the projects, the real problems, the creative synthesis. They were stuck drilling the bottom tiers because students needed that foundation. Now AI can scaffold the bottom tiers far more efficiently than whole-class instruction. That frees you. Your job isn’t to be a worse version of a search engine. Your job is to take students somewhere the search engine cannot.

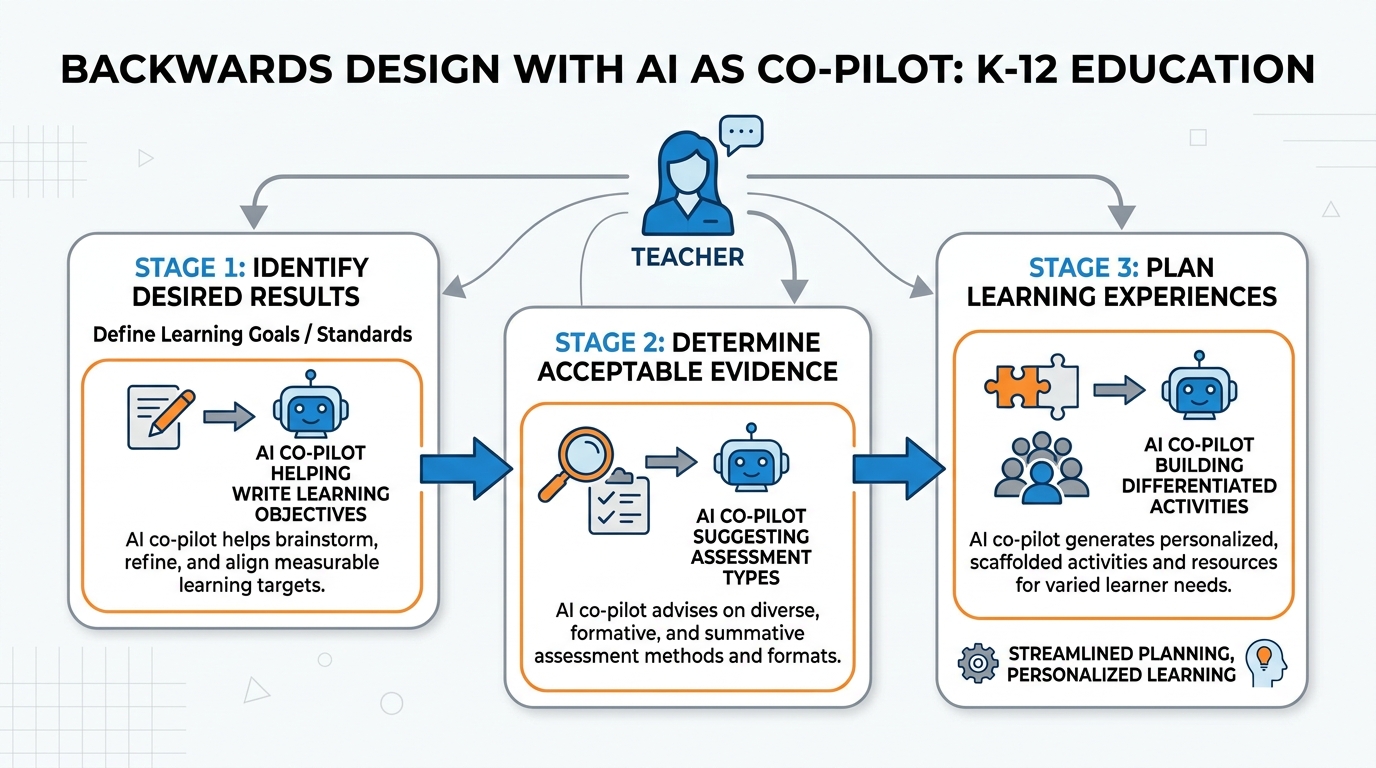

7.3 Backwards Design With AI as Your Co-Pilot

Grant Wiggins and Jay McTighe introduced Understanding by Design (UbD) in 1998, and it changed how thoughtful curriculum designers work. The core insight is deceptively simple: start with the end. What do you want students to know, understand, and be able to do? Then design backward to figure out what evidence would prove they got there, and then plan the learning experiences.

Most teachers still plan forward: here’s the content I need to cover, here’s what I’ll do this week, I’ll figure out the assessment later. Backwards design flips that sequence and produces dramatically better alignment between what you teach, what you assess, and what students actually learn.

AI doesn’t change this principle. It supercharges it.

Figure 3: Backwards Design With AI as Co-Pilot. Start with the outcome. Work backward to evidence. Then design the journey. AI accelerates every stage of this process without replacing the professional judgment that drives it.

Here’s what backwards design with AI as your co-pilot looks like in practice:

Stage 1 — Identify Desired Results: Prompt AI to help you articulate learning objectives at the right tier of Bloom’s. Not “students will understand photosynthesis” (Remember/Understand tier — AI can already do that for them). Instead: “Students will evaluate the tradeoffs between different agricultural practices given competing evidence about carbon sequestration” (Evaluate tier — now we’re somewhere interesting). AI is excellent at generating objective options; you’re excellent at selecting the ones that matter for your specific students, school, and context.

Stage 2 — Determine Acceptable Evidence: Ask AI to generate a menu of assessment types that could demonstrate the objective. Portfolio? Oral defense? Real-world application? Performance task? Socratic seminar? Debate? AI gives you options you might not have considered. You decide which ones are authentic, feasible, and actually worth your students’ time.

Stage 3 — Plan Learning Experiences: Now — and only now — plan the daily activities. AI can draft differentiated versions at multiple reading levels, suggest analogies and examples, propose discussion questions, identify likely misconceptions, and help you sequence the content logically. You read the room, adjust in real time, and make the ten judgment calls per day that no lesson plan ever predicts.

The professional judgment is yours throughout. AI doesn’t replace Stage 1-2-3 thinking. It removes the friction so you can think faster and more ambitiously.

Forward planning (content first):

Week starts with the textbook chapter.

Cover Section 4.1 Tuesday

Cover Section 4.2 Wednesday

Quiz Friday on 4.1 and 4.2

Assessment: Multiple choice test, matching, short answer recalling content

The content drives the design. Assessment is an afterthought.

Backwards design with AI as co-pilot:

Week starts with the end goal.

Where do I want students to be cognitively after this unit?

What would constitute real evidence of that understanding?

AI drafts differentiated materials, multiple explanations, discussion scaffolds

Assessment is authentic performance, not content recall

The understanding drives the design. AI serves the teacher’s professional vision.

7.4 Designing Lessons That Preserve Productive Struggle

You met productive struggle in Chapter 1 — Bjork’s desirable difficulties, Kapur’s productive failure. Let’s go deeper now, because this is where AI pedagogy either succeeds or fails.

Here’s the brutal truth: a lesson that AI can complete for the student is not a lesson that teaches the student anything. And in 2026, AI can complete most traditional lessons. The quiz, the worksheet, the essay prompt, the five-paragraph response, the fill-in-the-blank note guide. All of it.

The question is not “how do I stop students from using AI?” The question is “how do I design lessons where the work that matters cannot be bypassed?”

Productive struggle happens in the gap between what students know and what they need to figure out — a gap wide enough to require genuine intellectual effort but narrow enough that the effort is achievable. Your job is to keep that gap alive in an AI-filled classroom.

Four design moves that preserve productive struggle:

Move 1: Put the struggle before the explanation. Give students the problem before they have the concept. Let them wrestle. Let them be wrong. Then teach into the wrongness. Kapur’s research on productive failure suggests this sequence produces stronger conceptual understanding and transfer than teaching first, then practicing.

Move 2: Make the output the process, not just the product. “Submit an essay” is completable by AI. “Submit a thinking log showing your decision-making process, with at least three places where you changed direction and why” is harder to fake. Process documentation (rough drafts, decision logs, revision histories, reflection annotations) requires the student to have actually done the thinking.

Move 3: Create problems that require personal knowledge. AI knows the internet. It doesn’t know your classroom, your community, your students’ lives. “Analyze the causes of the Civil War” is AI-solvable. “Interview one person in your family or neighborhood about an experience with injustice, then connect their experience to a structural cause we’ve studied” requires something AI doesn’t have.

Move 4: Design for the oral moment. Whatever students submit on paper, build in regular low-stakes oral moments: “Tell me about your thinking here.” “Walk me through how you arrived at this claim.” “What would happen if your premise changed?” Students who did the thinking can do this. Students who outsourced can’t — and they know it, and that knowledge is itself pedagogically important.

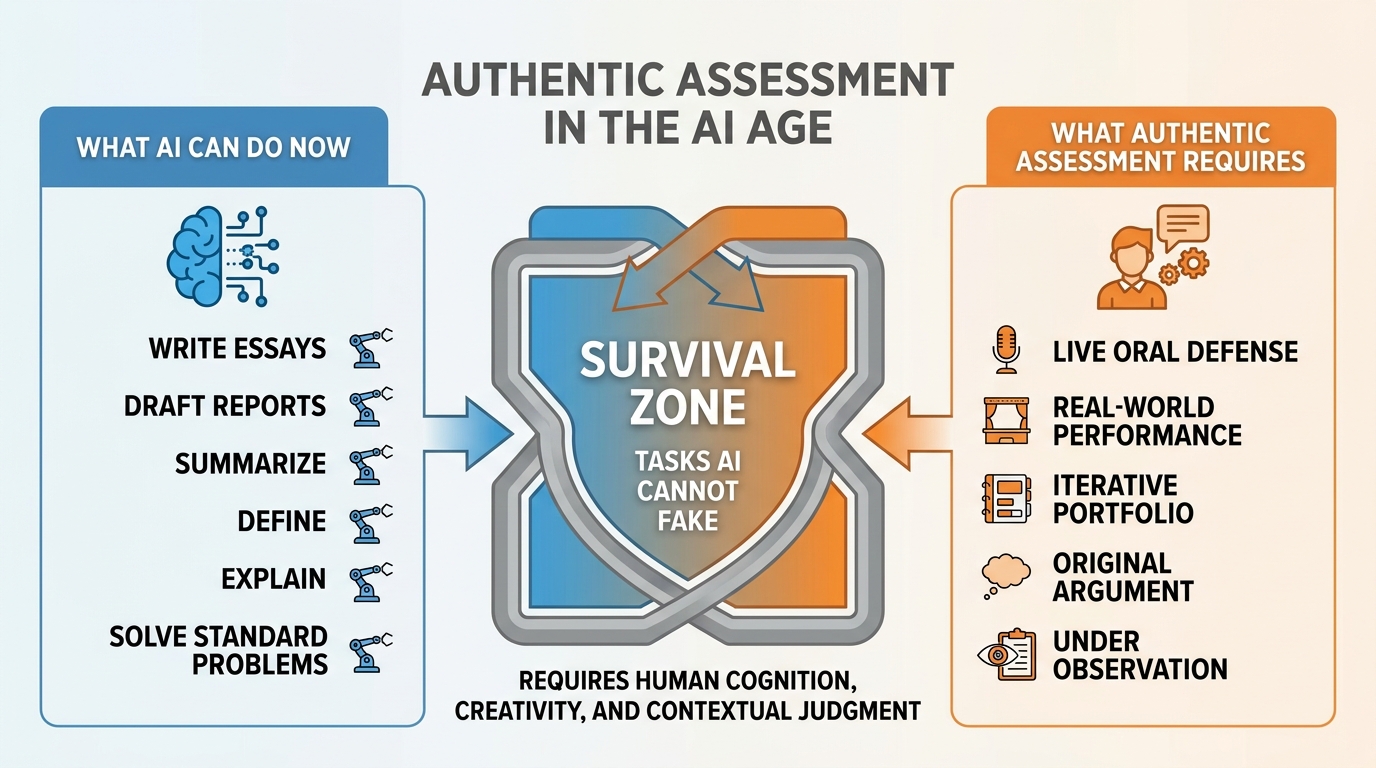

7.5 Authentic Assessment When the AI Can Write the Essay

The five-paragraph essay is over as an assessment instrument. Not because it’s bad pedagogy — it was always limited — but because AI can write a technically proficient one in about seven seconds.

This is actually good news. The five-paragraph essay was a proxy for thinking, not thinking itself. Assessment should have always been closer to the real thing. Now it has to be.

What survives the AI era in assessment?

Figure 4: Authentic Assessment in the AI Age. The left column belongs to AI. The right column belongs to human learners. Design your assessments to live in the space AI cannot occupy.

Authentic assessment — assessment that requires students to demonstrate understanding through performance in realistic, meaningful contexts — was always theoretically superior. Now it’s practically necessary. Here’s what that looks like across different forms:

Portfolio with Revision History: Not just the final product — the whole journey. Every draft, every revision, every reflection note. AI can write Draft 1. It can’t fake a genuine intellectual evolution across eight weeks that leaves evidence of a specific student’s thinking developing over time.

Oral Defense: Ten minutes. Student presents their work. You ask three questions they couldn’t have anticipated. The authenticity of understanding is immediate and unmistakable. This doesn’t have to be formal — it can be a brief one-on-one conversation. What it does is close the gap between produced text and human understanding.

Performance Tasks: Build something real. Teach a concept to a younger student. Present a proposal to the principal. Write for an authentic audience (a local newspaper op-ed, a letter to a city council member, an explainer for parents). Real stakes produce real engagement. AI can draft — but the student has to perform.

Iterative Reflection: Require students to document their thinking before getting feedback (from the teacher, from peers, from AI). What do I think so far? What am I uncertain about? What question am I trying to answer? Recorded before any response. AI can’t retroactively produce authentic pre-task cognition.

The Socratic Method, Revived: Questioning is the oldest assessment instrument in human education. Socrates never gave a multiple-choice test. Neither did the best teachers you’ve ever had. A well-placed question — one that requires the student to synthesize, to defend, to apply — tells you more about what they actually know than any paper they submit.

The Assessment Design Principle: Move from Product to Process

The deeper principle behind all authentic assessment in the AI era is this: shift from assessing products to assessing processes.

AI can produce products. What AI cannot produce is the authentic cognitive journey of a specific student — their specific confusions, their specific revisions, their specific choices, their specific voice developing over time.

Design assessments that leave evidence of the journey, not just the destination. The destination is interesting. The journey is educational.

7.6 AI as a Mirror: Helping Students See Their Own Thinking

Here’s something counterintuitive: AI, used well, can make students more metacognitively aware, not less.

Most students have very little visibility into their own thinking. They write, they submit, they move on. They rarely step back and ask: What was my reasoning process here? Where did I get confused? What assumptions did I make? What would a strong critic say about this?

AI can serve as a mirror for that thinking — if you design for it.

The AI-Mirror Protocol: After students complete a first draft or initial problem-solving attempt, have them share it with an AI tool and ask a specific set of questions:

“What are the three strongest assumptions underlying my argument? What evidence would challenge those assumptions? Where is my reasoning weakest?”

Then have students respond in writing: Do you agree with the AI’s analysis? Where did it miss something? Where did it see something you didn’t?

That conversation — between the student’s thinking and the AI’s reflection of it — is enormously productive. The student is now the expert, the evaluator, the judge. AI is the mirror, not the answer. The cognitive work has shifted to exactly where it belongs.

Making Thinking Visible: Use AI to help students articulate thinking they usually leave implicit. “Explain your thinking process step by step, as if you were teaching it to someone who knows nothing.” “What question were you really trying to answer?” “What would you do differently if you started over?” These are metacognitive prompts that AI can facilitate but students must answer — from their actual experience.

The key is the framing. “Use AI to make your argument better” produces outsourcing. “Use AI to see your argument more clearly” produces metacognition. The tool is the same. The pedagogy is different.

7.7 Knowing When to Use the AI (and When Not To) Is the Central Skill

Of everything in this chapter, this is the section that matters most for your students’ long-term futures.

Because in ten years, the specific AI tools your students use today will be as obsolete as the graphing calculator feels now. What will not be obsolete is the capacity to regulate their own thinking in the presence of powerful AI — to know when to reach for the tool and when to resist, when to trust the output and when to question it, when AI is helping them think and when it’s thinking for them.

That capacity has a name: metacognition.

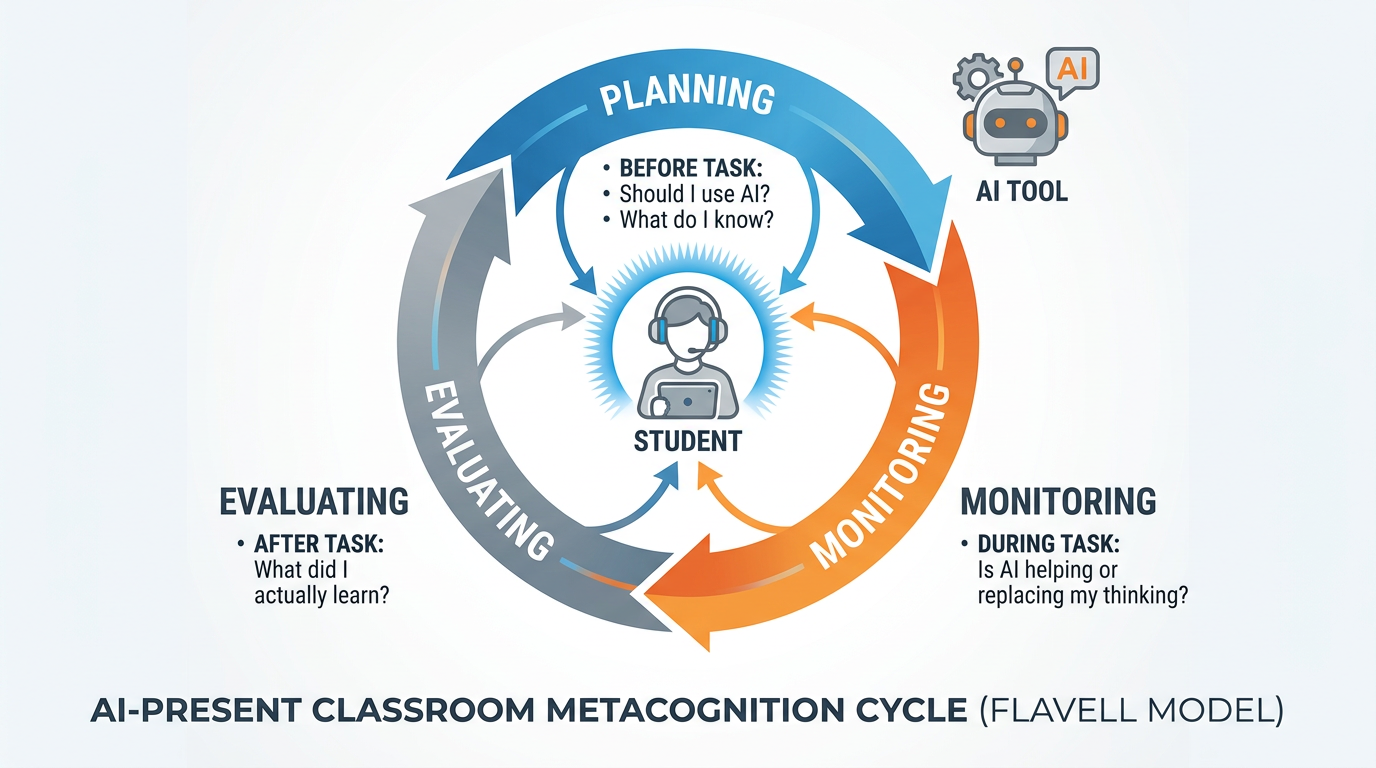

John Flavell (1979) coined the term to describe knowledge and control of one’s own cognitive processes. Metacognition operates in three phases:

Planning: Before beginning a task, what do I already know? What do I need to find out? What strategy makes sense? In the AI era: Should I use AI for this? What specifically should I use it for? What should I do myself?

Monitoring: During the task, how is my thinking going? Am I understanding, or just moving? In the AI era: Is AI helping me think, or doing the thinking for me? Am I learning here, or just getting an output?

Evaluating: After the task, did I succeed? How do I know? What would I do differently? In the AI era: What did I actually learn? What can I now do that I couldn’t before? How much of this was mine?

Figure 5: Metacognition in the AI-Present Classroom. Flavell’s three-phase loop (Plan, Monitor, Evaluate) applied to AI use. The students who master this loop will be genuinely AI-fluent. Those who don’t will be AI-dependent.

The difference between AI-fluent and AI-dependent is entirely metacognitive. The AI-fluent student uses the tool with intention: “I’m going to use AI to generate counterarguments I might have missed, then evaluate each one.” The AI-dependent student uses the tool without intention: “I’ll just ask it and see what it says.” The output might look similar. The learning is completely different.

Teaching metacognition is not a separate unit. It’s woven into every lesson. It’s the questions you ask: “Before you check with AI, what’s your current best thinking?” “After reading that AI explanation, can you explain it in your own words?” “What would you have done differently if AI weren’t available?”

These questions are not punitive. They’re instructional. They’re teaching students to watch their own thinking — the single most important skill in an age of powerful cognitive tools.

7.8 The Disclosure Question: When and How to Tell Students AI Helped

Let’s talk about transparency — because AI is already in your classroom whether you’ve addressed it or not, and silence is its own kind of policy.

The disclosure question has two sides. There’s the question of when students must disclose that AI helped with their work. And there’s the equally important — and often forgotten — question of when you should model disclosure by telling students when AI helped with your materials.

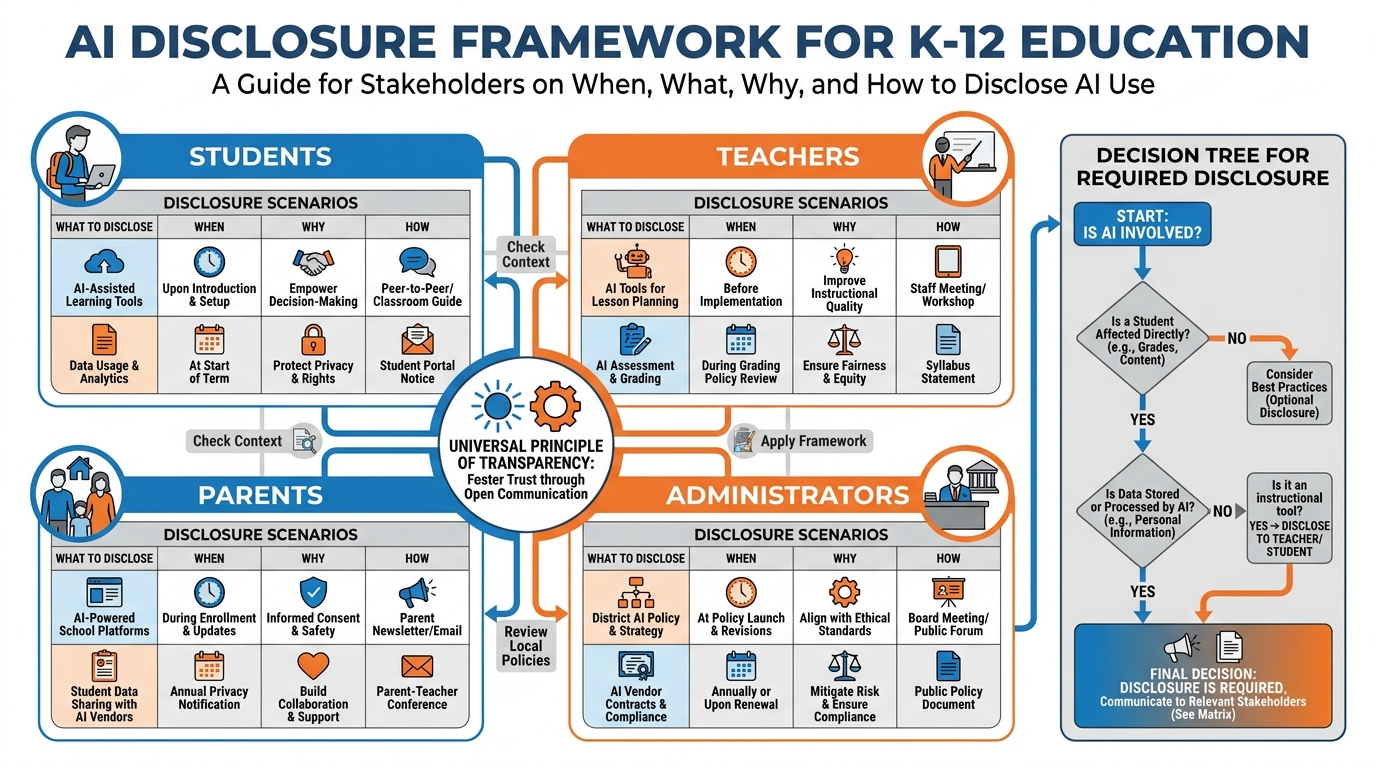

Figure 6: The Disclosure Framework. Transparency about AI use is not just a student issue. It’s a professional practice for teachers, a communication obligation to parents, and an institutional expectation from administrators, addressed differently in each context.

For Students:

Require explicit disclosure whenever AI contributed to submitted work, and be specific about the form. A blanket “I used ChatGPT” is not sufficient. Useful disclosure looks like this:

“I used [tool] for [specific purpose — e.g., generating counterarguments, checking grammar, explaining the concept of X in a different way]. I verified the information by [specific verification step]. The core argument and all final writing decisions are my own.”

This format does several things at once: it builds transparency habits, it forces students to actually know how they used AI (passive users often can’t answer this question), and it creates a record that helps you evaluate whether the use was appropriate.
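If you collect disclosures through a form or spreadsheet, the same template can be treated as a small structured record. The sketch below is one possible shape, offered as an illustration only: the class name `AIDisclosure` and its field names are assumptions, not part of any school system or tool. It shows how a blanket statement fails the specificity check while a complete one renders into the sentence format above.

```python
from dataclasses import dataclass


@dataclass
class AIDisclosure:
    """One student's AI-use statement. Field names are illustrative assumptions."""
    tool: str
    purpose: str
    verification: str

    def is_specific(self):
        # A blanket "I used ChatGPT" leaves purpose and verification empty.
        return all(part.strip() for part in (self.tool, self.purpose, self.verification))

    def render(self):
        if not self.is_specific():
            raise ValueError("Name the tool, the purpose, and a verification step.")
        return (
            f"I used {self.tool} for {self.purpose}. "
            f"I verified the information by {self.verification}. "
            "The core argument and all final writing decisions are my own."
        )


complete = AIDisclosure("Gemini", "generating counterarguments", "checking two cited sources")
blanket = AIDisclosure("ChatGPT", "", "")

print(complete.render())
print(blanket.is_specific())  # False
```

The check only confirms that each part was filled in. Whether the stated use was appropriate remains your judgment call.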

For Teachers:

Model what you want to see. When you use AI to generate discussion questions, say so. When you use AI to build a rubric draft, say so. When you use AI to differentiate a reading — say so. Not because you did something wrong, but because students learn professional AI use practices primarily from watching you practice them.

“I used Gemini to help me draft these discussion questions, then revised them based on what I know about our class. Here’s what I kept and what I changed, and why.” That five-minute debrief teaches more about thoughtful AI use than any policy document.

For Parents:

Proactive communication at the start of the year prevents the confused phone call in October. Send a short parent letter explaining your AI use policy, what AI tools students will and won’t use, what students are responsible for doing themselves, and how you’ll assess for genuine learning. This is not bureaucratic overhead. It’s professional communication that builds trust before misunderstanding has a chance to take root.

For Administrators:

Know your institutional requirements and follow them. Many districts have issued AI use policies in the last two years. If your school has one, your classroom policy should align with it. If your school doesn’t have one yet, your classroom policy is a contribution to the emerging institutional norm. Document what you’re doing and why. You’ll want that record.

7.9 Equity: The Digital Divide Has a New Shape

Here’s the equity reality that nobody in the AI enthusiasm conversation wants to face head-on: AI could be the largest accelerant of educational inequality in a generation, or it could be one of the most equalizing forces in the history of public education. The difference is almost entirely in how teachers use it.

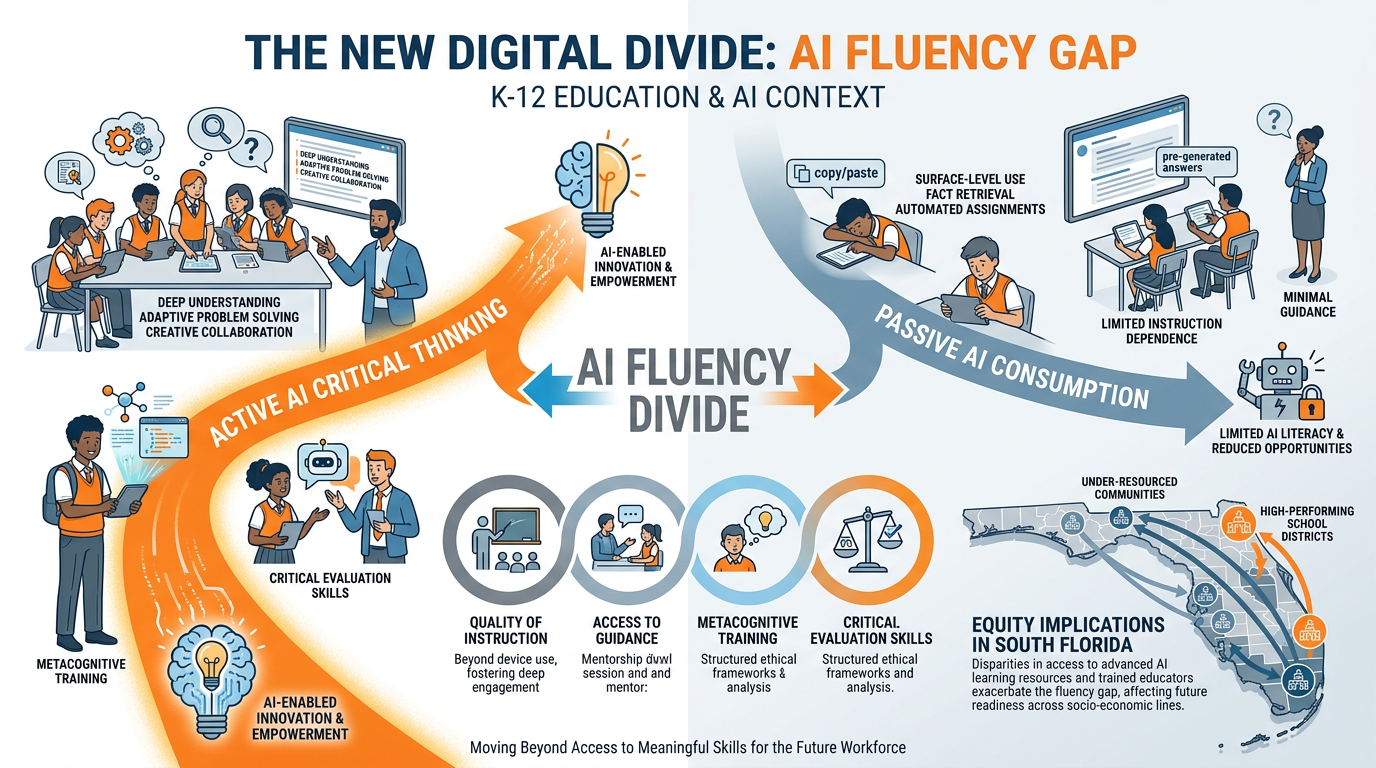

The old digital divide was about access: who has a computer, who has internet at home, who has a device in their hands. That divide still exists. But AI has introduced a second divide that’s less visible and more consequential: the AI fluency gap.

Figure 7: The AI Fluency Divide. The new equity gap is not about who has a device. It’s about who has a teacher helping them use it thoughtfully. Students in under-resourced schools are more likely to use AI passively. Students in well-resourced schools are more likely to learn critical AI use, and that gap compounds.

The AI fluency gap works like this:

Students in well-resourced schools, with teachers who have received training and time to redesign their pedagogy, learn to use AI as a cognitive tool — with intention, critical evaluation, and professional judgment. They learn to verify AI outputs, to use AI to extend their own thinking, to treat AI as a thinking partner. These students graduate AI-literate.

Students in under-resourced schools, with teachers who haven’t had the training or time, are more likely to experience AI as either a banned distraction (and therefore a novelty that gets used covertly without guidance) or an unmonitored shortcut that produces outputs without building understanding. These students graduate AI-dependent.

The compounding is brutal. AI-literate students enter college and the workforce with a genuine productivity advantage. AI-dependent students enter without the cognitive foundation to use these tools effectively for high-stakes work, and without the metacognitive skills to know what they’re missing.

What equity-conscious AI pedagogy looks like:

First, it treats every student as capable of sophisticated AI use — not just the AP students, not just the kids with the best devices, not just the students who’ve already had the conversations at home. Every student deserves explicit instruction in how to think with AI rather than just use it.

Second, it prioritizes access equity proactively. If some students don’t have reliable home internet, AI-based homework creates an inequitable situation. Design AI activities for in-school use, with devices and access you can ensure.

Third, it values the knowledge that doesn’t come from AI. Community knowledge, family knowledge, local knowledge, cultural knowledge — the things your students know that no model knows — are not inferior to AI-generated content. An assessment that values personal experience and community perspective is inherently more equitable than one that values polished prose that AI can generate for anyone.

The equity question is not “are we using AI?” It’s “are we using AI in ways that compound inequality or interrupt it?” You are the variable that makes the difference.

7.10 The Human Dimension and Caring: The Irreducible Zone of the Teacher

L. Dee Fink’s 2003 Creating Significant Learning Experiences proposed a taxonomy of learning that has never gotten quite the attention it deserves. Fink identified six interconnected categories of significant learning:

Foundational Knowledge — Understanding and remembering key information and ideas

Application — Skills, critical thinking, managing projects

Integration — Connecting ideas, people, realms of life

Human Dimension — Learning about oneself and others

Caring — Developing new feelings, interests, values

Learning How to Learn — Becoming a better, more self-directed learner

Look at that list again, and ask yourself: which of those can AI provide?

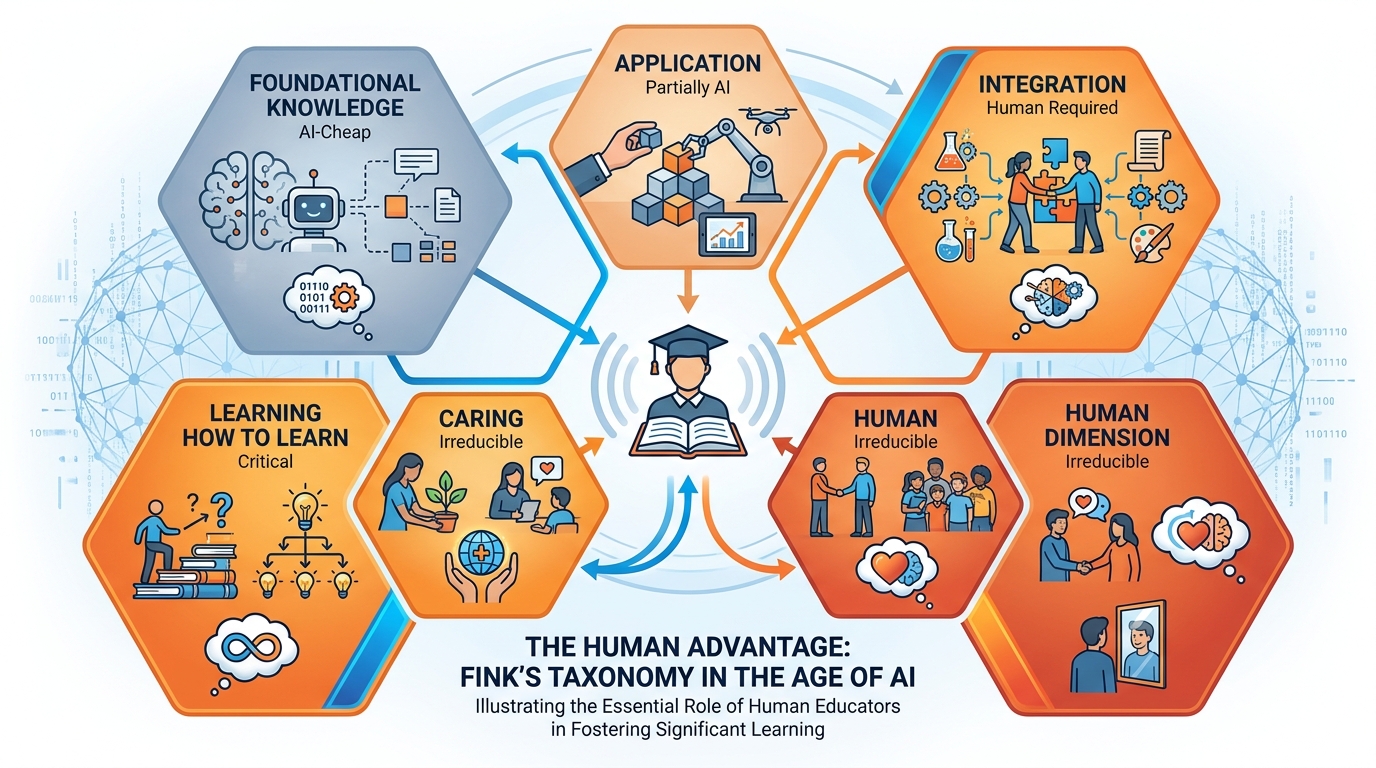

Figure 8: Fink’s Taxonomy in the AI Era. Foundational Knowledge, which used to consume most of instructional time, is now AI-cheap. The categories that require human guidance, human relationship, and human context are exactly the categories AI cannot provide.

Foundational Knowledge: Now AI-cheap. This doesn’t mean it doesn’t matter — foundations still matter enormously. But the delivery of foundational knowledge no longer requires you to stand at the front of the room for 45 minutes. AI can explain the nitrogen cycle in twelve different ways, at six different reading levels, with personalized examples, on demand. This is what AI is genuinely excellent at.

Application: Partially AI-assisted. AI can model application, generate practice problems, and provide feedback on student attempts. But real application — using knowledge to do something meaningful in the actual world — requires human judgment, human context, and real stakes that AI cannot fabricate.

Integration: Requires human guidance. Making connections across disciplines, across life, across time — this kind of synthesis is possible with AI support, but the meaning of the integration, the way it changes how a student sees themselves and their world, requires a human conversation. The teacher who says “this connects to what happened to your grandmother’s generation” has done something no AI can replicate.

Human Dimension: Irreducible. Learning about oneself — discovering that you’re capable of hard things, that your perspective matters, that your experience is connected to history and to other people — this is developmental work that happens in relationship. With peers. With teachers who see them. AI can’t see a student. It can’t know them. It can’t recognize growth.

Caring: Irreducible. The development of values, interests, passions — the “I care about this” that transforms a student from someone who does school into someone who becomes something. Teachers inspire this. Mentors inspire this. A chatbot does not inspire this.

Learning How to Learn: Critical and increasingly urgent. This is the metacognitive category — becoming a better, more intentional, more self-aware learner. In the AI era, this is perhaps the most important of Fink’s six categories, and it’s entirely a human teaching project. AI can provide resources, AI can offer feedback, but the development of a student who knows how to learn — who can direct their own intellectual development, who can evaluate what they know and don’t know, who can seek out the right resources and challenge the right assumptions — that is a human teacher’s greatest gift.

Here is the implication that matters most: Fink’s framework shows us that Foundational Knowledge, the category that historically consumed the most instructional time, is now the category that needs it least. And the categories that received the least instructional time (Human Dimension, Caring, Learning How to Learn) are the ones that are genuinely irreplaceable, genuinely developmental, and genuinely dependent on a human teacher’s sustained investment.

AI didn’t cheapen education. AI clarified what education was always really for.

7.11 Classroom Management of AI Use (Without Becoming a Cop)

Let’s be direct about the management reality: you cannot police AI use out of existence, and the attempt will cost you far more than it’s worth.

Phone bans, paper-only rules, Faraday cage dreams: these approaches assume that the problem is access and that restricting access solves it. It doesn’t. Students who are determined to use AI will find ways. Students who’ve been told it’s forbidden but not why will assume it’s forbidden because adults fear it, not because it has real risks. And teachers who spend their instructional energy running an AI detection operation have stopped teaching.

The management goal is not prevention. It’s structure with purpose.

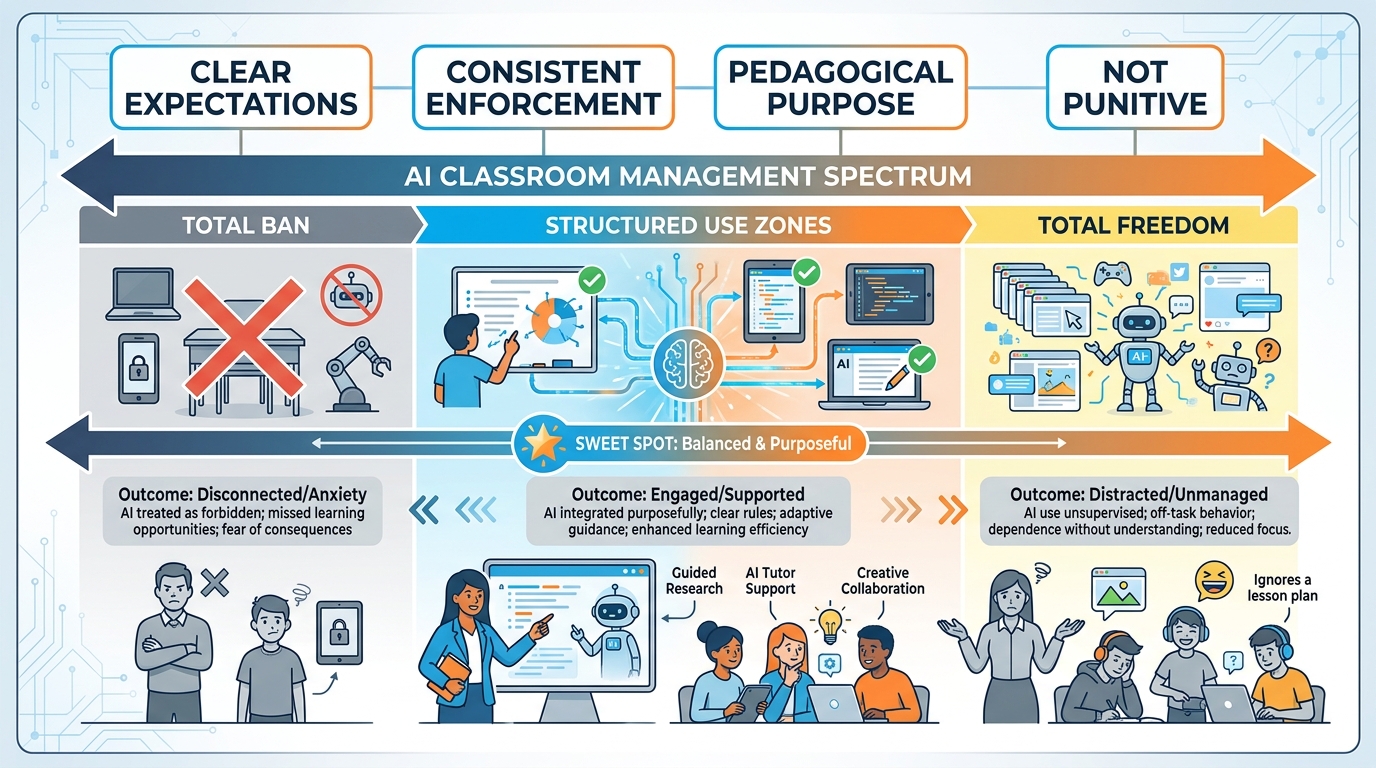

Figure 9: The Management Spectrum. Total ban and total freedom are both failure modes. The productive zone is structured, purposeful AI use with clear expectations, pedagogical rationale, and consistent norms: not punitive, but intentional.

The three-zone classroom:

Zone 1 — AI-Free: Some activities are designed to build cognitive capacity through pure student effort. Timed writing. The first draft. The initial problem-solving attempt. The oral response. These activities are AI-free not as punishment, but as intentional design. Tell students why: “This first fifteen minutes is you and the problem, no tools. Like a musician practicing scales — the difficulty is the point.” Students understand this when you explain it as pedagogy rather than restriction.

Zone 2 — AI-Assisted: The middle of many tasks is where AI as a thinking partner makes most sense. After students have their own first thinking on paper, they can use AI to challenge it, expand it, find counterarguments, look up related concepts. This is scaffolded use. Students are in charge; AI is a tool they’re directing. Structure this phase with specific prompts: “Use AI to find three objections to your argument. Then write a paragraph responding to the strongest one.”

Zone 3 — AI-Required: Some tasks explicitly require AI use and require students to show their work with it. “Use Gemini to research three perspectives on this issue. Document the prompts you used, the responses you received, and how you evaluated each source.” AI-required tasks teach AI fluency directly and give students legitimate reasons to use the tools you’ve seen them reach for anyway.

Be clear, be consistent, and be pedagogically grounded in every conversation about the rules. “Because I said so” produces resentment and covert noncompliance. “Because your brain needs this kind of practice to develop” produces — not always immediately, but over time — students who understand why the structure exists.

What to Do When Students Break the Rules

When you discover a student has used AI in a Zone 1 activity or without disclosure, the first response is not punishment — it’s curiosity. “Tell me about your thinking on this section.” “Walk me through how you came to this conclusion.”

Often, students who’ve used AI inappropriately can’t explain their own work. That gap — between what they submitted and what they can articulate — is itself a learning moment. Make it one.

The conversation that follows should address: what was the purpose of the restriction, what was lost by bypassing it, and what needs to happen now to build the understanding they missed. A redo, a conversation, a different assessment — not detention. The goal is learning, not compliance.

7.12 Communicating with Parents and Administrators

The most confused stakeholders in the AI education conversation right now are parents and administrators — not because they’re behind, but because they’re receiving wildly inconsistent messages from wildly inconsistent sources.

Some parents are terrified their children are learning to cheat. Others are frustrated that their child’s school is “banning the future.” Administrators are trying to write policies for technology that didn’t exist when their last policy was written, for a landscape that will look different by the time the policy is reviewed.

You are on the front lines of all of this. And your professional credibility depends on being able to communicate clearly, confidently, and honestly with both groups.

Communicating with Parents:

Lead with what hasn’t changed. Learning still requires effort. Struggle is still the engine. Your child still needs to think, write, argue, create, and demonstrate understanding — and your classroom still requires all of that. What has changed is the landscape of tools available, and your job is to make sure students know how to use them in ways that build rather than bypass their education.

Address the fear directly: “I know some parents worry that AI use in school is teaching students to cheat. Here’s how I think about it: cooking class doesn’t teach carelessness by using knives. Driver’s ed doesn’t teach recklessness by using cars. The tool isn’t the problem. What matters is whether students are learning to use the tool with intention and judgment, and that’s what this class is building.”

Explain the disclosure requirement. Parents understand honesty. “In my classroom, if students use AI, they document exactly how they used it and what they decided themselves. That transparency is a habit we’re building.” That framing makes sense to almost every parent.

Communicating with Administrators:

Document everything: how your learning objectives map to state standards, how your AI use policies align with district guidelines, and how your assessments measure genuine student understanding rather than AI output. Be ready to articulate why each AI activity serves a specific pedagogical purpose.

This documentation is not defensive paperwork — it’s professional clarity. Teachers who can explain what they’re doing pedagogically and why are the ones who get to keep doing it. Teachers who can’t explain it get their autonomy restricted.

Bring administrators examples of excellent AI-era student work — the oral defense that demonstrated genuine understanding, the portfolio that showed real intellectual development, the authentic task that required knowledge no AI could fake. Make the case with evidence. The administrator who understands what you’re building will become your ally, not your obstacle.

7.13 The AI-Era Teacher’s Operating System

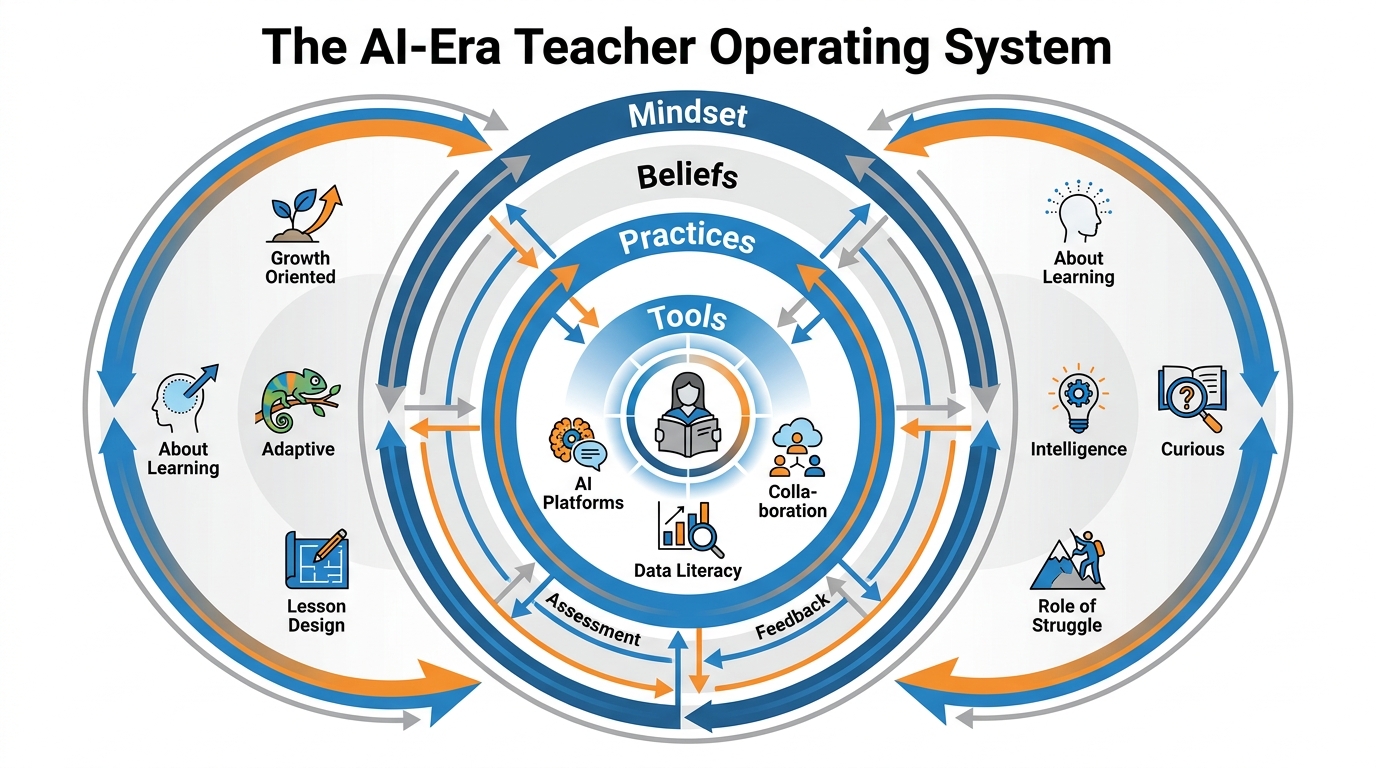

Everything in this chapter comes down to one question: Who are you as a teacher in this new landscape?

Not what tools do you use. Not what your policy says. Not what your district mandates. Who are you — as a professional, as an educator, as a person who has chosen to stand in a room with young people and help them develop?

Figure 10:The AI-Era Teacher’s Operating System. Mindset at the core, beliefs as the foundation, practices as the expression, tools as the instruments. Change the outer layers all you want — the OS that runs underneath determines everything.

Think of it as an operating system. The tools — the apps, the platforms, the AI models — are the software running on top. But underneath is the OS: the deeper layer of beliefs, values, and dispositions that determine how you use any tool, respond to any situation, make any decision.

Your OS has four layers:

Layer 1 — Mindset: Are you orienting toward learning or toward compliance? Do you believe your students are capable of sophisticated thinking? Are you genuinely curious about AI, or are you managing your fear of it? The teacher whose mindset is growth, curiosity, and professional confidence makes consistently better decisions than the one operating from anxiety and control.

Layer 2 — Beliefs About Learning: Do you believe struggle is necessary, or do you believe confusion is a problem to be solved? Do you believe relationship is load-bearing in education, or is it nice but optional? Do you believe that what you teach is less important than who you help your students become? These beliefs — often implicit, rarely examined — drive every pedagogical decision you make.

Layer 3 — Practices: Backwards design. Authentic assessment. Metacognitive prompting. Process documentation. Oral moments. Zone-based AI management. These are practices — repeatable, improvable habits that express your beliefs in real classroom decisions. The practices can be upgraded. Learn new ones. Try them. Revise based on what you see.

Layer 4 — Tools: AI platforms, apps, models, hardware. These change fast. Very fast. In three years, the specific tools we’ve discussed in this course will be outdated. Knowing tools is temporary knowledge. Knowing pedagogy is permanent. Teachers who understand the OS will adapt to any new tool that appears, because they know what they’re looking for and what they’re trying to do.

The single most important investment you can make in your professional development right now is not learning more AI tools. It’s deepening your understanding of how humans learn, why struggle matters, what authentic assessment looks like, and what only you can provide in a room full of young people.

That investment compounds. Every new tool that arrives — and they will keep arriving — will be something you can evaluate, adopt, adapt, or decline from a position of pedagogical clarity rather than anxious reaction.

The teacher who understands pedagogy deeply is never obsolete. The teacher who only knows the tools is always at risk of being replaced by the next release.

Chapter Summary

Seven chapters in. You’ve moved from the foundations of learning science through AI tools, ethics, subject-specific applications, and now to the heart of it: teaching itself.

Here are the seven things to carry out of this chapter:

1. The teacher is still the teacher. AI handles information delivery efficiently. Teachers provide relationship, context, judgment, and the human dimension that research consistently shows drives learning outcomes.

2. Bloom’s taxonomy has shifted. AI now handles Remember, Understand, and Apply cheaply. Design instruction and assessment at the Analyze, Evaluate, and Create tiers, where human cognition and guidance remain essential.

3. Start with the end. Backwards design (desired results, then evidence, then experiences) produces better alignment between what you teach, what you assess, and what students actually learn. AI accelerates every stage without replacing your professional judgment.

4. Preserve the struggle. Design lessons where AI can’t do the essential cognitive work for students. Process documentation, personal knowledge, oral moments, and problems-before-explanations all preserve the productive struggle that creates real learning.

5. Assess for understanding, not output. Oral defense, portfolio with revision history, performance tasks, and Socratic questioning are AI-era assessment forms that require genuine cognition. Move from product to process.

6. Metacognition is the central skill. Teaching students to plan, monitor, and evaluate their own thinking, including their AI use, is more important than teaching any specific tool. Flavell’s loop is your curriculum.

7. You inhabit Fink’s irreducible zone. Human Dimension, Caring, and Learning How to Learn cannot be provided by AI. These are your most important work. Foundational Knowledge is now AI-cheap; invest your time where only you can go.

📝 Case Study & Discussion Board (2 pts)

Case Study: Two Eighth Graders, One Assignment

Ms. Chen teaches eighth-grade English Language Arts in Miami. She assigned a literary analysis of The Giver with the prompt: “Analyze how Jonas’s society uses the concept of ‘sameness’ to control individual freedom.”

Keyla submitted a polished four-paragraph essay. The argument was coherent, the textual evidence was accurate, and the prose was fluent. When Ms. Chen asked Keyla to elaborate on her most interesting point in a brief hallway conversation, Keyla struggled to paraphrase her own argument and couldn’t remember which specific passages she’d cited.

Marcus submitted a rougher draft — three paragraphs, one citation, informal language. But in the same hallway conversation, Marcus immediately expanded his argument with two additional examples from the novel that weren’t in his essay, explained his reasoning behind the connection he made, and asked Ms. Chen whether she thought Jonas’s father’s role in releasing twins was a form of moral dissonance or just rule-following. Ms. Chen had to think before answering.

Both students used AI. Only one learned.

Discussion Prompt (minimum 250 words):

1. What’s the difference between how Keyla and Marcus used AI? Connect your analysis to at least one theory or framework from this chapter: Bloom’s taxonomy, Fink’s taxonomy, Kapur’s productive failure, or Flavell’s metacognition.

2. Ms. Chen suspects Keyla outsourced the thinking but has no hard evidence. What should she do? What shouldn’t she do? Discuss the assessment design, the response to the student, and any implications for the class policy.

3. What change to the assignment design would have made Keyla’s approach less likely, not by banning AI but by making the bypass less productive than doing the actual thinking?

Discussion Guidelines:

- Your initial post must be a minimum of 250 words.

- Include at least one scholarly citation (APA format) from a source covered in this chapter or your own research.

- Respond meaningfully to at least two peers with substantive engagement, not just agreement (minimum 75 words per response).

- Due: Initial post by Wednesday, responses by Sunday.

🧪 Hands-On Lab: Redesign a “Before-AI” Lesson into an AI-Aware Lesson Plan (10 pts)

Overview

You are going to take a lesson you’ve actually taught — one that was designed before AI changed the landscape — and rebuild it from the ground up using the frameworks from this chapter. This isn’t a theoretical exercise. By the end, you’ll have a real lesson plan you can use.

Time required: Approximately 90 minutes

Tools: Gemini (gemini.google.com), Google Docs

Points: 10

Step 1: Select and Document Your “Before-AI” Lesson (15 min)

Choose a real lesson you’ve taught, or one you know well from your student teaching or curriculum materials. It should be a lesson that feels vulnerable to AI shortcuts — where the main student deliverable is something AI could now produce easily.

Open a Google Doc. Write a brief description of the lesson: subject, grade level, learning objective, the main activity, and the assessment. Be honest about what part of the lesson AI can now complete without the student actually learning.

Step 2: Bloom’s Audit with Gemini (20 min)

Share your lesson description with Gemini and prompt:

“Here is a lesson I designed before AI tools were widely available: [paste your lesson description]. Using Bloom’s Revised Taxonomy, audit this lesson. Which cognitive levels does it target? Which parts of the lesson could a student now outsource to AI without meaningful learning loss? Where is the lesson most vulnerable to the AI bypass problem?”

Read the response critically. Push back where you disagree. Ask follow-up questions. This is your audit — own it.

Document: What did Gemini identify? What did you agree with? What did you see that Gemini missed?

Step 3: Redesign for Higher-Order Thinking (30 min)

Now redesign the lesson. Your redesigned version must:

- Shift the primary cognitive demand to the Analyze, Evaluate, or Create tier

- Include at least one “Zone 1 — AI-Free” component that preserves productive struggle

- Include at least one authentic assessment component (oral, performance, portfolio, or process-based)

- Have a disclosure plan: if AI is permitted at any stage, what must students document?

Use Gemini to help you brainstorm: “Here is my original lesson and what I want to change about it. Help me generate five different approaches to redesigning the assessment so it requires genuine understanding rather than AI-producible output.”

You choose what to keep. Gemini doesn’t decide — you do.

Step 4: Group Build (20 min)

Working with your group, use AI to identify a real lesson from your collective teaching context that’s vulnerable to AI shortcuts. Don’t pick a hypothetical — pick something actual.

Use Gemini as a thinking partner: “We’re a group of teachers. Here’s a lesson we all recognize as vulnerable to AI shortcuts: [description]. What makes it vulnerable, and what redesign approaches would preserve the essential learning while allowing AI to support the parts where it genuinely helps?”

Rebuild the lesson together. Then be ready to discuss with the class:

- What you found in the lesson that needed changing

- How AI helped you see the problem and develop the solution

- What your rebuilt lesson looks like

- What the AI got right about your situation, and what it missed

Submission

Submit to Canvas:

- Your Google Doc showing the original lesson, the Bloom’s audit, and the redesigned lesson plan

- A 200-word reflection: What surprised you most about auditing your own lesson? What’s the hardest part of redesigning for higher-order thinking?

Grading rubric:

| Criterion | Points |
|---|---|
| Original lesson clearly documented with honest AI-vulnerability assessment | 2 pts |
| Bloom’s audit shows genuine engagement with the taxonomy | 2 pts |
| Redesigned lesson shifts cognitive demand to upper Bloom’s tiers | 3 pts |
| Authentic assessment component is genuinely AI-resistant | 2 pts |
| Group Build participation and class discussion | 1 pt |
| Total | 10 pts |

🎯 In-Class Assignment: Pedagogy First (10 pts)

Details and instructions will be provided in class.

Points: 10

Glossary

Authentic Assessment: Assessment that requires students to demonstrate understanding through performance in realistic, meaningful contexts, rather than through AI-producible artifacts like standard essays or multiple-choice tests.

Backwards Design (Understanding by Design): A curriculum design framework (Wiggins & McTighe, 1998) that begins with desired learning outcomes, then determines acceptable evidence, then plans instruction, rather than starting with content coverage.

Bloom’s Revised Taxonomy: Anderson & Krathwohl’s (2001) revision of Bloom’s (1956) classification of cognitive learning into six levels: Remember, Understand, Apply, Analyze, Evaluate, Create. In the AI era, the lower three levels are increasingly AI-automatable.

Bypass Problem: The phenomenon in which AI enables students to produce the appearance of learning (a completed output) without performing the cognitive work (the thinking) that would constitute actual learning.

Cognitive Disequilibrium: Piaget’s term for the discomfort of encountering information that doesn’t fit existing schemas, the state that drives genuine learning through schema restructuring.

Desirable Difficulties: Bjork’s term for learning conditions that feel effortful or frustrating in the short term but produce superior long-term retention, including spaced practice, retrieval practice, interleaving, and generation effects.

Fink’s Taxonomy of Significant Learning: Fink’s (2003) framework identifying six categories of significant learning: Foundational Knowledge, Application, Integration, Human Dimension, Caring, and Learning How to Learn. In the AI era, Foundational Knowledge is AI-cheap while Human Dimension, Caring, and Learning How to Learn remain irreducible.

Metacognition: Flavell’s (1979) term for knowledge and control of one’s own cognitive processes, including planning how to approach a task, monitoring understanding during it, and evaluating one’s learning after it. In the AI era, metacognition is the capacity to regulate AI use rather than be regulated by it.

Productive Failure: Kapur’s framework in which students attempt challenging problems before receiving instruction, generating wrong answers that create “intellectual need” and improve learning when instruction subsequently arrives.

Productive Struggle: Cognitive effort that is challenging enough to activate deep processing but achievable enough to maintain engagement. This is the optimal zone for learning, as opposed to both trivial ease and unproductive frustration.

Zone-Based AI Management: A classroom management approach that designates different activities as AI-Free, AI-Assisted, or AI-Required based on pedagogical purpose, not prohibition. Students learn to navigate zones with intentionality rather than covert noncompliance.

Chapter 7 of 8 — AI Thinking for Educators · Dr. Ernesto Lee · Miami Dade College