The Long View: Education's Next Decade

The future isn't something that happens to you. It's something you teach into being.


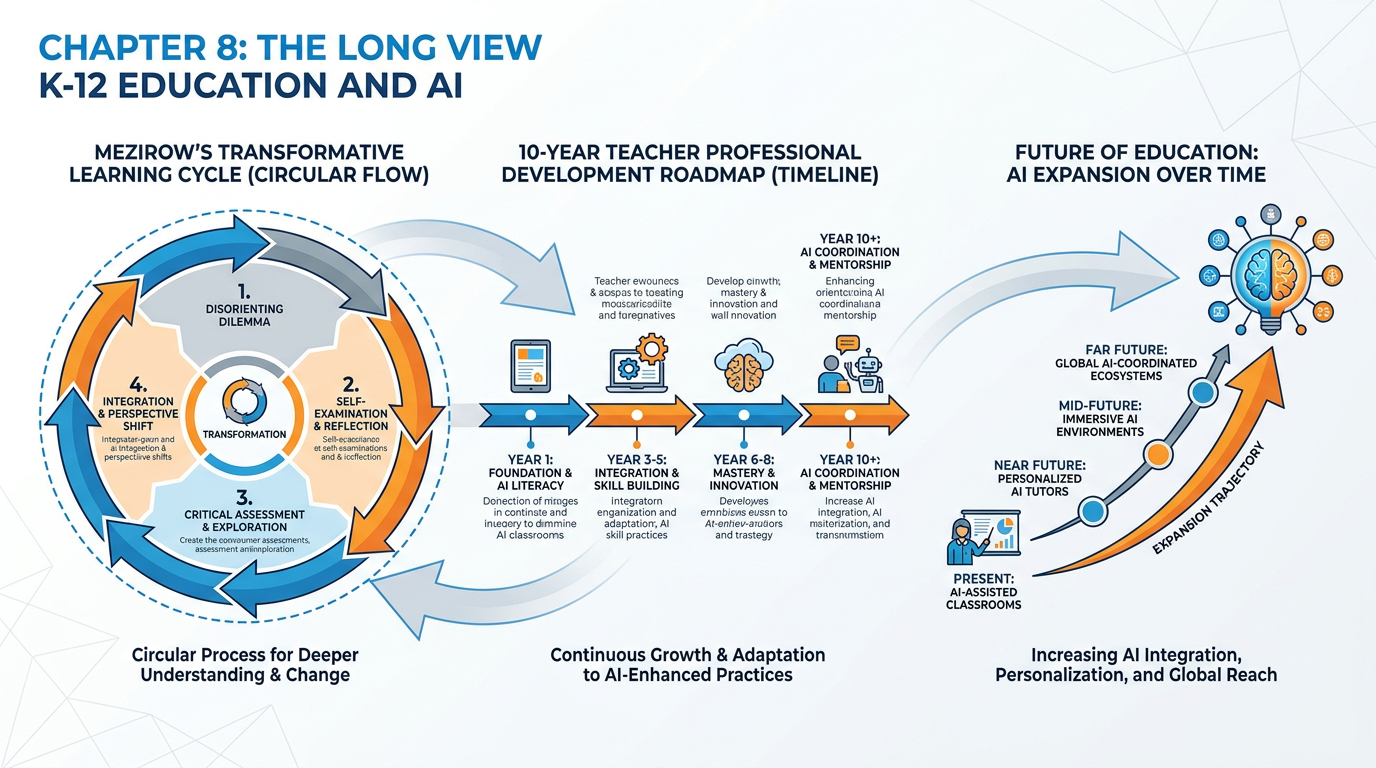

Figure 1: Chapter 8 at a Glance. Three interconnected ideas: how AI becomes a disorienting dilemma that transforms you as an educator, what the next decade actually looks like, and where you are headed on your own 10-year arc. By the end of this chapter, each of these will feel like a compass, not a map.

There’s a moment every teacher remembers. Not the first day of school, though that matters too. A different kind of moment — the moment something you believed about your craft turned out to be incomplete. A student you misjudged. A lesson that failed spectacularly and taught you more than the ones that worked. A discovery that reordered your mental model of what learning actually is.

Mezirow called these moments disorienting dilemmas. He wasn’t being dramatic. He was being precise.

AI is doing that to the teaching profession right now. Not gradually and quietly, but at a scale and speed that doesn’t give you time to adjust incrementally. The ground is shifting under a craft you’ve spent years building. And the question is not whether it shifts — it already has. The question is what kind of teacher you become on the other side.

This final chapter is about the long view. It’s about understanding Mezirow well enough to recognize that what’s happening to you — the discomfort, the confusion, the “I don’t know how to teach this anymore” feeling — is not failure. It’s the beginning of transformation. And transformation, when you understand the process, is something you can navigate with intention instead of just surviving.

We’ll look at what’s actually coming in the next decade — not hype, not panic, just the most honest reading available of where this is headed. We’ll look at what will absolutely not change, because those anchors matter more now than they ever have. And then we’ll look at you: where you are, where you could be in ten years, and the letter waiting for you on the other side.

8.1 AI Is the Disorienting Dilemma, and the Encounter Is the Curriculum

Jack Mezirow spent his career trying to understand how adults change. Not just learn new things, but genuinely transform — shift their frame of reference, the deep-seated assumptions through which they interpret the world and their role in it.

In 1978, studying women returning to college after years outside the workforce, he noticed something. The most profound learning didn’t happen through the curriculum. It happened through crisis — through encounters so jarring that the existing mental model could not accommodate them without restructuring. He called these encounters disorienting dilemmas: experiences that don’t fit what you already believe, and that therefore demand either that you dismiss the experience or rebuild the belief.

Transformative learning — real transformation, not just information accumulation — begins with a disorienting dilemma. It cannot begin any other way.

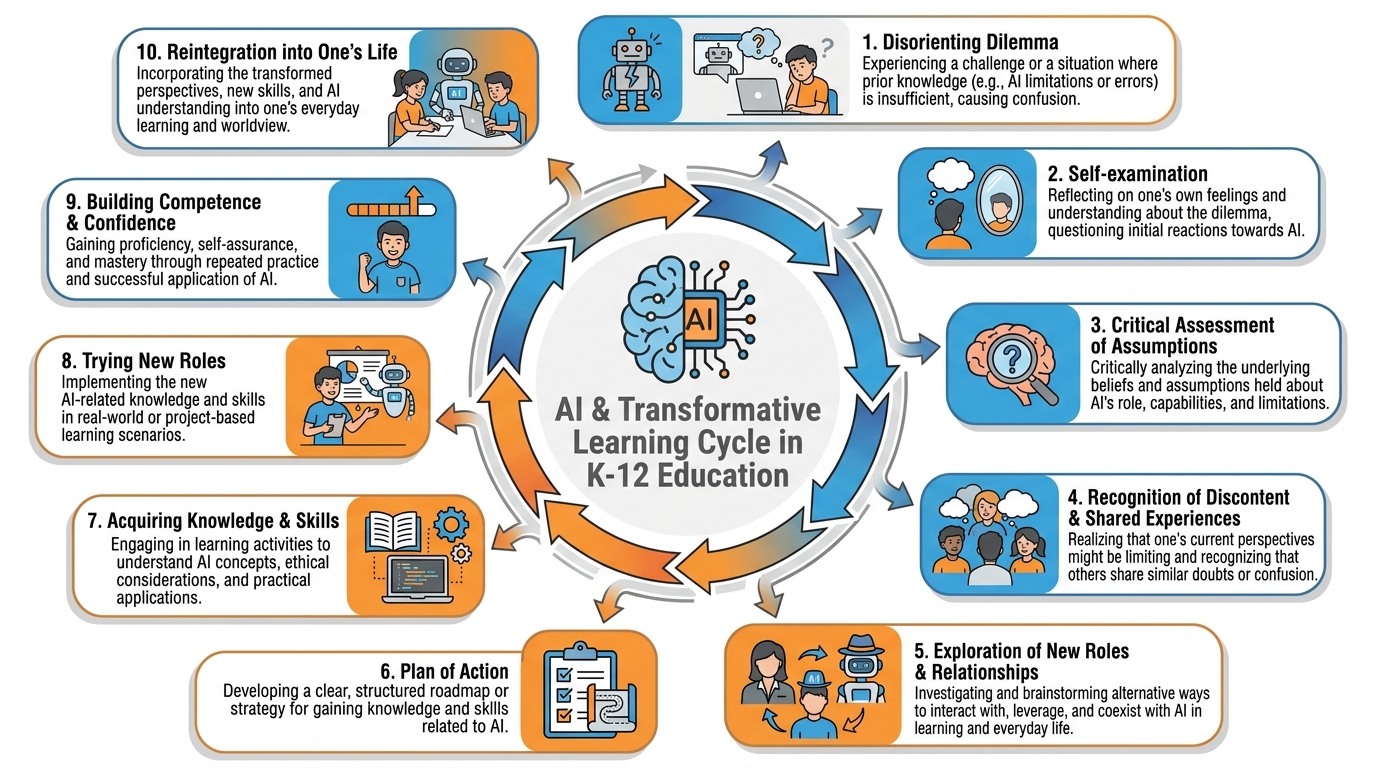

Figure 2: Mezirow’s Transformative Learning Cycle. The cycle does not begin with curiosity. It begins with disruption. Every phase requires something of the learner: honesty about assumptions, courage to explore new frames, willingness to act on a perspective that isn’t yet comfortable. AI is activating this cycle in educators worldwide.

Here are the ten phases Mezirow identified, and here is what they look like right now, in 2025–2026, for a K-12 teacher encountering AI:

Phase 1 — Disorienting Dilemma: A student submits an essay that is better than anything they’ve written before. You read it and feel something wrong. You run it through an AI detector. The result is inconclusive. You don’t know what to do.

Phase 2 — Self-Examination: You start asking questions that are uncomfortable. Have I been assigning the right things? Is my rubric measuring what I think it’s measuring? Am I teaching skills or just tasks?

Phase 3 — Critical Assessment of Assumptions: You realize that the essay assignment you’ve given every year for fifteen years was built on an assumption — that producing the essay was the learning. Maybe it was only ever the evidence of learning. Maybe those are different things.

Phase 4 — Recognition That Others Share the Discontent: You go to a faculty meeting and find out that every teacher in your building is wrestling with the same thing. You find an online community of thousands of educators asking the same questions. The isolation lifts.

Phase 5 — Exploration of New Roles: You try something. Maybe you redesign the assignment so the AI does the first draft and the student’s job is to improve it, argue with it, cite where it’s wrong. Maybe you go oral — sit with each student for ten minutes and have them explain their thinking. You don’t know if it’s working. You do it anyway.

Phase 6 — Planning a Course of Action: You decide to take this course. You decide to read. You attend a conference. You start building a new framework — not perfectly, but intentionally.

Phase 7 — Acquiring Knowledge and Skills: You learn what AI actually is. You learn prompt engineering. You learn about cognitive load and desirable difficulties and why smooth AI responses can hollow out learning if you’re not careful. You build Gems and use NotebookLM and run an AI Studio experiment with your juniors.

Phase 8 — Trying on New Roles: You facilitate instead of lecture. You design instead of deliver. You ask questions instead of answering them. It feels strange. Some days it fails. You try again.

Phase 9 — Building Competence and Self-Confidence: One day, a student says something that surprises you — a genuine insight, a creative synthesis, a question you hadn’t thought to ask — and you realize your redesigned environment produced it. That’s the moment. That’s when the new framework starts to feel like yours.

Phase 10 — Reintegration: You’ve changed. Not back to who you were before the dilemma. Into someone who carries the old craft and the new understanding together. A teacher for the present, not despite the disruption but because of it.

| Teaching = Delivering Content | Teaching = Designing Conditions for Learning |
| --- | --- |
| I am the expert; I transfer knowledge to students | I am the architect of cognitive experiences |
| The lesson is the curriculum | The encounter is the curriculum |
| Assessment measures retention | Assessment reveals thinking, not just retention |
| AI is a threat to academic integrity | AI is a tool in the service of deeper learning |
| The goal: students who can answer questions correctly | The goal: students who can think through hard problems |

Mezirow was explicit about one thing: transformation cannot be imposed from the outside. A workshop cannot transform you. A mandate cannot transform you. Reading a book, even this one, cannot transform you. The transformation happens inside you, in the encounter with the disorienting dilemma, in the willingness to do the hard work of phases 2 through 9.

But the encounter can be prepared for. The phases can be navigated with more skill and less suffering when you understand what they are. That’s what this chapter offers: not a shortcut through the transformation, but a map of the territory you’re already in.

8.2 What’s Coming in the Next 24 Months

Let’s be concrete. The education AI landscape is moving fast enough that any specific prediction has an expiration date — but certain structural shifts are close enough to visible that you can plan for them right now.

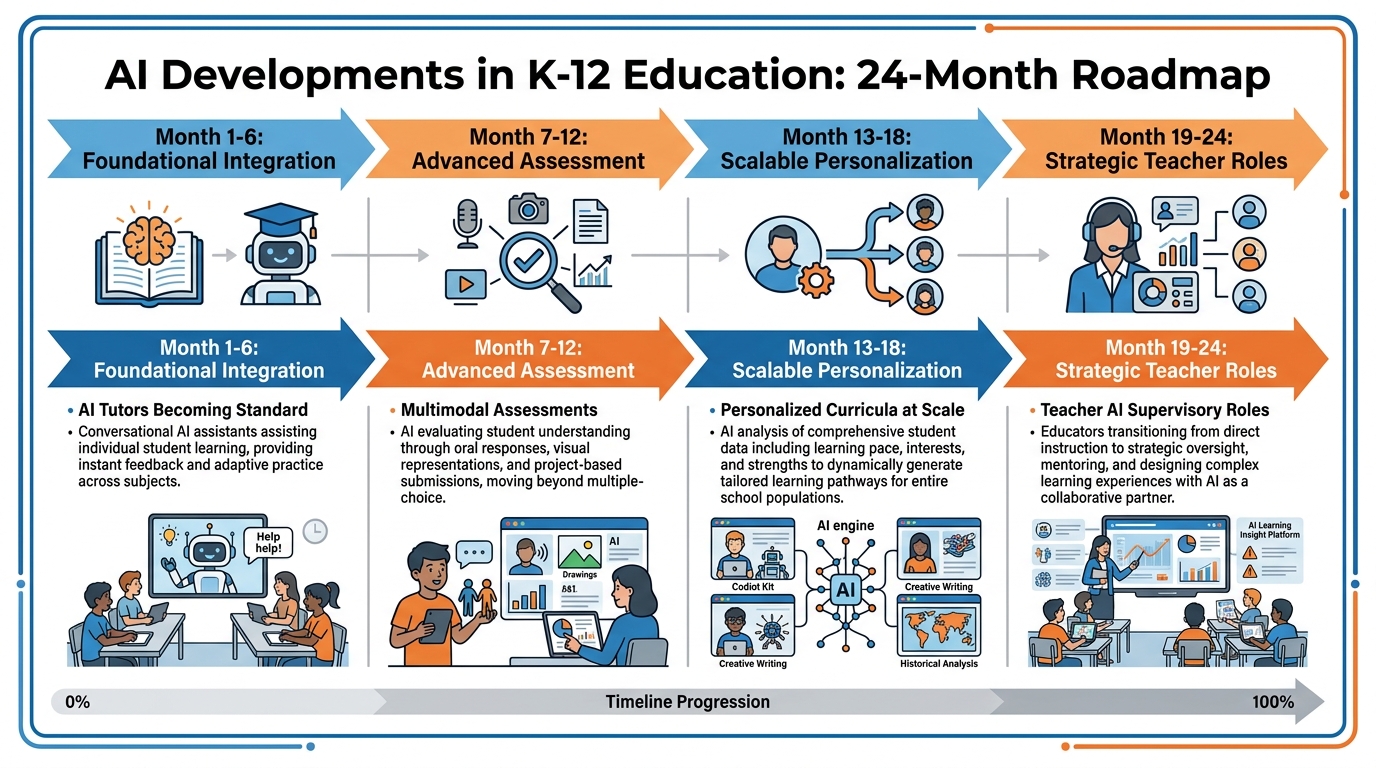

Figure 3: The Next 24 Months in Education AI. These aren’t speculative predictions; they’re extrapolations from products already in beta, pilots already underway, and policies already in the regulatory pipeline. Your job is to be ahead of the wave, not under it.

Months 1–6: AI tutors go from supplemental to standard. Products like Khan Academy’s Khanmigo, Google’s Gemini for Education, and Microsoft’s Reading Coach are moving from “interesting experiment” to “expected infrastructure.” Districts that invested in pilot programs in 2024–2025 are scaling. Districts that didn’t are scrambling. The baseline expectation — that students have access to AI-assisted explanation, feedback, and practice outside class time — is becoming normalized.

What you need to do now: Get ahead of your district’s rollout. Understand what AI tutor your students will have access to, what its guardrails are, what it can and cannot do. Don’t wait for the mandatory training. Know it before you need to explain it.

Months 7–12: Multimodal assessments start disrupting text-only design. AI can write a competent high-school-level essay on almost any topic. Increasingly, AI can also write competent code, solve standard math problems, and produce reasonable lab reports. The assessment formats that remain genuinely student-diagnostic are the ones that can’t be completed by AI without a human in the loop: oral defense, live problem-solving, creation of novel artifacts, performance tasks. Districts and exam boards are moving, slowly and imperfectly, in this direction. Florida is not exempt.

What you need to do now: Start practicing assessment formats that place the student’s thinking in the foreground. Oral check-ins, think-alouds, process documentation, revision narratives. These are not new pedagogical ideas — they’re old ones that AI has made newly urgent.

Months 13–18: Personalized curriculum engines hit the classroom. Not just adaptive practice tools — full curriculum scaffolding platforms that can generate differentiated versions of a lesson for ELL students, students with IEPs, advanced learners, and grade-level students simultaneously. The promise is real. The implementation challenges (privacy, equity, teacher control, accuracy) are also real. Your district will be confronted with a sales pitch during this window. You’ll need the framework to evaluate it.

Months 19–24: AI-supervisory teacher roles emerge. As AI takes on more of the routine instructional scaffolding, a new teacher task emerges: auditing the AI. Checking that the AI’s explanation of photosynthesis is accurate and grade-appropriate. Noticing that the AI has generated a reading passage that is culturally insensitive. Intervening when the adaptive algorithm has mislabeled a student’s learning level. This is not a minor add-on. It is a professional skill that will be expected of you — and currently almost no teacher preparation program teaches it.

8.3 The Personalized Curriculum: Promise and Peril

The dream is beautiful. Every student learning at their own pace, in their own style, with instruction calibrated to exactly where they are and exactly where they need to go. Not thirty students getting the same lesson at the same moment — thirty individual learning trajectories, each optimized in real time.

This is not science fiction. It is the near-term direction of AI-powered education, and the promise is genuine.

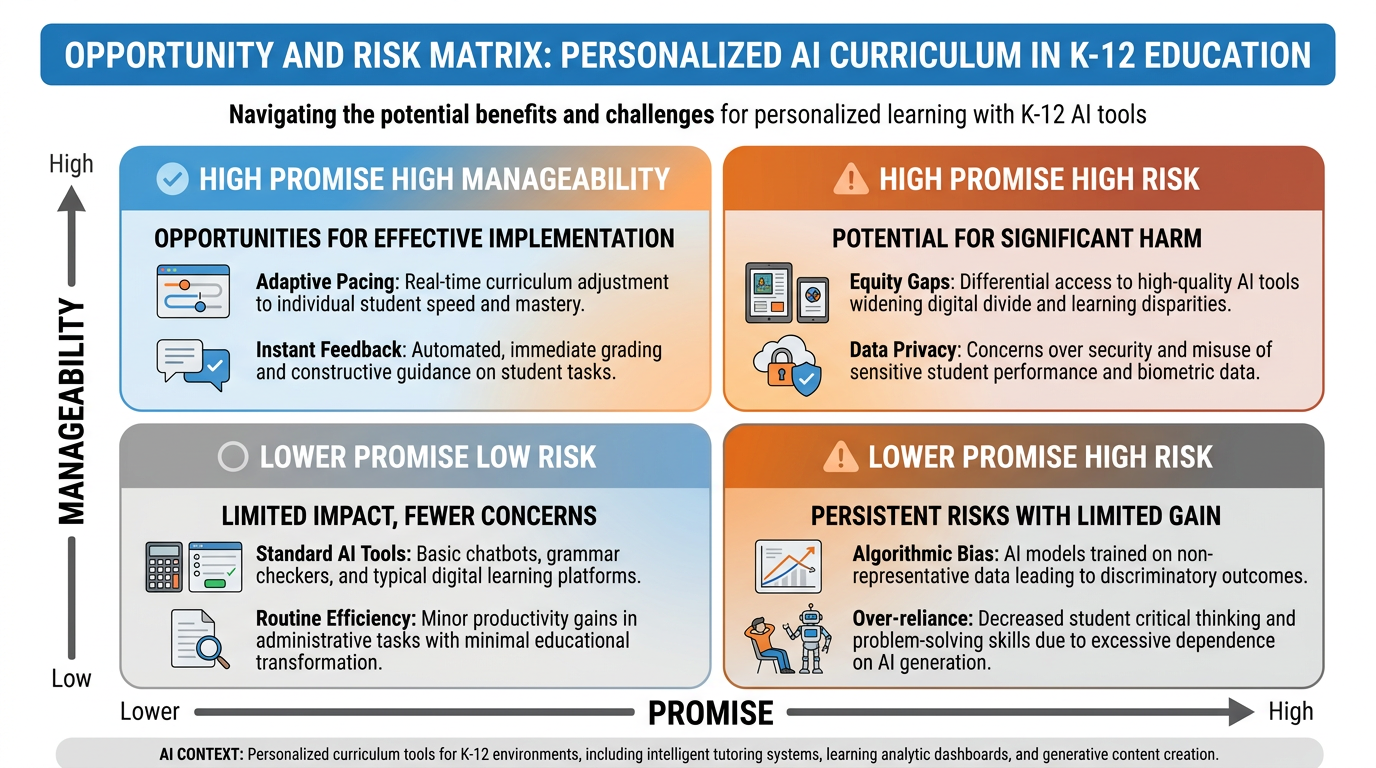

Figure 4: The Personalized Curriculum Matrix. Promise and peril share the same address. The same technology that can accelerate equity can deepen inequality. The difference is not in the tool; it is in who controls it, who audits it, and what values are embedded in its design.

The promise, honestly stated:

A student who struggles with fractions doesn’t have to fall behind the class while the teacher moves on. An ELL student can have complex texts summarized and explained in their home language before engaging with the English original. A gifted student who has mastered fifth-grade content can access seventh-grade material without leaving their classroom or their friends. Students with anxiety can practice difficult skills in low-stakes private conversations with an AI that doesn’t judge them. Students who need ten repetitions instead of three can get ten repetitions without consuming the teacher’s finite attention.

None of this was possible at scale before. All of it is possible now.

The peril, honestly stated:

Personalization at scale requires data — massive, granular, longitudinal data about every student’s learning behaviors, errors, and progress. That data has enormous value to entities whose interests are not aligned with students’ wellbeing. The history of EdTech privacy is not reassuring. Surveillance creep — the gradual expansion of data collection beyond its original stated purpose — has occurred in nearly every major EdTech deployment of the past two decades.

Algorithmic labeling is real. A system that flags a student as “low achiever” based on early data can shape every subsequent interaction the student has with the platform — and with teachers who consult it. Students from historically underserved communities are most vulnerable to being mislabeled, most likely to be in districts with fewer resources to audit algorithmic outputs, and most likely to bear the long-term consequences of bad labels.

Personalization can also mean isolation. A classroom where every student is on their own learning pathway has gained efficiency and lost something harder to measure: the productive friction of encountering someone who thinks differently than you do, the social negotiation of shared knowledge, the experience of learning together that is not just a pedagogical nice-to-have but a developmental necessity.
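The labeling risk described above is easier to question in a vendor demo if you have seen how little it takes to produce it. What follows is a minimal sketch, not how any real platform works: the threshold, the function names, and the scores are all invented for illustration. It compares a label that is set once from early data with a label that is recomputed from recent work.

```python
from statistics import mean

# Hypothetical cutoff: an average below this earns the "low achiever" flag.
THRESHOLD = 60


def label_once(scores, early_n=3):
    """Label a student from only the first `early_n` scores and never revisit it."""
    return "low achiever" if mean(scores[:early_n]) < THRESHOLD else "on track"


def label_rolling(scores, window=3):
    """Re-label from the most recent `window` scores, so the label can change."""
    return "low achiever" if mean(scores[-window:]) < THRESHOLD else "on track"


# Invented scores for one student who starts slowly and then improves.
scores = [40, 55, 50, 70, 80, 85]

print(label_once(scores))     # decided by the first three scores only
print(label_rolling(scores))  # decided by the latest three scores
```

The same student, with the same work, ends up with two different labels depending on one design choice. When you evaluate a platform, a useful question is which of these two behaviors it resembles, and who is able to see and correct the label.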

8.4 Multimodal Learners and Their New Superpowers

Something genuine is happening in classrooms right now that deserves more celebration than it gets.

Students who have always learned better through hearing than reading — who struggled with dense text but lit up when the same content was explained aloud — now have a tireless companion that will read to them, explain in audio, generate diagrams, create simulations, and answer follow-up questions in any modality they need. Students who think visually can watch AI generate a concept map in real time. Students who learn by doing can run AI-powered simulations of historical events, scientific processes, and economic systems at negligible cost.

The theory underneath this is not new. Howard Gardner’s multiple intelligences framework (1983) — whatever its limitations as a scientific theory of cognitive architecture — pointed at something real: that the modality in which a student encounters information affects how well they can process and retain it. Richard Mayer’s Multimedia Learning Theory (2001) gave this a more rigorous foundation: learning is deeper when it combines verbal and visual channels in a way that doesn’t overload working memory.

What’s new is the access. Multimodal instruction used to require significant production resources — video, animation, audio, physical manipulatives — and careful pedagogical design. Now any teacher with a Gemini account can generate a concept in five modalities in ten minutes.

The superpower for students isn’t just access to modalities. It’s agency over their own learning pathway. A student who knows they learn better through conversation can now have that conversation, on demand, at 11 p.m. before a test. A student who needs three repetitions in visual format before text clicks can get those three repetitions without holding up the class or embarrassing themselves by asking again.

The teacher’s role in this is curatorial: knowing which modalities work for which students, designing experiences that invite students to discover their own most effective learning pathways, and refusing to let “the AI will handle it” become an excuse for less thoughtful differentiation rather than more.

8.5 What Won’t Change: Human Bandwidth, Human Care, Human Witness

Here is the most important paragraph in this chapter.

No matter what AI becomes in the next ten years — no matter how sophisticated, how personalized, how eerily human-like — there are things it will not do, because those things require something AI does not have: a stake in the outcome.

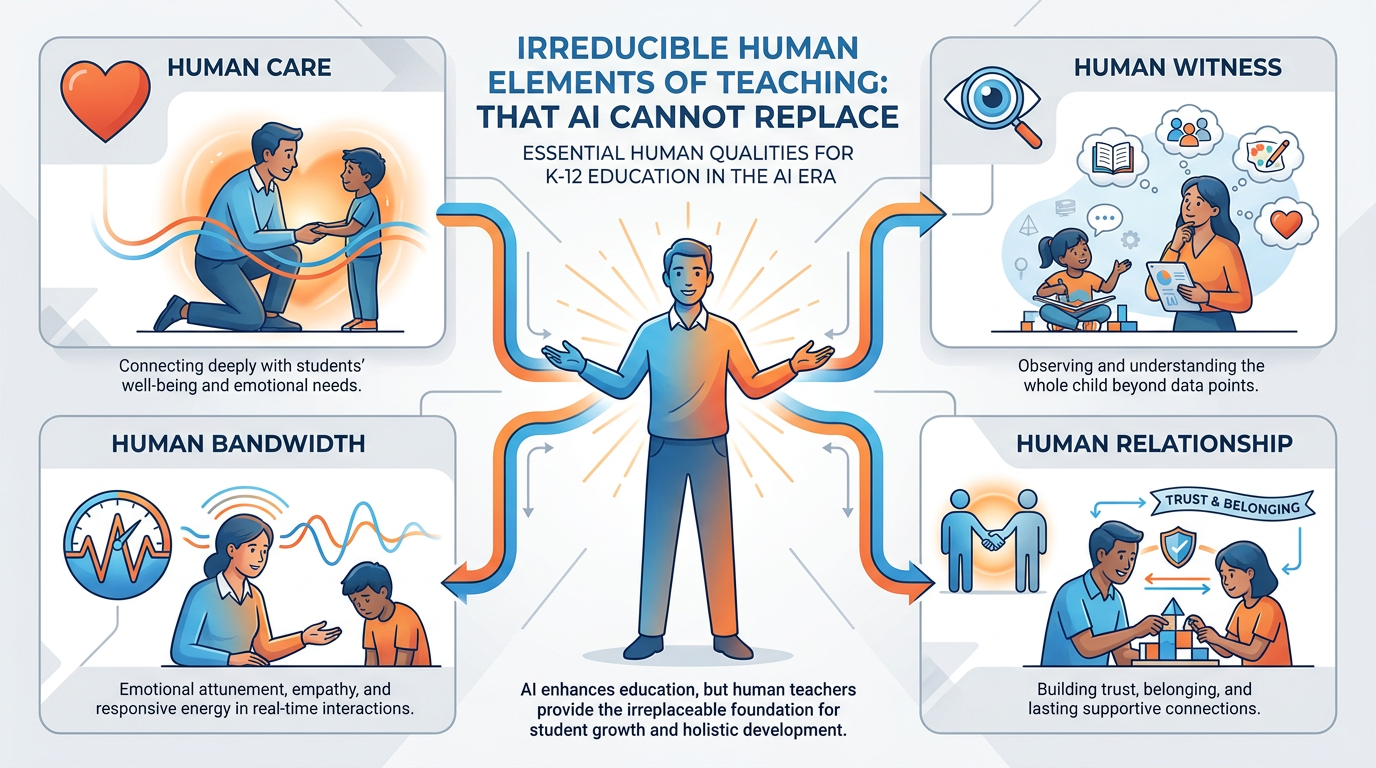

Figure 5: The Irreducible Human. These are not soft skills. They are the load-bearing structure of genuine education. Every piece of research on what separates teachers who change students’ lives from teachers who don’t points to these same elements. AI, no matter how capable, does not have a stake in the outcome.

Human Care. AI cannot care. It can simulate the linguistic markers of care — warmth, encouragement, interest, patience — with remarkable accuracy. But simulation is not the thing itself. Care is not just saying the right things. It is being genuinely affected by another person’s struggle. It is the 6 a.m. email you send because you were still thinking about a student at midnight. It is the adjustment you make to Wednesday’s lesson because you could see on Monday that something had shifted in the room. This is not incidental to teaching. It is the mechanism through which many students become capable of learning at all.

Human Witness. There is something that happens when a human being looks at another human being and truly sees them — not their test scores, not their demographic profile, not their IEP, but them. The child who hasn’t slept because her parents are fighting. The boy who is brilliant in ways the curriculum never measures. The student who has been told they’re not smart and has decided to believe it. Being seen — really seen — by another human being is a precondition for many students’ willingness to take the risks that learning requires. AI cannot witness. It can process. It can infer. It can generate responses calibrated to what the data suggests about a student. But the student knows the difference.

Human Relationship. Learning happens in relationship. This is not a platitude. It is one of the most replicated findings in developmental and educational psychology. Students take more risks, persist longer in difficulty, and develop more robust identities as learners in classrooms where they feel genuinely connected to the teacher and to each other. The mechanism is trust: the cognitive and emotional safety to be wrong, to try, to fail, to try again. AI cannot build this kind of relationship. It can be warm. It can be patient. It can remember previous conversations. But it cannot earn trust the way a human does, through the accumulation of shared experience, mutual investment, and the willingness to be present for someone even when it’s hard.

Human Bandwidth. Teachers read rooms. They notice micro-signals — the slight slump in a student’s posture, the edge in a student’s voice, the quiet that’s different from the usual quiet. They make real-time decisions about when to push and when to let go, when to address the whole class and when to have a private conversation, when the lesson plan should be abandoned because something more important is happening in the room. This is not a skill AI will develop because it is not primarily a cognitive skill. It is an embodied, social, relational skill that emerges from being a human body in a room with other human bodies, sharing the same air, the same history, the same moment.

None of this means that teachers are irreplaceable because they’re human. It means teachers are irreplaceable because they do human things — and those human things are load-bearing in the structure of learning in ways that no amount of algorithmic sophistication can substitute.

8.6 Designing for Tomorrow’s Minds: A Look at the Cognitive Light Cone

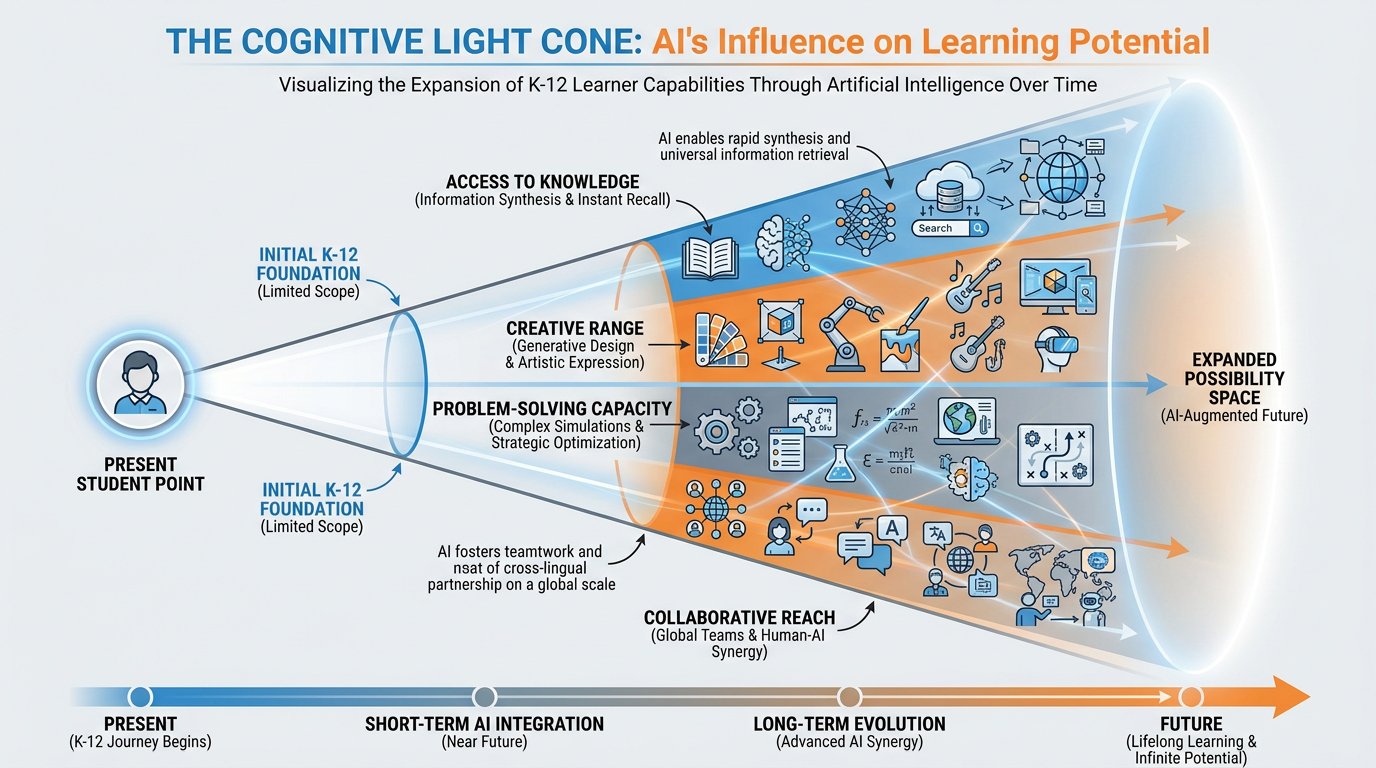

Physicists use the concept of a light cone to describe which regions of spacetime can be causally connected to a given event. Because nothing travels faster than light, only events inside an event’s past light cone can influence it, and the event itself can influence only what lies inside its future light cone. Everything outside the cone is causally disconnected.

I want to apply this structure to learning.

Figure 6: The Cognitive Light Cone. Without AI augmentation, the cone of what any individual learner can access, create, and understand expands gradually, bounded by time, resources, and individual cognitive bandwidth. With AI augmentation, the cone expands faster and further. The question is not whether to expand it; it will expand either way. The question is whether you help your students navigate the expanded space with judgment.

Think of each student as a point in the present. Their cognitive light cone — the space of things they can understand, create, and solve — expands over time as they learn. More knowledge means more connections. More skills mean more tools. More experience means more frames of reference.

Without AI, that expansion is bounded by the student’s individual cognitive bandwidth, time, and access to resources. A student who can’t afford a private tutor, a student who doesn’t speak English at home, a student whose school doesn’t offer advanced courses — their light cone expands more slowly, not because of innate capacity, but because of access.

AI changes the shape of the cone. Not by replacing the student’s own cognitive development — you can’t shortcut that — but by removing access barriers, by providing scaffolding that doesn’t require an expert human to be physically present, by making it possible for a student in Overtown or Opa-locka to have the same quality of feedback on their writing as a student in a private school in Coral Gables.

This is the genuinely radical promise. Not that AI makes learning easier (it can, disastrously, when misused). Not that AI makes teachers obsolete (it doesn’t). But that AI can equalize the light cone — can make the expansion of cognitive possibility less dependent on accidents of birth, geography, and socioeconomic position than it has ever been.

Designing for tomorrow’s minds means designing for this: using AI to expand what every student can attempt, while using human teaching to ensure that the expansion happens with depth, not just breadth.

8.7 New Roles for Teachers (That Don’t Have Names Yet)

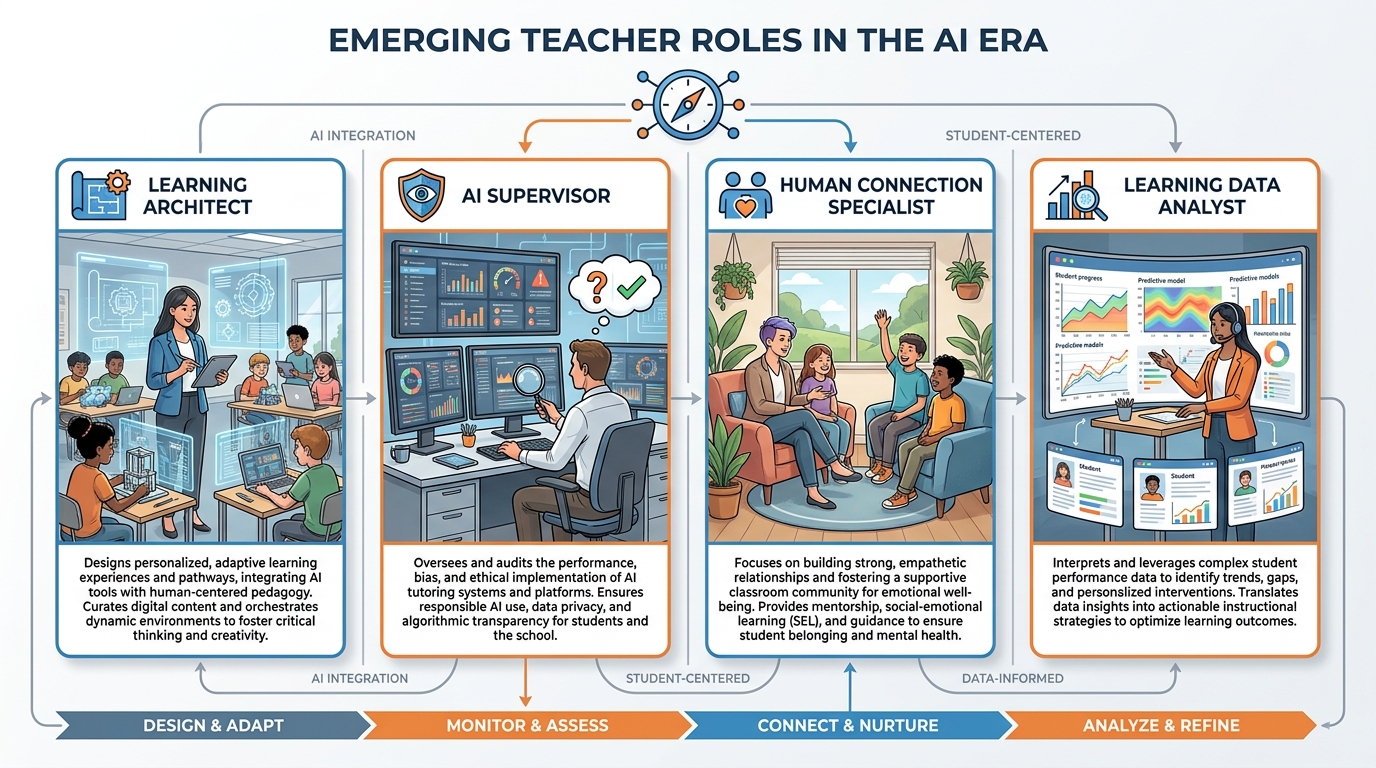

The industrial model of teaching had a job description that was essentially stable for a century: expert, deliverer, assessor, manager. You knew the content. You presented it. You tested retention. You maintained order. This model worked — imperfectly and unevenly — for a world that needed graduates who could perform standardized tasks reliably.

That job description is no longer sufficient.

Figure 7: Emerging Teacher Roles. These roles don’t have clean titles yet. You’ll find yourself doing all four of them in a single class period, without a raise, without a new contract, and often without adequate training. That’s the current reality. The goal of this chapter is to help you see them clearly enough to do them well.

Here are the emerging roles — imperfectly named, genuinely necessary:

The Learning Architect. This is the designer role. Instead of asking “what am I going to teach today?”, the Learning Architect asks “what experience am I going to create, and what cognitive work will students be required to do?” This means designing backwards from desired thinking — not desired content knowledge, but desired cognitive development. What kind of problem-solving? What kind of analysis? What kind of perspective-taking? The AI can scaffold almost any content. The human teacher designs the encounter with it.

The AI Supervisor. This role will be new to everyone. It means auditing the AI tools your students and your school use — checking for bias, inaccuracy, cultural insensitivity, and pedagogical mismatch. It means being the adult in the room who reads the AI-generated content before it reaches students, who questions the platform’s claims about its own effectiveness, who pushes back when the tool is producing outputs that undermine rather than support learning. This is quality control work. It requires both technical understanding and pedagogical judgment.

The Human Connection Specialist. As AI handles more of the routine instructional scaffolding, the teacher’s comparative advantage in human connection becomes more valuable, not less. This means investing deliberately in the relationship infrastructure of your classroom: the rituals that build community, the conversations that go beyond content, the moments of genuine curiosity about each student as a whole person. These are not “extra” activities squeezed in around the real curriculum. For many students, they are the real curriculum — the conditions that make everything else possible.

The Learning Data Analyst. AI generates more data about student learning than any teacher has ever had access to. The opportunity is real: genuine insight into where individual students are struggling, what misconceptions are widespread in the class, which interventions are working. But the data is only as good as the questions you ask of it — and as the values you bring to interpreting it. This role means developing the ability to read AI-generated learning dashboards critically, to notice when the data is leading you somewhere useful and when it’s leading you astray.
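To make the Learning Data Analyst role concrete, here is a minimal sketch of the kind of question a dashboard answers behind the scenes: which wrong answer, if any, did a large share of the class choose? Everything in it is an assumption made for illustration, including the student names, the responses, the answer key, and the 50% cutoff. It does not reflect any particular platform’s export format.

```python
from collections import Counter

# Invented responses: student -> {question: chosen answer}. Hypothetical data.
responses = {
    "student_a": {"q1": "B", "q2": "C"},
    "student_b": {"q1": "B", "q2": "A"},
    "student_c": {"q1": "D", "q2": "C"},
    "student_d": {"q1": "B", "q2": "C"},
}
answer_key = {"q1": "D", "q2": "C"}


def common_wrong_answers(responses, answer_key, min_share=0.5):
    """Return {question: (wrong_answer, share_of_class)} for questions where
    a single wrong answer was chosen by at least `min_share` of the class."""
    flagged = {}
    class_size = len(responses)
    for question, correct in answer_key.items():
        # Count each wrong answer given for this question.
        wrong = Counter(
            answers[question]
            for answers in responses.values()
            if question in answers and answers[question] != correct
        )
        if wrong:
            answer, count = wrong.most_common(1)[0]
            if count / class_size >= min_share:
                flagged[question] = (answer, count / class_size)
    return flagged


print(common_wrong_answers(responses, answer_key))
```

The tally can tell you that three of four students chose "B" on the first question. It cannot tell you why. Working out the misconception behind that choice, and deciding whether the question itself was badly written, is still your judgment to make.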

A Caution About New Role Creep

The emergence of these new roles does not mean the old ones disappeared. Teachers still need to know their content deeply. They still need to be skilled at direct instruction. They still need to manage classrooms effectively. What’s changed is the distribution of time and energy — and that distribution will need to shift.

The danger is what might be called “role creep without resource adjustment”: the expectation that teachers will add these new roles on top of an already full job description, without reduction in class size, without adequate preparation time, without compensation, and without training. That is not a professional development challenge. That is a labor conditions challenge. And teachers should name it as such.

8.8 The Threats Worth Worrying About

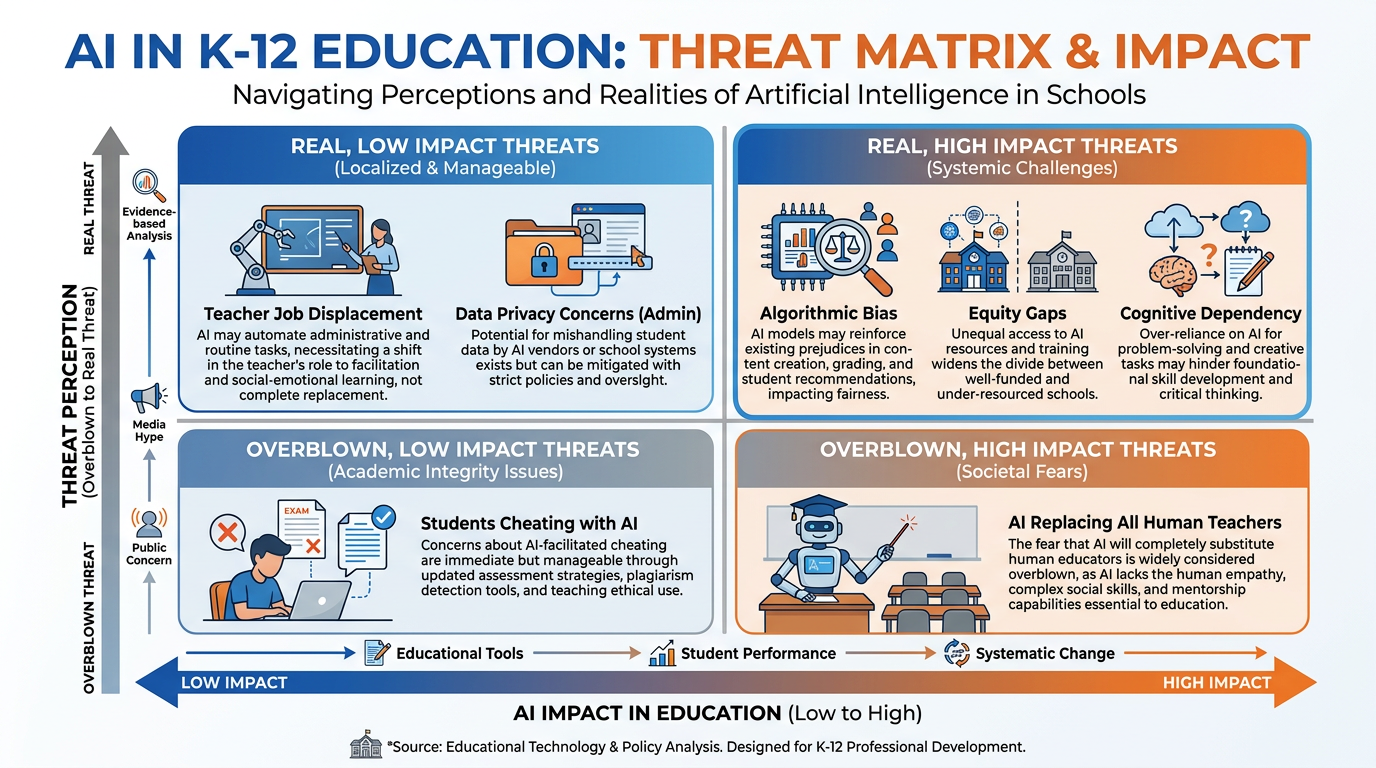

This book has been largely optimistic — because the honest view of AI in education, when you cut through both the hype and the panic, is more opportunity than threat. But “more opportunity” does not mean “no threat.” There are genuine risks worth real worry.

Figure 8: The Threat Matrix. Some risks are real and high-impact. Others are real but manageable. Some are overblown, not because they aren’t happening, but because the proposed responses are often worse than the problem. Knowing which is which is a professional competency.

Cognitive dependency. The most serious educational threat is also the most invisible. Students who consistently use AI to bypass the cognitive work of learning — not just occasionally, not as a scaffold, but habitually, as a replacement for thinking — are not building the cognitive infrastructure they need. They are producing outputs without developing capacities. This shows up slowly: not in this year’s grades, but in five years’ ability to function in situations where the AI isn’t available, or isn’t right, or isn’t sufficient. The students who will be most affected are the ones who lack the metacognitive awareness to notice that they’re not learning.

Algorithmic inequity. AI systems trained primarily on data from well-resourced, English-dominant, culturally mainstream contexts will perform worse for students from other contexts. When those systems are deployed at scale in under-resourced schools, the gap between the performance of AI for advantaged students and the performance of AI for disadvantaged students can amplify rather than close existing inequities. This is not a hypothetical risk. Studies of early AI tutoring deployments in high-poverty districts have already documented this pattern. It requires active counter-pressure from educators, administrators, and policymakers.

The surveillance state of learning. AI-powered learning platforms generate granular behavioral data. That data has commercial value, research value, and regulatory value. The history of EdTech data suggests that it will be used — sooner or later — for purposes beyond the ones disclosed to parents at sign-up. Students in K-12 settings cannot meaningfully consent to data collection about their learning behaviors, errors, and developmental trajectories. This is a genuine ethical problem that is currently being papered over by terms-of-service agreements that no parent reads and that no child can evaluate.

Teacher deskilling. If AI becomes the primary designer of lessons, the primary generator of feedback, and the primary adapter of instruction, teachers risk losing the skills those tasks require — skills that are also the foundation of the irreplaceable human roles. Pilots who rely entirely on autopilot lose manual flying proficiency. Teachers who rely entirely on AI-generated lesson plans lose pedagogical design judgment. This is not an argument against using AI tools. It is an argument for using them deliberately, with intention to maintain — not replace — your own professional capacities.

8.9 The Threats That Are Overblown

Fairness requires naming the risks that aren’t as serious as they’re often portrayed.

AI will replace teachers. This is the most common fear and the most thoroughly unsupported by evidence. Teaching is one of the most irreducibly human professions on the planet. The skills that make a teacher effective — relational attunement, contextual judgment, the ability to notice and respond to the whole child — are precisely the skills that AI cannot replicate. What AI will change is the task composition of teaching, not the irreplaceability of teachers. Districts that deploy AI as a cost-cutting strategy to reduce staffing are misunderstanding both AI and education. They will produce worse outcomes. The evidence, when it accumulates, will be unambiguous.

AI cheating will destroy academic integrity. Cheating is not new. Students have always found ways to avoid doing their own work. What has changed is the sophistication of the available tools and the near-impossibility of reliable AI detection. The response to this is not detection — it is better assignment design. If an assignment can be fully completed by AI without any student thinking, the assignment needs to be redesigned. That redesign is a professional opportunity, not a defeat. The teachers who are redesigning their assessments now are building a more honest and more powerful curriculum than the one AI supposedly threatened.

Students who use AI will be helpless without it. This is the “calculators will make everyone unable to do arithmetic” fear, reapplied. The evidence from calculator use in mathematics classrooms is instructive: students who learned to use calculators thoughtfully did not lose mathematical reasoning. Students who were never taught when and why to use calculators, and were just handed the tool, sometimes did. The variable is pedagogical intentionality — not the tool itself.

The Real Lesson of the Calculator Panic

In the 1970s and 1980s, the widespread introduction of calculators into mathematics classrooms provoked an intense cultural panic. Critics argued that students would lose the ability to compute mentally, would become dependent on machines, and would stop understanding numbers.

Decades of subsequent research produced a nuanced answer: calculators used thoughtfully, in a curriculum designed to leverage them, produced students who were better at mathematical reasoning — not worse. Calculators used as a bypass for learning basic number sense produced exactly the deficits the critics feared.

The variable was never the calculator. It was the pedagogy.

Apply this lesson to every AI tool you introduce. The technology is not the risk. Pedagogical thoughtlessness is the risk.

8.10 Your Personal 10-Year Plan as an Educator

The transformation Mezirow describes does not happen to you passively. It happens because of choices you make — choices about what to learn, what to practice, what community to invest in, what risks to take.

Here is a framework for thinking about the next decade of your professional life. Not a prescription — a scaffold.

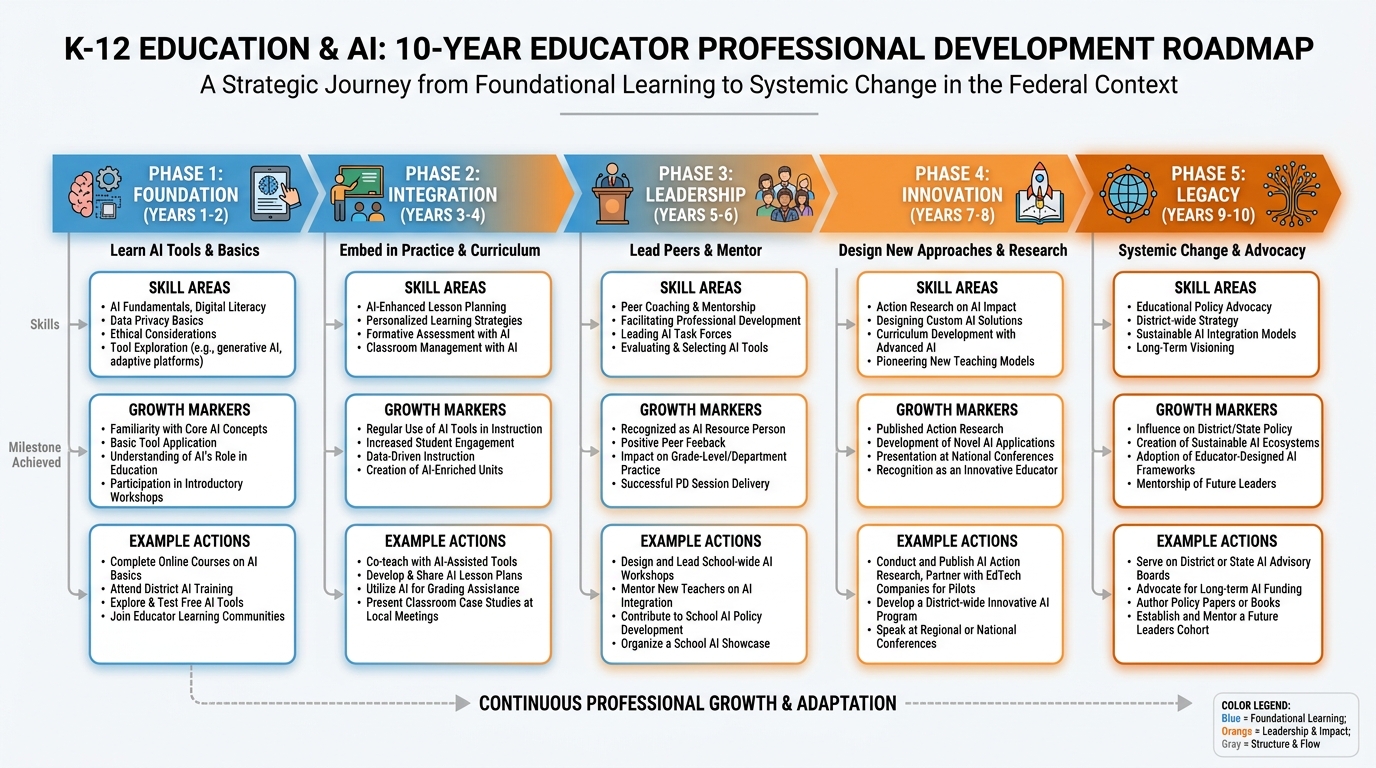

Figure 9: Your 10-Year Educator Roadmap. The phases are not rigid. You may move through them faster or slower. You may be simultaneously at different phases in different domains. The value of the framework is not its precision; it is the habit of intentional forward-planning in a profession that is often too reactive to be strategic.

Phase 1: Foundation

What you’re doing: Getting fluent. Learning what AI actually is, what the major tools do, and how they interact with what you already know about learning.

Key actions:

- Complete this course
- Build and regularly use your Gem library
- Develop and implement your classroom AI use policy
- Find two or three trusted colleagues who are also learning, and create a small professional learning community

Success indicator: You can explain AI tools to parents and administrators in a way that is accurate, calm, and grounded in learning science. You are not afraid of the conversation.

Phase 2: Integration

What you’re doing: Embedding AI into your core practice, not as a special activity but as an integrated tool, in the same way you use whiteboards, discussion protocols, or backward design.

Key actions:

- Redesign at least one major unit entirely around AI-augmented learning
- Experiment with two new assessment modalities (oral, portfolio, performance)
- Document what’s working and what isn’t, and build your own evidence base

Success indicator: AI use in your classroom is unremarkable to students and to you. It’s just part of how the room works.

Phase 3: Leadership

What you’re doing: Becoming a resource for colleagues. You have enough fluency and enough evidence from your own practice to lead.

Key actions:

- Facilitate a professional development session for your school or district on one aspect of AI-augmented teaching
- Mentor a newer teacher through the foundation phase
- Contribute to your school’s AI use policy development rather than just complying with it

Success indicator: When there’s a question about AI in education at your school, people come to you. Not because you know everything, but because you know how to think about it.

Phase 4: Innovation

What you’re doing: Designing genuinely new approaches. You have enough experience to see the limits of current frameworks and the confidence to try things that don’t have a model yet.

Key actions:

- Design a learning experience or curriculum unit that couldn’t have existed before AI
- Share it beyond your school: publish, present, open-source
- Start asking bigger questions: what should assessment look like in your subject area in five years?

Success indicator: You are creating, not just implementing. Your practice is a source of ideas for others, not just a destination.

Phase 5: Legacy

What you’re doing: Thinking systemically. Your practice, your leadership, and your innovation have accumulated into something that has shaped not just your students but your school and your profession.

Key actions:

- Contribute to curriculum, policy, or professional standards at the district, state, or national level
- Write or speak about what you’ve learned in a form that outlasts your current position
- Ask: what do I want the teachers I’ve mentored to be doing in ten years because of our time together?

Success indicator: You can trace a line between your choices in Year 1 and the changes visible in your school or district now. The evidence of your transformation is in other people.

The ten-year plan is not about becoming a technology expert. It is about becoming a more effective teacher in the world as it is — a world where AI is infrastructure, where the demands on human teaching have shifted rather than diminished, and where the educators who thrive will be the ones who approached this moment with curiosity, rigor, and care.

8.11 A Letter to the Teacher of 2035

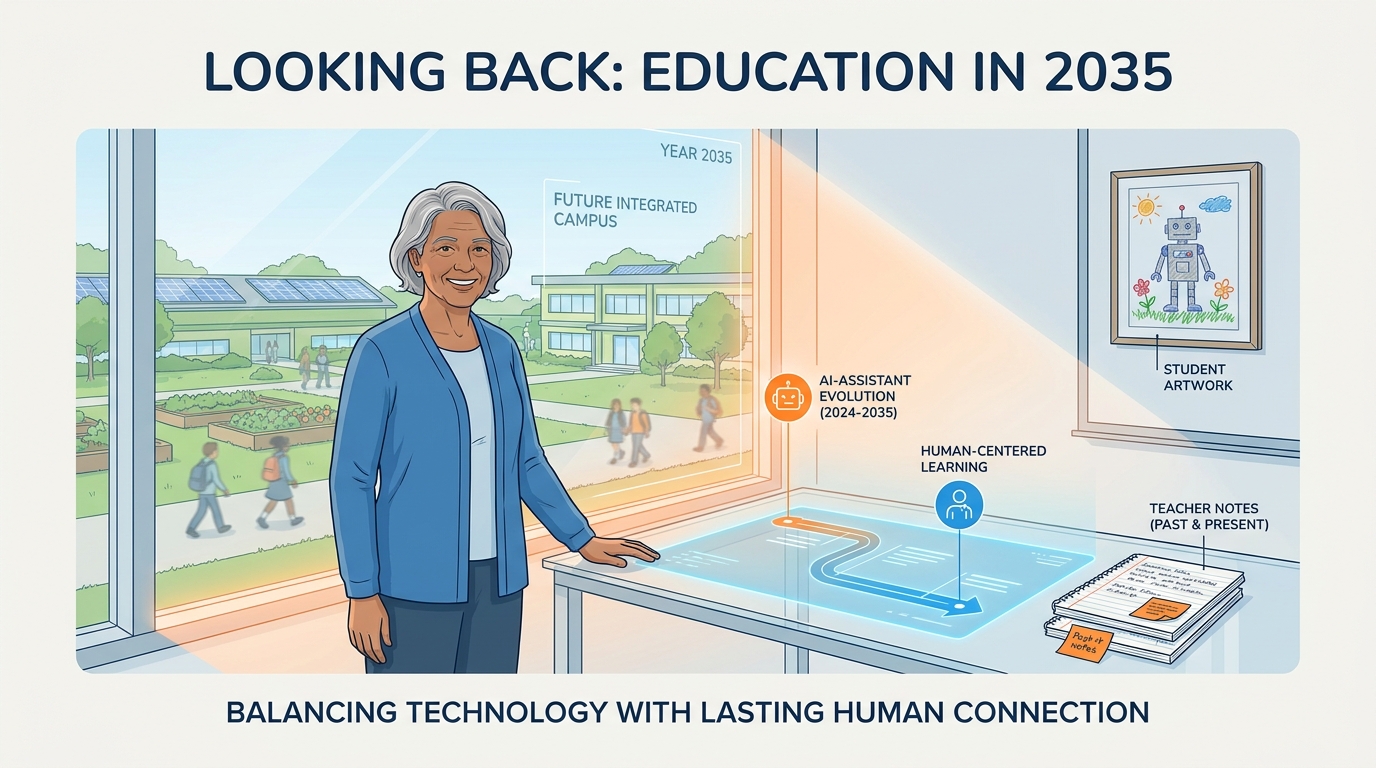

Figure 10: The Teacher of 2035. You are already becoming this person. The choices you make in the next ten years (what to learn, what to practice, what to protect, what to let go) are writing this letter right now. What will it say?

To the teacher reading this in 2035,

You made it.

I know that sounds small. It isn’t. The years between now and then were not easy for this profession, and the fact that you are still here — still in a classroom, still doing this work, still caring enough to think about it seriously — is not a small thing. It is the thing.

I’m writing from 2025, which I imagine looks to you the way 1995 looks to me now: quaint in some ways, prescient in others, and marked most of all by the particular anxiety of people who knew something large was coming and weren’t yet sure what it meant.

We were worried about a lot of things. Some of our worries turned out to be right. The equity gaps didn’t close themselves. The surveillance risks materialized in ways we hadn’t fully anticipated. The cognitive dependency problem became visible in cohorts of students who grew up using AI before they’d built the underlying capacities it was supposed to support. These were real problems, and I hope by the time you’re reading this, enough people took them seriously enough to address them with more than a terms-of-service update.

But we were also wrong about some things, and I want to tell you what I think we got most wrong.

We worried that AI would make teachers obsolete. It didn’t. But what we didn’t fully predict was the opposite: that AI would make the human parts of teaching more visible, more valued, and more clearly irreplaceable than they’d been in a long time. When the content delivery, the adaptive practice, and the first-pass feedback all moved to machines — the things that remained were the things that had always mattered most. Presence. Relationship. Witness. Judgment. Care.

I hope that by 2035, those things have a different status in schools than they did in 2025. I hope the teacher who is extraordinary at human connection is recognized as clearly as the teacher who produces high test scores. I hope the work of creating belonging — the invisible curriculum that makes everything else possible — has found a way into the professional evaluation systems that still, in my time, have no measure for it.

I want to tell you something about the students you’ve taught.

Some of them passed through your classroom over the last ten years. You know them, or you know the versions of them you encountered during those critical months when you happened to be their teacher. There are students who are, today in 2035, doing things in the world that they will tell people began in your classroom. Not because of the content you covered. Because of who you were when you covered it. Because of what it felt like to be in a room with someone who believed in them before they believed in themselves.

They may not have told you. Students rarely do — not in the moment, not even in the years right after. The gratitude tends to arrive late, quietly, in a message or an encounter years down the line when they’ve accumulated enough life experience to look back and name what made the difference.

You are making that difference right now. Even when you can’t see it.

I also want to say something about the machine.

You have lived with AI for ten years now. I imagine it has become ordinary in the way electricity is ordinary — present everywhere, expected everywhere, alarming only when it fails. I imagine there are tools in your classroom that you can’t imagine teaching without, and I imagine there are things about teaching that you protect fiercely from the machine because you understand, from experience, why they have to stay human.

I hope you are still arguing. Still pushing back. Still asking: what is this tool doing to my students’ ability to think, not just their ability to produce? Still being the person in the room who slows down when everything is speeding up, who asks the uncomfortable question when everyone is ready to move on, who notices the student who is quiet in a way that’s different from the usual quiet.

That is not a technological skill. It cannot be automated, delegated, or optimized. It is the oldest skill in teaching and it is the most important one we have, in 2025 and in 2035 and, I suspect, in any year you can imagine.

One more thing.

You were afraid, in 2025. Afraid that the ground would shift so far beneath you that you wouldn’t recognize your own craft. Afraid that what you’d spent years building — the intuitions, the relationships, the hard-won understanding of how learning actually works — would be made irrelevant by a technology you didn’t choose and didn’t design.

It wasn’t irrelevant. It was never irrelevant. Every year of careful, intentional, student-centered teaching you did before AI arrived made you better at teaching with it — because you had a standard to hold it to. You knew what genuine learning looked like. You knew what hollow output looked like. You knew the difference because you had earned the difference.

The disorienting dilemma was real. The transformation was hard. And you came out the other side not despite who you were as a teacher, but because of it.

That is what Mezirow was trying to tell us. The crisis doesn’t erase you. It reveals you.

Thank you for staying.

With admiration and solidarity,

Dr. Ernesto Lee
Miami Dade College, 2025

1.12 Chapter Summary

This chapter began with a disorienting dilemma — because that is where all real transformation begins. Mezirow’s ten-phase cycle of transformative learning is not a theoretical nicety. It is the map of what is currently happening to the teaching profession, and understanding the phases means you can navigate them with intention instead of just surviving them.

The next decade will bring AI tutors as standard infrastructure, multimodal assessments replacing text-only designs, personalized curriculum engines with genuine promise and genuine peril, and new teacher roles — Learning Architect, AI Supervisor, Human Connection Specialist, Learning Data Analyst — that are already emerging without yet having adequate names or adequate training pathways.

What will not change: human care, human witness, human relationship, and human bandwidth. These are not soft skills. They are the load-bearing structure of genuine education, and no amount of algorithmic sophistication can substitute for them.

The cognitive light cone concept offers a frame for the genuine possibility: AI can equalize access to the expansion of learning potential in ways that were structurally impossible before — but only if human teaching ensures that expansion happens with depth, judgment, and ethical grounding.

The threats worth taking seriously are cognitive dependency, algorithmic inequity, the surveillance state of learning, and teacher deskilling. The threats that are overblown include teacher replacement, the permanent destruction of academic integrity, and the permanent helplessness of students who use AI tools.

Your personal 10-year plan is not about becoming a technology expert. It is about becoming the teacher you were always trying to become — more effective, more strategic, more connected to what actually matters — in the world as it now is.

And finally, a letter to the teacher of 2035. You are already writing it.

1.13 📝 Case Study & Discussion Board (2 pts)

1.13.1 Case Study: The Teacher Who Stayed

Marisol has been teaching eighth-grade English Language Arts at a Miami-Dade public school for eleven years. In 2024, she was one of the early adopters of AI tools in her classroom — not because she was required to, but because she was curious. By 2026, her practice has been transformed in ways she didn’t predict.

The essays she assigns are shorter now. More oral. More conversational. She dropped the standard five-paragraph format requirement two years ago because AI made it meaningless. Instead, her students write one genuine paragraph (one claim, one piece of evidence, one explanation) and then defend it to her in a three-minute conversation while she takes notes on their reasoning.

Her Gemini Gems generate first-draft feedback on student writing before she reads it. She spends her marking time doing something different: looking for the gaps between what the AI flagged and what she notices. The AI catches surface errors and structural issues. She looks for the places where the student’s thinking is genuine, where it’s borrowed, and where there’s a seed of something worth pulling on.

One of her students — a boy who came to her class two years behind grade level in reading — recently submitted a piece of writing that the AI rated as grade-appropriate. Marisol read it and felt something wrong. She asked him to sit with her. “Tell me about this,” she said. He couldn’t explain what he’d written. He’d asked the AI to help him “improve” it, and the improvement had replaced him.

She wasn’t angry. She asked him: “What part of this is you?” He pointed to one sentence — a raw, clumsy, completely genuine observation about his own family. “That,” she said. “That’s the one I want to read. Write me five more sentences like that one.” He did. They were rough and honest and entirely his. She graded them higher than the polished AI-shaped paragraphs around them.

Discussion Prompt (minimum 250 words):

1. What did Marisol recognize in her student that the AI could not? Ground your analysis in at least one concept from this chapter: Mezirow’s transformative learning, the irreducible human elements from Section 8.5, or the cognitive dependency risk from Section 8.8.
2. What pedagogical principle does her “five more sentences like that one” response embody? How does it relate to productive struggle, desirable difficulties, or Piaget’s constructivism as discussed earlier in this book?
3. What would Marisol’s practice look like in 2035 if she continues on this trajectory? What is the 10-year arc you can see in her story?

Discussion Guidelines:

- Your initial post must be a minimum of 250 words.
- You must include at least one scholarly citation (APA format) from this chapter, the course, or your own research.
- You must respond meaningfully to at least two of your peers with substantive engagement: not just “I agree” but a genuine extension or challenge of their argument (minimum 75 words per response).

Due: Initial post by Wednesday, responses by Sunday.

1.14 🧪 Hands-On Lab: Build Your Five-Year Professional Development Roadmap (10 pts)

1.14.1 Overview

This lab is about you. Not your students, not your classroom, not your school’s AI policy. You. By the end of this lab, you will have built a concrete, personalized, AI-assisted five-year professional development roadmap — a living document you can actually use.

Time required: Approximately 90 minutes

Tools: Gemini (gemini.google.com), Google Docs

Points: 10

1.14.2 Step 1: The Self-Assessment (20 min)

Before Gemini can help you plan, you need to be honest about where you are.

Open a new Google Doc. Title it: [Your Name] — Five-Year AI Professional Development Roadmap

Answer these five questions in writing before opening Gemini. Be honest. No one grades this part except you:

1. What AI tools have you actually used in the last six months? (Not “heard of” but actually used, with real students or for real classroom work.)
2. What is the one thing about AI in education that still genuinely confuses or unsettles you?
3. What is the one aspect of your teaching practice that AI has most clearly made better?
4. What is the one aspect of your teaching practice that you are most protective of, the thing you most want to ensure AI doesn’t touch?
5. If your teaching practice in five years looks exactly the same as it does right now, what will you have missed?

These questions are your starting point. They are more honest than any survey instrument, and Gemini cannot answer them for you.

1.14.3 Step 2: The AI Skill Mapping Conversation (25 min)

Open Gemini and begin a conversation using this prompt:

“I’m a K-12 teacher completing a professional development course on AI in education. I’ve completed the self-assessment below. Based on my answers, help me identify: (1) my strongest current AI skill areas, (2) my most significant growth gaps, and (3) three specific, concrete professional development actions I could take in the next 12 months to close those gaps. Here are my self-assessment answers: [paste your five answers].”

Have a real conversation with Gemini. Push back on its suggestions if they don’t fit your context. Ask follow-up questions. Ask it to be more specific. Ask it to explain the reasoning behind its recommendations. Ask it what assumptions it’s making about your teaching context and whether those assumptions are correct.

The goal is not to get a list. It is to understand your own professional development landscape well enough to make real choices about it.

1.14.4 Step 3: Build the Roadmap (25 min)

Return to your Google Doc. Using your self-assessment and the Gemini conversation as inputs, build your five-year roadmap. Structure it like this:

Year 1: Foundation

- Current state (honest, specific)
- Target state (concrete, achievable)
- Two to three specific actions with realistic timelines
- How you’ll know you’ve gotten there

Year 2: Integration

- Building on Year 1 outcomes
- One major change to your practice or your classroom environment
- Who you’ll learn with (professional learning community, mentor, colleague)

Year 3: Leadership

- One way you’ll share what you’ve learned with others
- One contribution to your school or district’s AI approach

Year 4: Innovation

- One genuinely new practice or experience you’ll design that didn’t exist before AI
- One question you want to answer from your own classroom research

Year 5: Legacy

- What you want your practice to look like
- What you want the teachers you’ve mentored to be doing
- A one-sentence statement of your professional identity as an AI-era educator

This is not a fantasy document. It should be challenging and achievable. Use Gemini to pressure-test it:

“Here is my five-year roadmap draft. What assumptions am I making that might not hold? What have I underestimated? What have I left out?”

Revise based on what you learn.

1.14.5 Group Build (20 min)

Now work with your group to tackle something bigger than your individual roadmap.

Use Gemini to help your group identify the biggest professional development gap in your school or district — not a hypothetical gap, but a real one that your group members collectively know exists. Use the AI to sharpen the problem statement, identify its causes, and develop a concrete proposal for addressing it. This could be a PD program, a policy change, a resource, a partnership, a process — whatever the problem calls for.

Be ready to present to the class:

- The problem your group identified and why it matters
- How AI helped you think through it, and where it fell short
- What your proposed solution looks like
- What it would take to actually implement it

The goal is not a polished presentation. It is a genuine conversation about a real problem, powered in part by AI, evaluated by human judgment.

1.14.6 Submission

Submit to Canvas:

- A link to your Five-Year Roadmap Google Doc (sharing set to “Anyone with the link can view”)
- A 200-word reflection: What did Gemini get right about your professional development needs? What did it miss? What does that tell you about the limits of AI as a professional development tool?

Grading rubric:

| Criterion | Points |
|---|---|
| Self-assessment complete and honest (all five questions answered) | 2 pts |
| Five-year roadmap addresses all five phases with concrete specifics | 4 pts |
| Evidence of real Gemini dialogue and critical revision | 2 pts |
| Group build participation and class discussion | 2 pts |
| Total | 10 pts |

1.15 🎯 In-Class Assignment (10 pts)

Details and instructions will be provided in class.

Points: 10

1.16 Glossary

Transformative Learning: Mezirow’s (1978; 1991) theory of adult learning through a fundamental shift in frames of reference, initiated by disorienting dilemmas and proceeding through critical reflection to new perspectives and reintegrated action.

Disorienting Dilemma: In Mezirow’s framework, an experience or encounter that cannot be accommodated within the learner’s existing assumptions and therefore initiates the transformative learning process.

Frame of Reference: The set of assumptions, expectations, and interpretive filters through which an individual gives meaning to experience. Transformative learning changes the frame itself, not just its contents.

Cognitive Light Cone: A concept adapted from physics describing the expanding space of what a learner can think, create, and solve over time. AI has the potential to expand the cognitive light cone, particularly for learners with limited access to traditional educational resources.

Learning Architect: An emerging teacher role focused on designing cognitive experiences rather than delivering content: asking what thinking students will be required to do, rather than what information they will receive.

AI Supervisor: An emerging teacher role involving auditing AI tools for accuracy, bias, cultural appropriateness, and pedagogical soundness. This is the quality control function that AI deployment in schools requires.

Human Connection Specialist: An emerging teacher role investing deliberately in the relationship infrastructure of the classroom: the conditions of trust, belonging, and safety that make learning possible.

Cognitive Dependency: A risk pattern in which students habitually use AI to bypass cognitive work rather than scaffold it, resulting in output production without underlying capacity development.

Algorithmic Inequity: The tendency of AI systems trained on majority-context data to perform less accurately for students from non-dominant linguistic, cultural, or socioeconomic backgrounds, potentially amplifying rather than closing existing educational disparities.

Multimodal Learning: Learning that engages multiple sensory and cognitive channels simultaneously (text, audio, visual, simulation, conversation) in ways that leverage the complementary strengths of different modes of representation (Mayer, 2001).

Personalized Curriculum: AI-driven instructional systems that dynamically adapt content, pacing, difficulty, and modality to individual student learning profiles in real time.

Teacher Deskilling: The gradual erosion of professional capabilities when AI tools consistently perform tasks that previously required and developed those capabilities, analogous to pilots losing manual flying proficiency through over-reliance on autopilot.

Chapter 8 of 8 — AI Thinking for Educators · Dr. Ernesto Lee · Miami Dade College